The core of the issue lies in the application of a narrow tool to solve broad, complex business problems. A/B testing is exceptionally effective at answering focused, binary questions: which of these two headlines resonates more, or which image layout reduces friction? However, it is fundamentally ill-equipped to address systemic issues such as brand positioning, unclear value propositions, confusing navigation structures, or complex pricing strategies. When teams treat A/B testing as a panacea, they often find themselves "polishing the brass on a sinking ship," optimizing elements of a fundamentally broken user experience rather than addressing the root causes of underperformance.

The Historical Context and Normalization of A/B Testing

The rise of A/B testing as the default industry standard can be traced back to the early 2000s, when tech giants like Google and Microsoft popularized the practice. A famous case study from Microsoft’s Bing team serves as a primary example of the method’s potential. By merging two ad title lines into a single, longer headline, the team saw a click-through rate increase that generated over $100 million in additional annual revenue. Such high-profile successes established a cultural norm within the tech sector: every change, no matter how small, should be validated through a controlled experiment.

Today, Microsoft reportedly conducts more than 20,000 experiments annually across its Bing platform alone. This culture of experimentation was democratized by the arrival of platforms like Optimizely, VWO, and FigPii, which simplified the technical requirements for launching tests. However, this democratization has come with a trade-off. According to industry statistics, approximately 77% of all digital experiments are simple A/B tests involving only two variants. This suggests that the majority of teams are defaulting to the simplest possible approach, regardless of whether it is the most informative or appropriate for the business challenge at hand.

The Statistical Reality: Why Small Teams Struggle

One of the most significant, yet frequently ignored, hurdles in A/B testing is the requirement for statistical power. To detect a small difference, such as a 1% or 2% relative lift in conversion rate, with a high degree of confidence, a website typically needs hundreds of thousands of visitors per variant, and more still when the baseline conversion rate is low, because the required sample size grows with the inverse square of the effect being detected. For many e-commerce brands, even those generating significant monthly sessions, achieving this level of traffic within a reasonable timeframe is impossible.
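To make the traffic requirement concrete, here is a minimal sketch of the standard normal-approximation sample-size formula for comparing two conversion rates. The function name `visitors_per_variant`, the 5% significance level, and the 80% power default are illustrative assumptions, not figures from any particular testing platform.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided
    two-proportion z-test (normal approximation, equal split).

    baseline_rate: control conversion rate, e.g. 0.10 for 10%
    relative_lift: smallest relative improvement worth detecting, e.g. 0.02
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical store: 10% baseline conversion, three candidate effect sizes.
for lift in (0.02, 0.05, 0.20):
    needed = visitors_per_variant(0.10, lift)
    print(f"relative lift {lift:.0%}: about {needed:,} visitors per variant")
```

Because the effect size is squared in the denominator, halving the lift a team wants to detect roughly quadruples the traffic required, which is why small improvements are out of reach for low-traffic sites.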

When traffic is insufficient, teams often fall into three common failure modes. First, they may end the test too early, resulting in "false positives" where a perceived win is actually just statistical noise. Second, they may run tests for too long—six to twelve weeks—which slows down the pace of learning and prevents the team from making agile decisions. Third, they may focus exclusively on "big swings" that are likely to produce larger, detectable effects, thereby ignoring the nuanced improvements that contribute to a polished brand experience.
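The first failure mode can be illustrated with a small simulation. The sketch below runs A/A tests (both arms share the same true conversion rate, so every "win" is a false positive) and compares checking the result repeatedly against checking once at the end. All parameters, including the 10% conversion rate and the peek interval, are hypothetical choices for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def z_test_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(n_sims=300, visitors=2000, peek_every=200,
                      rate=0.10, alpha=0.05, seed=7):
    """Return (false-positive rate when stopping at any significant peek,
    false-positive rate when testing once at the planned end).

    `visitors` should be a multiple of `peek_every` so the last peek
    coincides with the planned end of the test.
    """
    rng = random.Random(seed)
    peeking_wins = 0
    final_wins = 0
    for _ in range(n_sims):
        conv_a = conv_b = 0
        any_peek_significant = False
        p = 1.0
        for i in range(1, visitors + 1):
            conv_a += rng.random() < rate  # one visitor per arm per step
            conv_b += rng.random() < rate
            if i % peek_every == 0:
                p = z_test_p_value(conv_a, i, conv_b, i)
                if p < alpha:
                    any_peek_significant = True
        peeking_wins += any_peek_significant
        final_wins += p < alpha  # p now holds the end-of-test p-value
    return peeking_wins / n_sims, final_wins / n_sims


peeking_rate, fixed_rate = simulate_aa_tests()
print(f"false positives with peeking: {peeking_rate:.1%}")
print(f"false positives at planned end only: {fixed_rate:.1%}")
```

Since the final look is also one of the peeks, the peeking rate can never be lower than the fixed-horizon rate; in practice it is usually much higher, which is the mechanism behind "wins" that vanish after launch.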

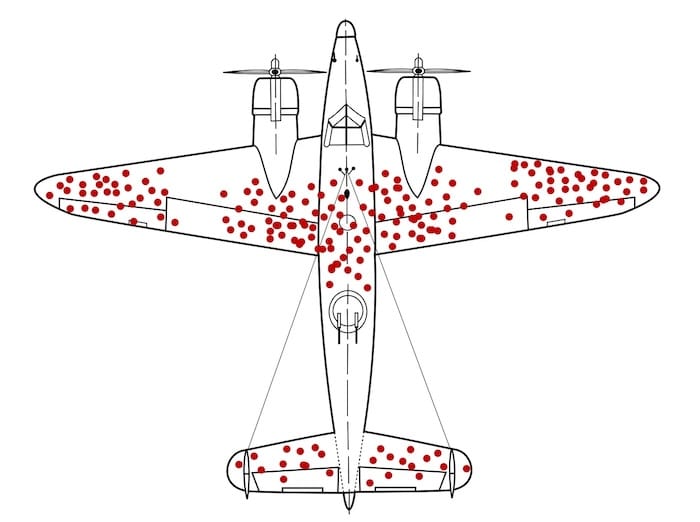

The "Survivorship Bias" in Data Interpretation

A critical analytical flaw that comes with over-reliance on A/B testing is the tendency to focus only on the "survivors": the users who successfully navigate the funnel. This mirrors the famous World War II story of statistician Abraham Wald and the military’s analysis of returning aircraft. While the military wanted to reinforce the areas where planes showed the most bullet holes, Wald pointed out that they were only seeing the planes that survived. The planes that were hit in the engines never returned to be analyzed.

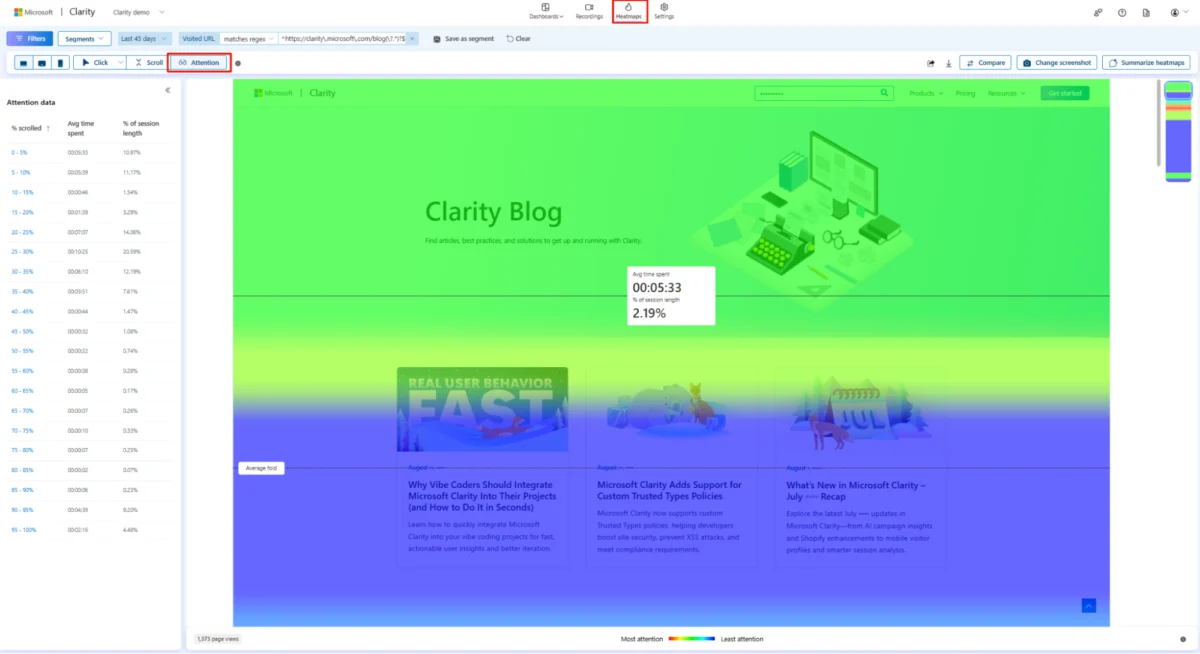

In a digital context, an A/B test tells a team which version performed better among those who engaged, but it rarely explains why users dropped off. A variant might "win" because it forced a user into a specific action, not because it improved their confidence or satisfaction. Without qualitative insights—such as session recordings, heatmaps, and customer feedback—teams are essentially optimizing for "bullet holes" while ignoring the structural vulnerabilities that cause the majority of their traffic to disappear.

Short-Term Uplifts vs. Long-Term Business Health

The most dangerous aspect of the A/B testing trap is the conflict between immediate conversion metrics and long-term business outcomes. Most tests measure actions within a single session: clicks, add-to-carts, or immediate purchases. However, e-commerce health is predicated on Lifetime Value (LTV), repeat purchase rates, and profit margins.

Consider a scenario where a team tests a "buy one, get one free" offer. The test will almost certainly show a massive spike in conversions. However, if that offer attracts low-quality customers who never return, or if it erodes the brand’s perceived value and destroys profit margins, the "win" is actually a long-term loss. High-maturity teams recognize that a lift in today’s conversion rate can sometimes lead to a decline in tomorrow’s loyalty. A related caution comes from the "Jam Experiment," a psychological study in which a display of 24 varieties of jam attracted more browsers, while a display of only six varieties led to significantly more actual purchases. The metric that moves first (attention) is not always the one that matters (purchases). Optimizing for short-term engagement alone can produce "choice overload" or "clickbait" UX that boosts immediate clicks at the expense of genuine customer intent.
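The arithmetic behind this scenario can be sketched in a few lines. Every number below is invented purely for illustration (conversion rates, margins, and repeat-order counts are assumptions, not measured data), and `profit_per_visitor` is a hypothetical helper.

```python
def profit_per_visitor(conversion_rate, first_order_margin,
                       expected_repeat_orders, repeat_order_margin):
    """Expected long-run profit per visitor: first-order margin plus the
    margin from repeat orders, both weighted by the chance of converting."""
    first = conversion_rate * first_order_margin
    repeat = conversion_rate * expected_repeat_orders * repeat_order_margin
    return first + repeat


# Hypothetical control: 3% convert, $20 margin, 1.5 repeat orders on average.
control = profit_per_visitor(0.03, 20.0, 1.5, 20.0)

# Hypothetical BOGO variant: 5% convert, but the first order keeps only $5
# of margin and deal-seekers return far less often (0.2 repeat orders).
bogo = profit_per_visitor(0.05, 5.0, 0.2, 20.0)

print(f"control: ${control:.2f} per visitor")
print(f"BOGO:    ${bogo:.2f} per visitor")
```

Under these assumed inputs, the variant with the higher conversion rate earns less per visitor over time, which a single-session conversion metric would never reveal.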

Moving Toward High-Maturity Experimentation

Organizations that have successfully moved beyond basic A/B testing utilize a much broader toolkit. High-maturity experimentation involves matching the design of the experiment to the nature of the business question.

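- Sequential Testing and Holdout Groups: Sequential testing methods let teams check results at planned interim points without inflating the false-positive rate. For long-term impact analysis, mature teams use holdout groups: a small percentage of users who are kept away from a new feature for months to see how it affects retention and LTV over time.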

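- Switchback and Quasi-Experiments: In environments where users interact (like marketplaces or delivery apps), simple A/B splits can cause data "leakage," because a change shown to one group also affects the other. Switchback tests, which alternate the experience for the entire market over specific time intervals, provide cleaner data for algorithmic or pricing changes. Where randomization is not feasible at all, quasi-experimental methods estimate the effect by comparing similar groups or time periods.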

- Research-Driven Hypotheses: Rather than testing random ideas from a backlog, high-maturity teams ground every test in evidence. A robust hypothesis follows a strict format: "Because we saw [X evidence from research], we believe [Y user problem exists], so we will [Z change], and we expect [Metric] to improve."
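Two of the designs in the list above come down to how users or time windows are assigned to an experience. The following is a minimal sketch under stated assumptions: `in_holdout` and `switchback_arm` are hypothetical helpers, the salt string and 5% holdout size are arbitrary, and the strict alternation of windows is a simplification (production switchback designs often randomize the order of windows).

```python
import hashlib
from datetime import datetime, timedelta, timezone


def in_holdout(user_id: str, salt: str = "holdout-example",
               holdout_pct: float = 5.0) -> bool:
    """Deterministically place roughly `holdout_pct` percent of users in a
    long-term holdout. Hashing keeps each user's assignment stable for
    months without storing any per-user state."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # bucket in 0..9999
    return bucket < holdout_pct * 100


def switchback_arm(ts: datetime, window_minutes: int = 60) -> str:
    """Assign the whole market to one arm per time window, alternating
    between control and treatment from one window to the next."""
    window_index = int(ts.timestamp() // (window_minutes * 60))
    return "treatment" if window_index % 2 else "control"


# Usage: check the realized holdout share and two consecutive windows.
users = [f"user-{i}" for i in range(10_000)]
share = sum(in_holdout(u) for u in users) / len(users)
print(f"holdout share: {share:.1%}")

start = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(switchback_arm(start), switchback_arm(start + timedelta(hours=1)))
```

Changing the salt reshuffles which users fall into the holdout, so a team would typically fix one salt per holdout period and rotate it when a new period begins.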

Strategic Implications for the Future of CRO

The shift from "A/B testing" to "Experimentation Strategy" reflects a broader evolution in the digital economy. As customer acquisition costs (CAC) continue to rise across platforms like Meta and Google, businesses can no longer afford to rely on guesswork or incremental UI tweaks. The focus is shifting toward "Testing Big Levers"—changes that affect how users understand, trust, and value a product.

Expert analysis suggests that the most impactful experiments are those that address the "core decision moments." This includes testing the fundamental value proposition, the transparency of the pricing model, or the clarity of the shipping and return policies. For example, providing delivery-date estimates on a product page addresses a core user anxiety (uncertainty) far more effectively than changing the color of the "Add to Cart" button.

In conclusion, while A/B testing is a vital tool, it is only one part of a sophisticated growth strategy. To achieve meaningful results, digital teams must integrate qualitative research, respect statistical limitations, and align their experiments with long-term business health rather than short-term session metrics. The transition from a low-maturity "test everything" culture to a high-maturity "strategic experimentation" culture is often the difference between a brand that plateaus and one that achieves compounding growth. Organizations seeking to audit their processes should look beyond their win rates and instead evaluate the quality of their hypotheses and the depth of the business questions they are daring to ask.