For years, video content existed in a peculiar state of digital semi-visibility, often referred to as a "black box" within the search ecosystem. While marketers could meticulously craft titles, descriptions, and tags, the actual spoken words, visual cues, and on-screen text within an eight-minute explainer or a two-minute product demonstration remained largely inscrutable to search engines. This meant that the rich, carefully scripted narratives and deep insights embedded within video assets were inaccessible for granular indexing, limiting their discoverability to superficial metadata. However, this era of video obscurity is rapidly drawing to a close, driven by an unprecedented convergence of artificial intelligence technologies.

The mechanics of search are changing fast. AI-driven video indexing, built on large language models (LLMs), computer vision (CV), and automatic speech recognition (ASR), is transforming how digital content is processed and retrieved. These systems treat video not merely as a media file but as a multi-layered data source that can be read much like text. Search engines and recommendation algorithms can now analyze spoken dialogue, on-screen captions, text embedded in slides, brand logos, and even the sentiment conveyed through visual cues. This shift has moved video into what can be termed "SEO 2.0": a fully discoverable format that can rank and surface precise answers with much of the granularity of a traditional blog post or written article.

For content strategists and marketing teams, this paradigm shift necessitates an entirely new operational framework. If video is now as comprehensively indexable as written content, the imperative is to develop and implement a robust "video retrievability" strategy. This strategy must ensure that video assets are not merely uploaded, but meticulously optimized to appear prominently when users actively search for solutions to the problems that a product or service is designed to address. The days of treating video as a secondary content format, distinct from core SEO efforts, are over.

The Mechanics of a New Era: How AI Deconstructs Video Content

The transformation of video discoverability is not a singular event but the culmination of several technological breakthroughs converging. At its core, the ability of AI to "understand" video stems from its capacity to extract meaning from multiple data layers simultaneously:

- Automatic Speech Recognition (ASR): This technology converts spoken language within videos into text transcripts. Modern ASR systems are highly accurate on clear audio and increasingly robust to background noise and varied accents, allowing search engines to parse nearly every word uttered by presenters, interviewees, or voiceovers. This makes the entire verbal narrative of a video searchable, not just the keywords in its description.

- Computer Vision (CV): Beyond audio, computer vision allows AI to "see" and interpret visual elements. This includes identifying text displayed on screen (e.g., lower thirds, slide bullet points, product labels, calls to action), recognizing objects, faces, brands, and even understanding actions or events occurring within the frame. For instance, a CV system can identify a specific software interface being demonstrated or a particular technique being performed.

- Large Language Models (LLMs): Once ASR and CV have extracted textual and visual data, LLMs process this raw information. These models are trained on vast datasets of text and code, enabling them to understand context, identify key themes, summarize complex information, and answer natural language queries. LLMs connect the dots between spoken words, on-screen text, and visual context to build a holistic understanding of the video’s content and intent.

This multi-layered analysis represents a dramatic departure from the old world of video SEO, where discoverability was largely contingent on superficial signals like compelling thumbnails, generic tags, and basic titles. Previously, a video might rank for a broad topic, but search engines couldn’t pinpoint specific moments within it. Now, every meaningful segment—from an initial overview of a complex framework to a detailed example at minute 3:42, or a crucial term typed on a screen—can be read, understood, and indexed by AI.

This capability forms the bedrock of retrievability: a search engine’s enhanced ability to precisely locate, interpret, and surface highly specific insights or answers from within a video. It allows AI to not just know what a video is about, but what specific information it contains at any given timestamp.
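A simplified way to picture timestamp-level retrievability: when a video's transcript is stored as an SRT (SubRip) file, each cue pairs a time range with the words spoken during it, so a phrase can be traced back to the moment it is said. The minimal Python sketch below assumes exactly that. The `parse_srt` and `find_phrase` helpers and the sample transcript are hypothetical illustrations, and real search systems match meaning rather than exact strings.

```python
import re
from dataclasses import dataclass


@dataclass
class Cue:
    start: str  # "HH:MM:SS,mmm"
    end: str
    text: str


# Matches an SRT timing line, e.g. "00:03:42,000 --> 00:03:47,500"
TIMING = re.compile(r"(\d{2}:\d{2}:\d{2},\d{3})\s*-->\s*(\d{2}:\d{2}:\d{2},\d{3})")


def parse_srt(srt_text: str) -> list[Cue]:
    """Split an SRT document into cues: index line, timing line, then text lines."""
    cues = []
    for block in re.split(r"\n\s*\n", srt_text.strip()):
        lines = block.strip().splitlines()
        for i, line in enumerate(lines):
            match = TIMING.search(line)
            if match:
                # Cue text may span several lines; join them into one string
                text = " ".join(t.strip() for t in lines[i + 1:])
                cues.append(Cue(match.group(1), match.group(2), text))
                break
    return cues


def find_phrase(cues: list[Cue], phrase: str) -> list[str]:
    """Return start timestamps of cues containing the phrase (case-insensitive)."""
    needle = phrase.lower()
    return [cue.start for cue in cues if needle in cue.text.lower()]


# Hypothetical transcript for a video titled "Advanced Sales Techniques"
SAMPLE = """1
00:00:01,000 --> 00:00:05,000
Welcome to advanced sales techniques.

2
00:12:03,000 --> 00:12:09,500
Here is how to handle objections in sales calls with technical buyers.
"""

cues = parse_srt(SAMPLE)
print(find_phrase(cues, "handle objections in sales calls"))
```

Exact substring search would miss a paraphrase such as "dealing with pushback from engineers"; closing that gap is what LLM-based indexing adds on top of the raw transcript.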

Beyond Traditional Search: Video in the Generative AI Landscape

The impact of AI on video extends far beyond traditional keyword-based search. Generative search engines, exemplified by Google’s AI Overviews, Perplexity AI, and capabilities within ChatGPT, take video processing a step further. These systems are designed to synthesize information from a diverse array of sources (text articles, video clips, audio segments, and images) into a single, coherent, and often personalized answer.

In these advanced environments, video is no longer treated as an isolated format. Instead, it becomes one valuable data source among many that an LLM draws on to construct the most authoritative, comprehensive, and relevant response to a user’s query. This integration helps explain why video citations increasingly appear directly within AI-driven answers. A relevant YouTube clip might be embedded within a Google AI Overview as supporting material, offering a visual or auditory explanation to complement the text summary. Similarly, TikTok, known for its short-form video, is developing "Search Highlights" that pair trending queries with brief, highly relevant clips, demonstrating the platform’s ability to understand the granular content of its vast video library. ChatGPT and Perplexity are also increasingly pulling structured insights and direct quotes from videos that are properly indexed and easy to parse, integrating them into their generative responses.

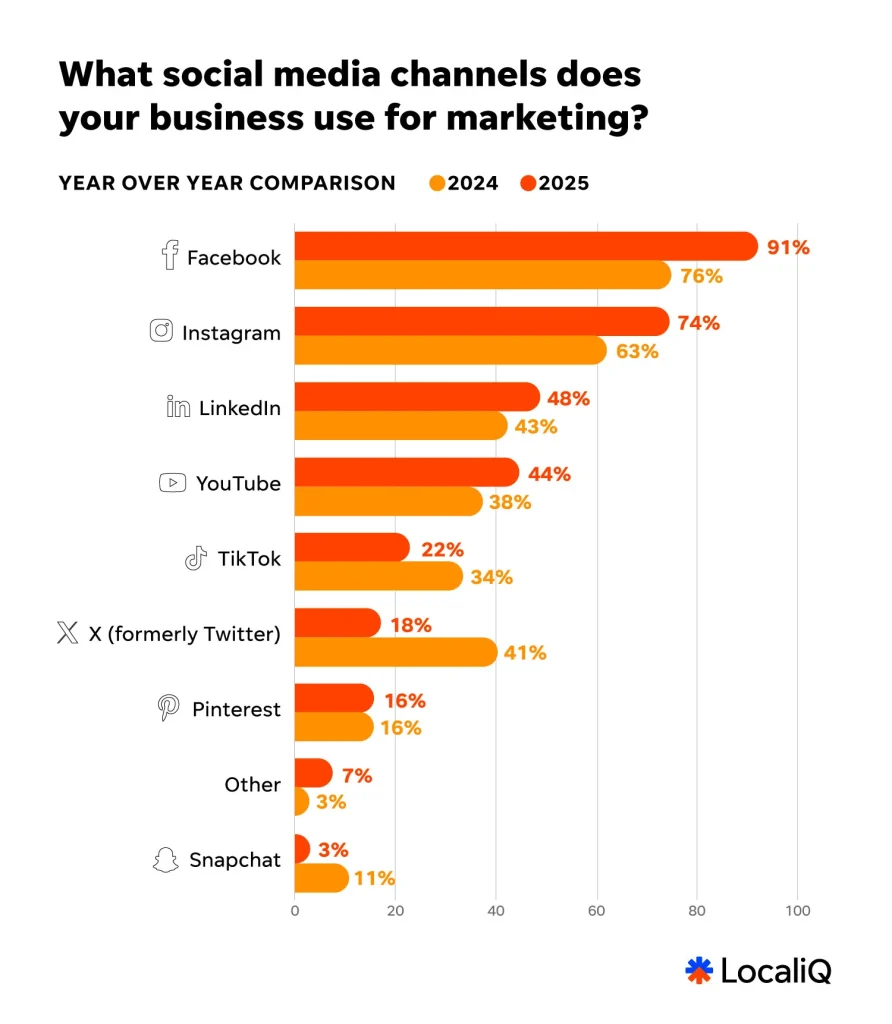

For brands and content creators, this development underscores the importance of multi-format coverage. If an organization’s expertise is confined to blog posts or written reports, it leaves a significant visibility gap in the evolving search landscape. Conversely, if video assets are not optimized for AI-driven retrieval, they risk being overlooked by the generative answers that increasingly shape consumer decisions and information consumption. Survey data underlines what is at stake: according to Wyzowl’s State of Video Marketing report, 92% of marketers say video gives them a good ROI, and 88% of people say they’ve been convinced to buy a product or service by watching a brand’s video. As AI becomes a primary interface for information discovery, unoptimized video is a missed opportunity for connection and conversion.

A Strategic Imperative: Optimizing Video for AI Search

Given that video is now discoverable down to its dialogue and visuals, content teams must evolve their optimization strategies beyond superficial metadata. The focus must shift to making video content intrinsically readable and understandable by AI systems. Here’s how to ensure videos perform like high-value, high-ranking content:

- Think of Your Script as Both Narrative and Index: The script is no longer just a blueprint for filming; it is a critical SEO document. Write video scripts with the same strategic intent and precision you would apply to an optimized blog post: clear, concise phrasing, long-tail questions integrated naturally, and key terms front-loaded in a way that still feels conversational.

  The conversational element matters because LLM-powered search engines are built to interpret natural-language queries. Instead of a formal introduction like "Today, we will discuss customer acquisition strategies," opt for phrasing that mirrors how users actually search: "How do you acquire customers without spending a fortune on ads?" The latter gives AI systems a much clearer signal about the specific problem the video solves, improving its chances of appearing in relevant generative answers. If a concept is being explained, state it plainly and early in the video. Ambiguity, while sometimes effective in artistic storytelling, actively hinders retrievability in AI-driven search. This demands a discipline of clarity and directness in content creation.

- Get Serious About Metadata Hygiene: Although AI reads well beyond metadata, accurate and strategic metadata remains foundational. Titles, descriptions, and tags must precisely reflect the user problem the video solves, not just its broad topic. Emphasize clarity, user intent, and value proposition over keyword stuffing, which modern ranking systems can detect and may penalize.

  For example, a title like "Content Marketing Tips | SEO | Video Strategy | 2025" is generic and less effective. A better alternative is "How to Make Your Marketing Videos Discoverable in AI Search," which is specific, communicates value immediately, and aligns with likely user queries. This approach to metadata applies across video platforms, from YouTube and TikTok to LinkedIn and internal knowledge bases. Consistent, high-quality metadata signals relevance to AI.

- Make Your Transcript the Most Accurate Version of Your Video: Full transcripts or SRT (SubRip Subtitle) files are no longer optional add-ons; they are among the most important signals you control. Accurate, well-formatted transcripts help AI systems disambiguate topics, identify key takeaways, and match your content to nuanced or niche queries that titles and descriptions might not capture.

  Transcripts are particularly effective at capturing long-tail queries. Imagine a user searching for "how to handle objections in sales calls with technical buyers." Your video, titled "Advanced Sales Techniques," might not surface on its title alone. However, if that exact phrase appears at minute 12 of the transcript, AI can pinpoint that moment and present your video as a highly relevant resource. Keep transcripts clean by removing filler words where they genuinely obscure meaning, but avoid over-editing: LLMs are trained on natural language, so authentic phrasing often helps. Automated transcription tools have become highly sophisticated, but human review for accuracy remains a best practice.

- Think of On-Screen Text as a Secondary Layer of Indexable Content: Everything visibly displayed on screen (callouts, lower thirds, slide text, product labels, annotations) can now be read by computer vision and indexed. This is a major opportunity to reinforce spoken points and add another layer of discoverability. If a video introduces a specific framework, make sure its name appears visually. If the video cites a statistic, display it clearly on screen in readable text.

  However, avoid "text spam," the practice of cluttering the video with keywords solely for crawlability. Be intentional instead: ensure that key terms, crucial takeaways, and core concepts appear both verbally and visually where relevant. This dual reinforcement helps AI understand and categorize your content, improving its retrievability.
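The dual-reinforcement check from the on-screen text item can be sketched in a few lines. This is a minimal illustration, assuming you already have the transcript as plain text and the on-screen text as a list of strings (for example, exported from your editor's text layers or from an OCR pass). The `coverage_report` function, the term list, and the sample strings are hypothetical, and the simple case-insensitive substring matching is far cruder than how AI systems actually interpret content.

```python
def coverage_report(key_terms, transcript, on_screen_text):
    """Classify each key term as spoken, on screen, both, or missing.

    Hypothetical helper: uses case-insensitive substring matching only.
    """
    spoken = transcript.lower()
    report = {}
    for term in key_terms:
        needle = term.lower()
        in_spoken = needle in spoken
        # Check each on-screen string separately so matches never span two captions
        in_shown = any(needle in caption.lower() for caption in on_screen_text)
        if in_spoken and in_shown:
            report[term] = "both"
        elif in_spoken:
            report[term] = "spoken only"
        elif in_shown:
            report[term] = "on-screen only"
        else:
            report[term] = "missing"
    return report


# Hypothetical inputs for a single video
key_terms = ["objection handling", "technical buyers", "discovery call"]
transcript = "This video covers objection handling when you sell to technical buyers."
on_screen_text = ["Objection Handling 101", "Step 1: Listen"]

for term, status in coverage_report(key_terms, transcript, on_screen_text).items():
    print(f"{term}: {status}")
```

Terms marked "spoken only" or "on-screen only" are candidates for reinforcement, while "missing" suggests the video may not cover a term you expected it to be found for. Substring matching also has blind spots (it cannot see synonyms or paraphrases), so treat the output as a prompt for human review rather than a score.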

The Broader Impact and Future Landscape

The rapid evolution of AI-driven video indexing signals a fundamental shift in the content marketing landscape. What began as rudimentary metadata analysis has quickly progressed to sophisticated, multi-modal understanding, pushing video to the forefront of search innovation. This timeline has been compressed, with significant advancements occurring within just the past few years, spurred by the widespread adoption and development of LLMs.

Implications for Content Strategy:

- Integrated Content Planning: Brands must adopt a truly integrated content strategy, where video is not an afterthought but an integral part of the initial planning phase, designed from the outset for AI retrievability alongside written content.

- New Skill Sets: Marketing teams will require new competencies, including advanced scriptwriting for AI, metadata specialists who understand semantic search, and video producers who can strategically incorporate on-screen text and visual cues.

- Performance Measurement: New metrics are likely to emerge for tracking video performance in AI search, moving beyond simple views to measure engagement with the specific segments AI identifies.

Competitive Advantage: Organizations that embrace these strategies early will gain a significant competitive advantage. By making their video content inherently discoverable, they can capture a larger share of voice in generative AI answers, build authority, and drive targeted traffic.

Ethical Considerations: While highly beneficial, the rise of AI in content indexing also presents challenges. AI can misinterpret context, content moderation must be robust, and keeping AI-generated summaries accurate and free of bias will require ongoing attention and development. Human oversight remains crucial to guide AI and ensure quality.

Industry Reactions: SEO professionals are rapidly adapting, with many now specializing in multi-modal SEO. Content platforms are continually enhancing their AI capabilities, offering more sophisticated analytics and optimization tools. Brands are increasingly investing in AI-powered content creation and optimization suites to keep pace with these changes.

Practical Checklist: Your Video Retrievability Toolkit

To effectively navigate this new landscape, here’s an actionable implementation guide for making video content discoverable in AI-powered search:

- Strategic Scriptwriting: Craft scripts that prioritize clarity, natural language, and direct problem-solving phrasing. Integrate relevant keywords conversationally.

- Meticulous Metadata: Ensure titles, descriptions, and tags are precise, intent-driven, and free of keyword stuffing. Focus on what problem your video solves.

- High-Quality Transcripts: Upload accurate, clean, and complete transcripts (SRT files) for every video. Review automated transcripts for precision.

- Intentional On-Screen Text: Use callouts, lower thirds, and slide text strategically to reinforce key terms, data, and concepts that also appear verbally.

- Clear Visual Cues: Ensure visual demonstrations are unambiguous and directly illustrate the spoken content.

- Multi-Platform Consistency: Apply these principles across all platforms where your video content resides (YouTube, Vimeo, TikTok, LinkedIn, etc.).

- Auditing Existing Content: Conduct an audit of your existing video library to identify opportunities for retrofitting with improved transcripts, metadata, and on-screen text where feasible.
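The audit step can start as a simple scripted pass over an export of your video library. The sketch below assumes a hypothetical inventory with a title, a description, and two transcript flags per video. The `VideoRecord` fields, the `audit` function, and the thresholds (two or more "|" separators in a title, fewer than 20 words in a description) are illustrative assumptions, not platform rules. It flags the retrofitting opportunities the checklist describes: missing or unreviewed transcripts, keyword-list titles, and thin descriptions.

```python
from dataclasses import dataclass


@dataclass
class VideoRecord:
    # Hypothetical fields from a video-library export
    title: str
    description: str
    has_transcript: bool
    transcript_reviewed: bool


def audit(video: VideoRecord, min_description_words: int = 20) -> list[str]:
    """Return retrofitting issues for one video (thresholds are assumptions)."""
    issues = []
    if not video.has_transcript:
        issues.append("no transcript/SRT uploaded")
    elif not video.transcript_reviewed:
        issues.append("transcript not human-reviewed")
    # Pipe-separated keyword lists, e.g. "Tips | SEO | Strategy", suggest stuffing
    if video.title.count("|") >= 2:
        issues.append("title reads like a keyword list")
    if len(video.description.split()) < min_description_words:
        issues.append("description too thin to state the problem solved")
    return issues


library = [
    VideoRecord(
        title="Content Marketing Tips | SEO | Video Strategy | 2025",
        description="Tips.",
        has_transcript=False,
        transcript_reviewed=False,
    ),
    VideoRecord(
        title="How to Make Your Marketing Videos Discoverable in AI Search",
        description=(
            "A step-by-step walkthrough of scripting, transcripts, metadata, and "
            "on-screen text so that AI search tools can find, understand, and cite "
            "the specific answers inside your marketing videos."
        ),
        has_transcript=True,
        transcript_reviewed=True,
    ),
]

for video in library:
    print(video.title, "->", audit(video) or "no issues found")
```

Keeping each check as a separate, plainly worded rule makes it easy to adjust thresholds or add platform-specific checks as your own standards evolve.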

This should be viewed as an evolving practice. As AI search tools become more sophisticated, the precise methods they employ to index, understand, and cite video will continue to shift. However, the core principle remains constant: making your content effortlessly findable, comprehensible, and referenceable by intelligent systems.

The black box of video content has been opened by AI. Search engines are rapidly learning to see, hear, and cite every nuance within your videos. The power to unlock unprecedented discoverability is now at the fingertips of content creators and marketers. What is done with that power will define the next generation of digital content strategy.

Frequently Asked Questions (FAQs)

How long should my video be for optimal discoverability in AI Search?

There is no universal "best length." Clarity, structure, and directness matter more than duration. Shorter videos (e.g., 15-90 seconds) excel for intent-matching on platforms like TikTok and YouTube Shorts, addressing immediate queries. Longer, well-structured explainers (e.g., 5-15 minutes) provide more granular material for generative AI answers to pull from, offering deeper insights. The key is to match the length to the content’s purpose and user intent.

Do I need special tools to make my videos indexable by AI Search?

Not necessarily. Most crucial optimization steps—clean scripting, accurate transcripts, readable on-screen text, and precise metadata—can be managed during the standard production and upload processes using existing video editing software and platform interfaces. While advanced AI content optimization tools are emerging, the fundamental signals for AI indexing are generated through meticulous content creation practices. AI search engines handle the complex indexing automatically if these clear signals are present.

How quickly will I see results from video retrievability efforts?

Indexing timelines can vary significantly by platform and the sophistication of the search engine. However, many brands begin to observe improvements in visibility and discoverability within weeks or a few months, particularly for new, well-optimized content. The most substantial and sustained gains typically come from consistency: implementing unified naming conventions, regularly publishing across multiple formats, and reinforcing your expertise with high-quality, supporting written content that links to and references your video assets. This holistic approach strengthens your overall digital authority.

What about older video content? Can it be optimized for AI search?

Yes, older video content can and should be optimized. While re-editing videos to add on-screen text might be labor-intensive for a large library, improving transcripts and metadata is highly impactful and often more feasible. Auditing your existing video library to add accurate SRT files, enhance descriptions with problem-solution phrasing, and update tags can significantly boost the retrievability of legacy content without requiring full re-production. This retrofitting process is crucial for maximizing the value of your entire content archive.