The global developer community has reached a significant turning point with the release of Google’s Gemma 4, an open-source artificial intelligence model family that competes with proprietary systems twenty times its size. Unlike traditional large language models (LLMs) that primarily serve as cloud-based chatbots, Gemma 4 has been engineered specifically for advanced reasoning and autonomous agentic workflows. The release marks a strategic shift in the AI industry away from centralized, subscription-based cloud services and toward high-performance local execution that can run entirely without an internet connection. By optimizing for edge devices, Google has provided a framework in which AI can handle complex, multi-step tasks directly on a user’s hardware, fundamentally altering the privacy and cost dynamics of AI development.

The Evolution of the Gemma Family and the Open Source Shift

Gemma 4 arrived in early April 2026 as the latest product of Google DeepMind’s rapid release cycle for the Gemma line. While Google’s Gemini serves as the company’s flagship proprietary model, the Gemma series was conceived to give the open-source community "open weights" models derived from the same technology. Gemma 4 is the most sophisticated version of this lineage, designed to bridge the gap between "small" models and "frontier" capabilities.

This release lands amid growing demand for "local AI." As enterprise spending on API access to models such as GPT-4 or Claude Opus keeps accumulating, the ability to run a model with comparable reasoning capabilities on local infrastructure is becoming a financial necessity. By releasing Gemma 4 in four distinct sizes, from a lightweight 2B-parameter model up to a more robust 31B-parameter version, Google has made the model accessible across a spectrum of hardware, from mobile phones to high-end workstations.

Technical Specifications and Benchmark Performance

Gemma 4’s primary distinction lies in its "intelligence-to-size" ratio. According to its technical documentation, the model has a 128K-token context window, allowing it to take in a large volume of input (long documents, source files, conversation history) in a single prompt. This is particularly relevant for "agentic" tasks, where the model must keep track of earlier steps in a complex workflow or analyze large code repositories.
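Whether a repository actually fits in a 128K-token window can be estimated before sending anything to the model. The sketch below is a rough pre-check, assuming the common rule of thumb of about 4 characters per token; real counts depend on the model's tokenizer, and the 8,000-token `reserve` for instructions and the reply is a hypothetical budget, not a documented requirement.

```python
from pathlib import Path

# Assumption: ~4 characters per token, a rough heuristic for English text
# and code. Gemma's real tokenizer will give different counts.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW = 128_000  # tokens, the figure quoted for Gemma 4


def estimate_tokens(text: str, chars_per_token: int = CHARS_PER_TOKEN) -> int:
    """Rough token estimate: ceiling of len(text) / chars_per_token."""
    return -(-len(text) // chars_per_token)


def fits_in_context(files: dict[str, str], reserve: int = 8_000) -> tuple[bool, int]:
    """Return (fits, estimated_tokens) for a mapping of path -> file contents.

    `reserve` keeps room for the instructions and the model's reply
    (a hypothetical budget chosen for illustration).
    """
    total = sum(estimate_tokens(body) for body in files.values())
    return total <= CONTEXT_WINDOW - reserve, total


def load_repo(root: str, suffixes: tuple[str, ...] = (".py", ".md")) -> dict[str, str]:
    """Read every file under `root` whose extension is in `suffixes`."""
    return {
        str(p): p.read_text(encoding="utf-8", errors="ignore")
        for p in Path(root).rglob("*")
        if p.is_file() and p.suffix in suffixes
    }
```

For example, `fits, tokens = fits_in_context(load_repo("."))` reports whether the current directory's Python and Markdown files are likely to fit; if not, a tool would have to select or summarize files first.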

Key technical milestones include:

- Multimodality: Gemma 4 is natively multimodal, capable of processing text, audio, and visual data simultaneously.

- Tool Use and Function Calling: The model is optimized for "Agent Skills," allowing it to interact with external APIs, execute code, and navigate file systems.

- Efficiency: The E4B (4-bit quantized) version can perform comprehensive repository audits on systems with as little as 6GB of RAM, making it viable for standard consumer laptops.

- Reasoning: In internal benchmarks, Gemma 4 models have outperformed much larger open-source predecessors in logical deduction and mathematical problem-solving.
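The 6GB RAM figure is easier to judge with basic weight-memory arithmetic: bytes ≈ parameters × bits per weight ÷ 8. The sketch below assumes, for illustration only, a model with 4 billion parameters; that is not a statement of E4B's actual parameter count or file size, and the result covers the weights alone, not the KV cache, activations, or runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes).

    Excludes the KV cache, activations, and runtime overhead, all of which
    add to the real footprint.
    """
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 1e9


# Illustrative parameter count (an assumption, not an official E4B figure).
ASSUMED_PARAMS = 4e9

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_gb(ASSUMED_PARAMS, bits):.1f} GB")
```

Under that assumption, 16-bit weights would need about 8 GB, while 4-bit weights need about 2 GB, leaving headroom for context and the operating system on a 6GB machine. The same arithmetic puts a 31B-parameter model at roughly 15.5 GB of 4-bit weights.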

Chronology of Early Adoption: From Mobile to Gaming Consoles

Within days of its release, the developer community began documenting a wide array of implementations that demonstrate the model’s versatility. The following timeline illustrates how quickly the model was integrated into various hardware environments.

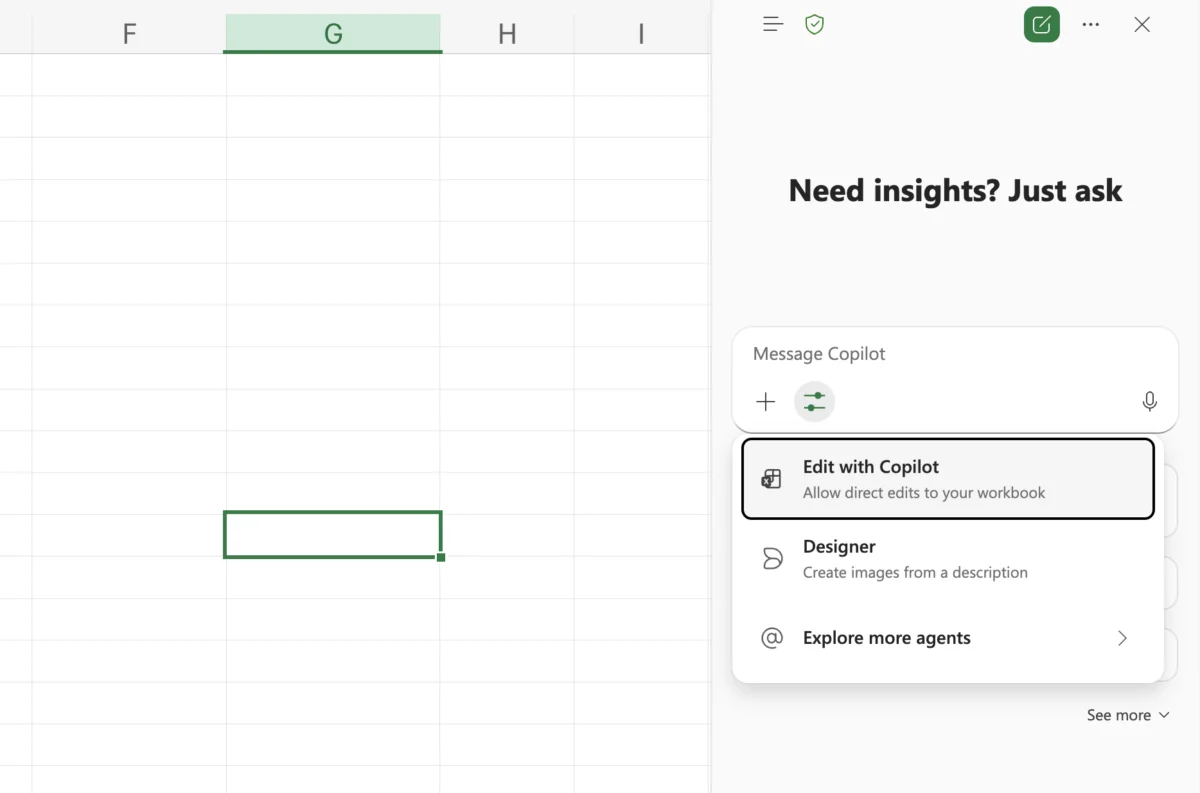

Localized Developer Environments and Claude Code Integration

One of the earliest breakthroughs involved integrating Gemma 4 with workflows built around Claude Code, Anthropic’s agentic coding tool. Using Ollama, a tool for running LLMs locally, developers reproduced the experience of high-end coding assistants without subscription fees or API latency. This setup supports real-time code fixes and an architecture-level understanding of a codebase on a local machine, ensuring that proprietary source code never leaves the developer’s environment.
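Setups like this rest on a local HTTP endpoint that tools can call instead of a cloud API. The sketch below shows that request path using only the Python standard library against Ollama's `/api/chat` endpoint on its default port, 11434. It does not reproduce Claude Code's own configuration, and the model tag `gemma4` is a hypothetical placeholder; check `ollama list` for the name of the model you actually pulled.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint
MODEL_TAG = "gemma4"  # hypothetical tag; use the name shown by `ollama list`


def build_chat_request(prompt: str, model: str = MODEL_TAG) -> bytes:
    """Encode a single-turn, non-streaming request body for /api/chat."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a token stream
    }
    return json.dumps(payload).encode("utf-8")


def chat(prompt: str, model: str = MODEL_TAG, url: str = OLLAMA_URL) -> str:
    """Send the prompt to the local server and return the assistant's reply text."""
    req = urllib.request.Request(
        url,
        data=build_chat_request(prompt, model),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["message"]["content"]
```

With `ollama serve` running and the model pulled, `chat("Explain what def f(x): return x * 2 does")` returns the reply as a string. Because the request never leaves `localhost`, the source code in the prompt stays on the machine.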

The Mobile Frontier: iPhone and Pixel Implementations

By the end of the first week of April 2026, researchers had demonstrated Gemma 4 running natively on mobile hardware. On the iPhone, the model handled a 128K-token context window entirely offline. On the Pixel 10 Pro, meanwhile, the model performed real-time audio transcription, using the "E2B" variant to process 30-second audio clips locally. This use case offers a glimpse of a future in which voice assistants do not need cloud connectivity to function.
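Because the Pixel demo worked on 30-second clips, an app transcribing a longer recording would first need to cut it into consecutive windows of that length. The sketch below assumes mono audio already decoded into a list of samples at 16 kHz (a common rate for speech models, but an assumption here). It omits the transcription call itself, since the on-device API is not described, and it makes plain cuts with no overlap, so a word at a boundary may be split.

```python
SAMPLE_RATE = 16_000  # Hz; assumption: mono audio resampled to 16 kHz
CLIP_SECONDS = 30     # clip length used in the Pixel 10 Pro demo


def split_into_clips(samples, sample_rate=SAMPLE_RATE, clip_seconds=CLIP_SECONDS):
    """Split a sequence of samples into consecutive clips of at most clip_seconds.

    The final clip is shorter when the recording length is not an exact
    multiple of the clip length.
    """
    clip_len = sample_rate * clip_seconds
    return [samples[i:i + clip_len] for i in range(0, len(samples), clip_len)]
```

A 75-second recording, for instance, becomes two full 30-second clips plus a 15-second remainder, each of which would be transcribed in turn.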

Unconventional Hardware: The Nintendo Switch Experiment

Perhaps the most surprising implementation was the successful deployment of Gemma 4 on a Nintendo Switch. Although the execution speed was limited to approximately 1.5 tokens per second, the experiment proved that modern, agent-ready models could be squeezed into gaming hardware. This suggests that future interactive media and video games could utilize local AI for dynamic storytelling and NPC (non-player character) intelligence without relying on external servers.
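To put 1.5 tokens per second in perspective, generation time is the number of tokens divided by the decoding rate. The sketch assumes a constant rate, which real decoders only approximate, and the reply lengths used below are illustrative figures, not measurements from the experiment.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate num_tokens at a constant decoding rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


SWITCH_RATE = 1.5  # tokens per second, as reported for the Switch experiment

print(generation_seconds(150, SWITCH_RATE))  # a paragraph-length reply
print(generation_seconds(30, SWITCH_RATE))   # a short line of NPC dialogue
```

At that rate, a 150-token reply takes about 100 seconds and even a 30-token line of dialogue takes about 20 seconds, which is why the result reads as a proof of concept rather than a playable feature.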

Transforming the Economic Model: The "Zero-Token" Workstation

A significant portion of the excitement surrounding Gemma 4 is rooted in the "token economy." Traditionally, AI development has been gated by the cost of tokens, the word fragments that cloud providers meter and bill for. For startups and independent researchers, these costs can reach thousands of dollars per month.

Running Gemma 4 31B on a Mac Studio configured as a dedicated "AI workhorse" has emerged as a viable alternative. By running the model locally, users report reducing their monthly token spending to zero. This "Zero-Token" setup allows for iterative testing and "crazy ideas" that would otherwise be cost-prohibitive. In addition, OpenClaw, an open-source interface, lets the local setup behave with the fluidity of a professional-grade AI assistant, handling tasks that range from calendar management to automated messaging across a user’s contact list.
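"Zero" here refers to per-token charges; the workstation itself and its running costs remain. The trade-off can be framed as a break-even calculation: monthly cloud cost from token volume and per-million-token prices, compared against a one-time hardware purchase. Every price and volume in this sketch is a hypothetical placeholder, not a quote from any provider.

```python
def monthly_api_cost(input_tokens: int, output_tokens: int,
                     usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Monthly cloud bill given token volumes and per-million-token prices."""
    return (input_tokens / 1e6) * usd_per_m_input + (output_tokens / 1e6) * usd_per_m_output


def breakeven_months(hardware_usd: float, monthly_cloud_usd: float,
                     monthly_local_usd: float = 0.0) -> float:
    """Months until a one-time hardware purchase pays for itself.

    Returns infinity when local running costs meet or exceed the cloud bill.
    """
    savings = monthly_cloud_usd - monthly_local_usd
    if savings <= 0:
        return float("inf")
    return hardware_usd / savings


# Hypothetical workload: 200M input and 20M output tokens per month.
cloud = monthly_api_cost(200_000_000, 20_000_000, usd_per_m_input=3.0, usd_per_m_output=15.0)
print(cloud)
print(breakeven_months(hardware_usd=4000, monthly_cloud_usd=cloud, monthly_local_usd=100))
```

With these made-up figures the cloud bill is $900 a month, and a $4,000 machine costing $100 a month to run pays for itself in 5 months; a lighter workload stretches that period out, which is why the savings are largest for heavy, iterative use.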

Real-Time Vision and Agentic Skills

Integrations with computer vision platforms such as Roboflow have demonstrated Gemma 4’s ability to act as a "visual brain." In these setups, Gemma 4 serves as a reasoning layer on top of object detection models: while a detection model identifies objects in a camera feed, Gemma 4 produces a "scene summary" that explains in real time what is happening. This has immediate implications for assistive technologies, security systems, and industrial monitoring.
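The hand-off between the detector and the reasoning layer can be sketched as turning structured detections into a prompt. This sketch does not use Roboflow's SDK: the `Detection` class, the 0.5 confidence threshold, and the prompt wording are all hypothetical, and the resulting text would be sent to Gemma 4 through whatever local runtime is in use.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical detector output for one object in a frame."""
    label: str
    confidence: float
    box: tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def build_scene_prompt(detections: list[Detection], min_conf: float = 0.5) -> str:
    """Turn detections into a text prompt asking the language model for a summary.

    Detections below `min_conf` are dropped so the model is not asked to
    reason about likely false positives.
    """
    kept = [d for d in detections if d.confidence >= min_conf]
    if not kept:
        return "No objects were detected with sufficient confidence. Describe the scene as empty."
    lines = [
        f"- {d.label} ({d.confidence:.0%}) at box {tuple(round(v) for v in d.box)}"
        for d in kept
    ]
    return (
        "Objects detected in the current camera frame:\n"
        + "\n".join(lines)
        + "\nIn one or two sentences, summarize what is happening in this scene."
    )
```

Splitting the work this way lets a fast, specialized detector run on every frame while the heavier language model is called only when a summary is needed.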

Google has further supported this ecosystem by launching an "Agent Skills" app for Android, which lets users import specific "skills," or capabilities, into the Gemma 4 E2B model. For example, an agent can be taught to monitor a user’s schedule and draft emails autonomously, or to generate music from visual inputs using Lyria 3, Google’s music-generation model.
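Importing skills into a model generally rests on a dispatch pattern: the model emits a structured call naming a capability, and the host app looks it up and runs it. The sketch below is a generic illustration of that pattern, not the Agent Skills app's actual format; the JSON shape `{"skill": ..., "args": {...}}`, the `SKILLS` registry, and the `draft_email` skill are all hypothetical.

```python
import json
from typing import Callable

# Hypothetical registry mapping a skill name to a Python function.
# This is NOT the Agent Skills app's real format; it only shows the pattern.
SKILLS: dict[str, Callable[..., str]] = {}


def skill(name: str):
    """Decorator that registers a function under a skill name."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        SKILLS[name] = fn
        return fn
    return register


@skill("draft_email")
def draft_email(to: str, subject: str) -> str:
    """Hypothetical skill: produce an email draft skeleton."""
    return f"To: {to}\nSubject: {subject}\n\n(draft body goes here)"


def dispatch(model_output: str) -> str:
    """Run the skill named in a JSON call emitted by the model."""
    call = json.loads(model_output)
    name = call.get("skill")
    fn = SKILLS.get(name)
    if fn is None:
        # Report unknown skills back instead of crashing, so the agent can retry.
        return f"error: unknown skill {name!r}"
    return fn(**call.get("args", {}))
```

Returning an error string for unknown skills, rather than raising, lets the host feed the message back to the model so it can correct its call on the next step.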

Official Responses and Industry Implications

Omar Sanseviero, Developer Experience Lead at Google DeepMind, emphasized that the goal of Gemma 4 was to provide "firepower" for multi-step tasks on personal hardware. Industry analysts suggest that Google is positioning itself as the leader in the "Small Language Model" (SLM) space, a sector that is becoming increasingly crowded with entries from Meta (Llama) and Mistral.

The broader implications of Gemma 4 include:

- Data Sovereignty: By keeping processing local, enterprises can comply with stricter data privacy regulations (such as GDPR) more easily, as sensitive information is never transmitted to a third-party server.

- Democratization of Research: High-level AI reasoning is no longer restricted to those with massive cloud budgets or enterprise-grade GPU clusters.

- Hardware Evolution: The success of Gemma 4 is likely to accelerate the demand for "AI PCs" and mobile devices with dedicated NPU (Neural Processing Unit) hardware designed specifically for local inference.

Conclusion: The New Standard for Local Intelligence

The rapid adoption of Gemma 4 indicates that the AI industry is moving into a "post-chatbot" era. The focus has shifted from simple text generation to "agentic" behavior—AI that can see, hear, reason, and act within a local environment. Whether it is auditing a code repository on a 6GB RAM system or generating a song from an image on a mobile device, Gemma 4 has proven that size is no longer the sole determinant of an AI’s utility.

As developers continue to experiment with these open weights, the barrier between human intent and machine execution continues to thin. Gemma 4 is not merely a model release; it is a foundational shift toward a more private, cost-effective, and decentralized AI future. The real-world projects emerging in the wake of its launch suggest that the most impactful AI of the future may not live in a massive data center, but in the pocket of the user.