Six months ago, a leading tech firm published a comprehensive guide to data security best practices. The firm’s policies have since changed, but the article has not. When a customer recently asked the company’s support chatbot a routine question, the AI drew on this now-obsolete guide and confidently gave incorrect advice. The human support team then had to step in and explain why an official brand answer was wrong and out of date. Scenarios like this are no longer anomalies. They illustrate the growing risks businesses face as artificial intelligence is built deeper into customer service, e-commerce, and proprietary search tools.

Large Language Models (LLMs), the backbone of these AI systems, are trained on and retrieve information from large bodies of published material, including brand content, and use it to answer user questions and influence purchasing decisions. As a result, content that is outdated, inaccurate, or incomplete now carries serious and far-reaching consequences. Corporate boardrooms have noticed. According to an October 2025 analysis by The Conference Board, 72% of S&P 500 companies now formally identify AI as a material business risk, a six-fold increase from 12% in 2023. The pressure on content teams, traditionally measured on engagement and reach, has intensified accordingly: their work now underpins operational integrity and carries significant legal and reputational responsibility.

The Unseen Threat of AI-Driven Content Misinformation

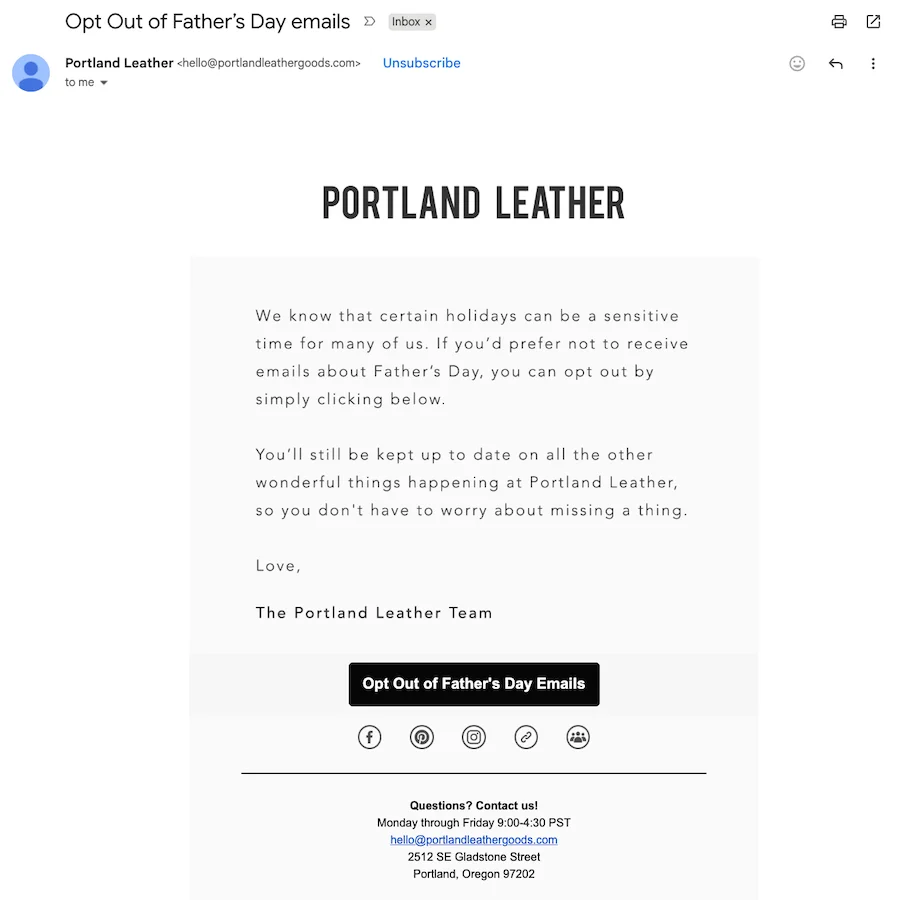

The core of the problem lies in how many AI systems are designed. Unlike a careful human researcher, an AI system does not inherently distinguish between a brand’s latest product update and a blog post from five years ago; it often treats all indexed content as equally valid source material. The problem compounds when generative AI tools such as ChatGPT, Perplexity, or Google’s AI Overviews draw from a company’s content library, because crucial contextual elements, such as publication dates, disclaimers, and nuanced qualifications, often disappear. The information is extracted, synthesized, and presented with an authoritative confidence that belies its potential inaccuracy.

This decontextualization is what produces incidents like the support chatbot example. Other common failure modes include chatbots making promises based on old pricing, describing discontinued product features, or giving legal or medical guidance that no longer reflects current regulations or scientific understanding. In tightly regulated industries such as financial services and healthcare, the exposure is especially serious. Financial services firms could face SEC scrutiny and substantial fines for disseminating inaccurate investment advice or compliance information. Healthcare organizations, already bound by HIPAA’s privacy requirements, could find themselves correcting AI-delivered patient guidance that mishandles protected health information or repeats outdated, harmful medical advice. The result can be lawsuits, regulatory penalties, and lasting damage to public trust.

A Rapidly Escalating Business Risk and the Paradigm Shift in Content’s Role

The Conference Board data is a stark warning. The jump from 12% to 72% in two years reflects a major shift in how companies understand AI’s broader implications. Many businesses initially viewed AI mainly as a source of efficiency gains and innovation. As deployments scale and AI-driven interactions become commonplace, however, the risks tied to data quality, algorithmic bias, and content accuracy are coming into sharp focus. AI risk management is moving to the forefront of strategic planning and now demands attention from C-suite executives, legal counsel, and compliance officers, functions that historically had little direct involvement in content creation.

For content teams, this is a fundamental shift. What was once primarily a marketing function, optimized for page views, social shares, and conversion rates, has become a critical governance role. Content is no longer only about engaging an audience; it is about establishing and maintaining trust, ensuring factual accuracy, and limiting operational and legal exposure. This expanded mandate requires rethinking content strategy, creation, distribution, and lifecycle management. The stakes are high because every published piece, regardless of its original intent or age, is now potential source material for an AI system that may represent the brand with authoritative but flawed information.

Why AI Systems Struggle with Context and Chronology

The challenge stems from how current LLM-based systems process and retrieve information. When an AI system "reads" a website or an internal document library, it ingests text, usually without a reliable signal of the content’s publication date, its relationship to other content, or its relative authority, unless that information is explicitly present in what it retrieves. A 2019 blog post describing a product’s original features may be weighted the same as a 2024 press release announcing a major overhaul. The model identifies patterns, keywords, and relationships in the text; it does not check which version is current.

Furthermore, the sophisticated summarization and generative capabilities of LLMs, while powerful, often strip away the very elements that provide crucial context:

- Disclaimers: Legal or contextual disclaimers (e.g., "Information valid as of X date," "Consult a professional for specific advice") are frequently omitted in AI-generated summaries.

- Dates and Versioning: Publication dates, version numbers, or revision histories are rarely carried through into AI responses, making it impossible for a user to gauge the currency of the information.

- Nuance and Qualification: AI tends to present information concisely and confidently, often flattening the nuanced language, caveats, and qualifications that human authors carefully embed to ensure accuracy and prevent misinterpretation.

This technical limitation means that content teams must proactively embed clarity and currency into their materials in ways they never had to before. The onus is on the content creators and managers to build a robust framework that accounts for AI’s consumption patterns, rather than expecting AI to infer context that isn’t explicitly and consistently provided.
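One concrete way to make currency explicit is to state a page's dates twice: once as machine-readable schema.org JSON-LD (`datePublished`, `dateModified`, and the CreativeWork `expires` property) and once as a visible sentence in the body text. The sketch below assumes pages are rendered from Python templates; `article_jsonld`, `to_script_tag`, and `currency_note` are hypothetical helper names. There is no guarantee that any given AI system reads or honors this markup, which is why the visible sentence is included: retrieval pipelines often strip markup and keep only the text.

```python
import json
from datetime import date
from typing import Optional


def article_jsonld(headline: str, published: date, modified: date,
                   expires: Optional[date] = None) -> dict:
    """Build schema.org Article metadata that states the page's dates explicitly."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    if expires is not None:
        # schema.org CreativeWork "expires": date after which the content is no longer useful.
        data["expires"] = expires.isoformat()
    return data


def to_script_tag(data: dict) -> str:
    """Wrap the metadata in the script tag used to embed JSON-LD in an HTML page."""
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


def currency_note(modified: date) -> str:
    """Visible in-text statement, so the date survives even if markup is stripped."""
    return f"Last reviewed {modified.isoformat()}. Information is valid as of this date."


meta = article_jsonld("Data security best practices", date(2024, 6, 1),
                      date(2025, 3, 1), expires=date(2025, 9, 1))
print(to_script_tag(meta))
print(currency_note(date(2025, 3, 1)))
```

Using ISO 8601 dates in the visible note is a deliberate choice: the format is unambiguous to both readers and machines, regardless of locale.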

Case Study: The Air Canada Precedent and Corporate Accountability

A widely cited example of the legal consequences of AI-driven misinformation involves Air Canada. In a 2024 decision, British Columbia’s Civil Resolution Tribunal found the airline liable for damages after its website chatbot gave a customer incorrect information about bereavement fares. The chatbot said the customer could apply for the reduced bereavement fare retroactively, which the airline’s actual policy did not allow. When Air Canada’s human agents refused the discount, the customer brought a claim. Air Canada argued that the chatbot was responsible for its own statements; the tribunal rejected that argument and held the airline responsible for the information on its website, irrespective of whether it came from a static page or a chatbot.

The ruling is widely cited for a simple principle: companies are accountable for the information their AI systems disseminate, even when the system generates that information autonomously. What began as a single inaccurate chatbot answer escalated into a legal dispute and a public accountability issue for a major corporation. The financial award was small, but it signaled a much larger systemic risk and prompted many companies to re-examine their digital communication channels and the content that feeds them. The implications extend beyond direct damages to reputational harm, eroded customer trust, and potential regulatory scrutiny.

Beyond Customer Service: Broader Exposure Across Industries

The risks of AI-driven misinformation are not confined to customer service interactions. They permeate various sectors with unique and profound implications:

- Financial Services: AI chatbots or knowledge bases offering investment advice based on outdated market conditions, tax laws, or product terms could lead to financial losses for customers and expose firms to regulatory fines from bodies like the SEC, class-action lawsuits, and severe reputational damage.

- Healthcare: AI-powered symptom checkers or patient information portals drawing on old medical guidelines, drug dosages, or treatment protocols could endanger patient health, leading to malpractice claims and regulatory scrutiny. The trust placed in medical information demands absolute accuracy.

- E-commerce: Misleading product descriptions, incorrect pricing, or false claims about stock availability, when generated by AI from stale product pages, can lead to customer dissatisfaction, returns, and legal challenges under consumer protection laws.

- Legal Services: AI tools used for legal research or client advice could cite superseded statutes, case law, or regulatory interpretations, leading to catastrophic errors in legal counsel.

- Manufacturing and Engineering: AI-driven internal knowledge bases providing outdated safety protocols, equipment specifications, or operational procedures could result in workplace accidents, product failures, and significant liability.

The pervasive nature of AI means that virtually any industry relying on accurate information for operations, customer interaction, or compliance is now susceptible to this new category of content risk.

The Unpreparedness of Traditional Content Teams

A significant hurdle in addressing this issue is that most content teams are simply not structured or equipped for this new mandate. Historically, content operations have optimized for metrics such as speed, volume, engagement, and traffic generation. Workflows are often designed for rapid publishing cycles, with editorial reviews primarily focused on brand voice, clarity, and SEO optimization. Legal approval processes, when they exist, are typically designed for discrete, time-bound marketing campaigns or specific product launches, not for the continuous, evergreen content libraries that AI systems now perpetually mine.

Furthermore, the question of ownership often becomes murky. Who is responsible for auditing and updating a three-year-old blog post when industry regulations change? Who takes accountability for help documentation when product features evolve rapidly? In many organizations, a clear, centralized accountability structure for the accuracy and currency of all published content, particularly older assets, simply does not exist. Content teams, positioned at the nexus of content creation and AI consumption, often lack the explicit mandate, the specialized tools, or the necessary headcount to manage this complex downstream risk effectively. This creates a vacuum where critical vulnerabilities can fester undetected.

The Financial and Reputational Toll of Inaccuracy

The costs associated with AI-driven content inaccuracy are multifaceted and often substantial. Beyond direct legal fines and penalties, companies face:

- Operational Overheads: The significant time and resources spent by support teams correcting AI errors, managing customer complaints, and manually verifying information.

- Brand Erosion: A decline in customer trust and loyalty when a brand’s official AI provides incorrect information, directly impacting customer retention and acquisition.

- Reputational Damage: Negative press, social media backlash, and a tarnished brand image that can take years to rebuild.

- Lost Revenue: Customers may abandon purchases or switch to competitors if they receive confusing or incorrect information from an AI.

McKinsey’s 2025 State of AI survey found that 51% of AI-using organizations have already experienced at least one negative consequence from AI deployment, with inaccuracy the most commonly cited issue. The figure shows how widespread this exposure already is, and content teams, whether they planned for it or not, now own a share of it. Fixing misinformation after it has spread and caused damage is typically far more expensive than investing in robust content governance up front.

Adapting to the New Reality: Proactive Content Risk Management

Organizations that navigate this landscape well are not simply reacting to incidents; they are building proactive systems to manage content risk without sacrificing publishing velocity. One such framework, the "Content Risk Triage System," combines four interlocking practices:

- Comprehensive Content Audits: Regular, systematic reviews of the entire content library, specifically identifying content that makes definitive claims about products, policies, compliance, or advice. These audits categorize content by its potential risk exposure (e.g., high-risk financial advice vs. low-risk blog post).

- Clear Ownership and Accountability: Establishing clear roles and responsibilities for content accuracy and currency across the content lifecycle, including who is responsible for updating specific types of evergreen content and when.

- Tiered Review Processes: Implementing differentiated editorial and legal review workflows based on content risk classification. High-stakes content receives rigorous scrutiny, while lower-risk content can proceed with streamlined approvals, ensuring appropriate oversight without creating universal bottlenecks.

- Technological Integration and Version Control: Utilizing content management systems (CMS) with robust version control, metadata tagging (including effective dates, expiration dates, and revision histories), and AI-friendly structuring to help AI systems better interpret content context and currency.

These practices allow organizations to maintain agility in publishing while embedding critical safeguards against misinformation.
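The tiered-review practice can be sketched as a small routing table. This is a minimal sketch under stated assumptions: the claim types, the three tiers, and the reviewer roles below are hypothetical placeholders that each organization would define with its own legal and compliance teams. An item's tier is the highest tier among the claims it makes, so a single high-risk claim is enough to pull a page into the strictest review path.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical mapping from claim type to risk tier; each organization sets its own.
CLAIM_TIERS = {
    "financial_advice": RiskTier.HIGH,
    "health_advice": RiskTier.HIGH,
    "compliance": RiskTier.HIGH,
    "pricing": RiskTier.MEDIUM,
    "product_capability": RiskTier.MEDIUM,
}

# Hypothetical review path per tier: who must sign off before (re)publishing.
REVIEW_PATHS = {
    RiskTier.LOW: ["editorial"],
    RiskTier.MEDIUM: ["editorial", "subject_matter_expert"],
    RiskTier.HIGH: ["editorial", "subject_matter_expert", "legal"],
}


@dataclass
class ContentItem:
    url: str
    claim_types: set[str] = field(default_factory=set)


def classify(item: ContentItem) -> RiskTier:
    """Highest tier among the item's claims; items making no claims are LOW."""
    # Unrecognized claim types are treated conservatively as MEDIUM.
    return max((CLAIM_TIERS.get(c, RiskTier.MEDIUM) for c in item.claim_types),
               default=RiskTier.LOW)


def review_path(item: ContentItem) -> list[str]:
    """Return a copy of the sign-off list so callers cannot alter the shared table."""
    return list(REVIEW_PATHS[classify(item)])


post = ContentItem("/blog/fees-explained", {"pricing", "financial_advice"})
print(classify(post).name, review_path(post))
```

Keeping the tiers and sign-off lists in plain tables means legal and editorial leads can review and change the policy itself without touching the routing logic.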

Strategic Directives for Content Leadership

For content leaders grappling with these new responsibilities, implementing practical systems that reduce risk without halting publishing is paramount. Three critical steps form a reasonable starting point:

- Conduct a Content Risk Audit: Begin by identifying and inventorying content that makes specific, actionable claims—pricing, capabilities, compliance statements, health advice, financial guidance, etc. Then, test how AI systems (ChatGPT, Perplexity, Google AI Overviews) cite your content by posing relevant queries. Content frequently appearing in AI responses, especially if it’s high-stakes, carries the highest exposure and must be prioritized for accuracy verification and potential updates.

- Establish Clear Ownership and Review Cadences: Assign explicit ownership for the accuracy review of high-risk content segments. Implement a quarterly or semi-annual review cadence for critical evergreen assets. Even for small teams without dedicated compliance support, creating a simple risk classification system and documenting the verification process demonstrates due diligence and builds a foundational layer of accountability.

- Integrate Tiered Legal/Compliance Review: Engage legal and compliance teams early to define a tiered review process. Clarify which content types absolutely require legal sign-off versus those that can be approved solely by editorial teams. Develop templates and pre-approved language for recurring claim types to streamline legal reviews over time. The objective is appropriate oversight, not an impediment to content velocity.
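Parts of the first step, the content risk audit, can be automated to build a review queue. The sketch below assumes a hypothetical inventory in which each page's text is available and `ai_citations` records, by hand, how often the page surfaced when the team posed test questions to tools like ChatGPT or Perplexity; it does not call any AI service. The regular expressions are crude, illustrative heuristics for spotting pricing, compliance, financial, and health claims, and the weights are assumptions, so the output is a prioritized list for human review, not a verdict.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns suggesting a page makes a specific, checkable claim.
CLAIM_PATTERNS = {
    "pricing": re.compile(r"\$\s?\d|\bper (month|year)\b|\bfree trial\b", re.I),
    "compliance": re.compile(r"\b(HIPAA|GDPR|SOC ?2|compliant)\b", re.I),
    "financial_advice": re.compile(r"\b(APR|interest rate|tax\w*|invest\w*)\b", re.I),
    "health_advice": re.compile(r"\b(dosage|mg|symptom\w*|treatment)\b", re.I),
}

# Assumed relative risk weights per claim type.
RISK_WEIGHT = {"pricing": 2, "compliance": 3, "financial_advice": 3, "health_advice": 3}


@dataclass
class Page:
    url: str
    text: str
    ai_citations: int  # times cited in manual test queries, recorded by hand


def detect_claims(text: str) -> set[str]:
    """Names of every claim pattern that matches somewhere in the text."""
    return {name for name, pattern in CLAIM_PATTERNS.items() if pattern.search(text)}


def exposure(page: Page) -> int:
    """Rough priority score: total claim weight times (1 + observed AI citations)."""
    risk = sum(RISK_WEIGHT[c] for c in detect_claims(page.text))
    return risk * (1 + page.ai_citations)


def audit(pages: list[Page]) -> list[Page]:
    """Pages ordered from highest to lowest exposure."""
    return sorted(pages, key=exposure, reverse=True)


pages = [
    Page("/blog/company-picnic", "Photos from our summer picnic.", 0),
    Page("/pricing-faq", "Plans start at $49 per month.", 4),
    Page("/hipaa-guide", "Our platform is HIPAA compliant.", 1),
]
print([p.url for p in audit(pages)])
```

Multiplying by observed citations reflects the prioritization described above: a high-stakes page that AI tools actually surface outranks an equally risky page they never cite.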

For organizations requiring additional specialized support, services like Contently’s Managing Editors can provide an embedded layer of editorial governance. These experts help teams uphold stringent accuracy standards and navigate complex compliance landscapes without compromising their publishing pace or innovative capacity.

The financial, reputational, and legal costs of correcting misinformation after it has propagated through AI systems are typically much higher than the investment required to manage content proactively. Building these systems now is not merely a defensive measure but a strategic imperative: it builds trust, safeguards brand integrity, and supports long-term operational resilience as more customer interactions run through AI. Treat it as a resolution that pays off throughout the year and beyond.

For more insights on building content operations that scale responsibly, explore Contently’s enterprise content solutions.

Frequently Asked Questions (FAQs):

How do I know if my content library has risk exposure to AI misinformation?

Start by systematically auditing all content that makes specific, verifiable claims, such as pricing, product capabilities, compliance statements, health advice, or financial guidance. Once identified, test how frequently and accurately AI systems like ChatGPT, Perplexity, and Google AI Overviews cite your content by posing relevant questions. Content that appears prominently in AI responses, especially if it contains high-stakes information, carries the highest exposure and should be prioritized for immediate accuracy verification and potential updates.

What do I need if I’m on a small content team with no dedicated compliance support?

Even with limited resources, foundational steps can be taken. At a minimum, assign clear ownership for content accuracy reviews, perhaps on a quarterly or semi-annual cadence for critical assets. Develop a simple risk classification system to route high-stakes content through an additional layer of editorial review before publishing. Crucially, document your verification processes and decision-making to demonstrate due diligence if questions about content accuracy arise. These basics do not require additional headcount but rather an intentional and disciplined approach to workflow design.
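For a small team, ownership, review cadence, and documentation can live in something as simple as a script or spreadsheet. A minimal sketch, assuming hypothetical field names and approximating quarterly and semi-annual cadences as 91 and 182 days: each asset has a named owner, an overdue check, and an append-only log of dated verification records that can serve as evidence of due diligence.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Assumed cadences in days (quarterly ~91, semi-annual ~182); adjust to your policy.
CADENCE_DAYS = {"quarterly": 91, "semiannual": 182}


@dataclass
class Asset:
    url: str
    owner: str           # person accountable for this asset's accuracy
    cadence: str         # key into CADENCE_DAYS
    last_reviewed: date
    log: list[dict] = field(default_factory=list)

    def next_review(self) -> date:
        return self.last_reviewed + timedelta(days=CADENCE_DAYS[self.cadence])

    def is_overdue(self, today: date) -> bool:
        return today > self.next_review()

    def record_review(self, reviewer: str, today: date, outcome: str,
                      notes: str = "") -> None:
        """Append a dated verification record, then reset the review clock."""
        self.log.append({"date": today.isoformat(), "reviewer": reviewer,
                         "outcome": outcome, "notes": notes})
        self.last_reviewed = today


def overdue_report(assets: list[Asset], today: date) -> list[tuple[str, str, str]]:
    """(url, owner, due date) for every asset past its review date."""
    return [(a.url, a.owner, a.next_review().isoformat())
            for a in assets if a.is_overdue(today)]


policy = Asset("/help/refund-policy", "j.doe", "quarterly", date(2025, 1, 10))
print(overdue_report([policy], date(2025, 6, 1)))
policy.record_review("j.doe", date(2025, 6, 1), "updated", "New refund window")
print(policy.next_review())
```

The log is append-only by convention: earlier records are never edited, so the history of who checked what, and when, stays intact if questions about accuracy arise later.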

How do I get legal and compliance teams to participate without slowing everything down?

The key is to integrate tiered review processes from the outset. Collaborate with legal and compliance teams to clearly define which content types necessitate their full sign-off (e.g., contracts, regulated disclosures, health claims) versus what can proceed with editorial approval alone (e.g., general blog posts, lifestyle content). Develop templates and pre-approved language for recurring claim types or standard disclaimers, which can significantly expedite legal reviews over time. The goal is to establish appropriate oversight and risk mitigation, not to create universal bottlenecks that stifle content production.