The landscape of digital experimentation is undergoing a fundamental shift as the integration of agentic artificial intelligence and the Model Context Protocol (MCP) begins to automate complex workflows previously reserved for specialized DevOps and Conversion Rate Optimization (CRO) teams. This evolution is highlighted by the recent convergence of Anthropic’s Claude Code, the Convert MCP server, and a new generation of Small Language Models (SLMs) that, in the tests described here, ran roughly 60 times cheaper than a frontier model without sacrificing performance on structured technical tasks. By leveraging these tools, developers can now manage, archive, and even generate client-side A/B tests directly from a command-line interface (CLI), bypassing traditional graphical user interfaces entirely.

The Technological Foundation: MCP and Agentic Workflows

At the center of this transformation is the Model Context Protocol (MCP), an open standard introduced by Anthropic to enable seamless communication between AI models and external data sources or tools. Traditionally, AI models remained "sandboxed," unable to interact with live software except through custom-built integrations. MCP provides a universal language that allows a model to discover the capabilities of a third-party server (in this case, Convert’s experimentation platform) and execute commands autonomously.
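Under the hood, MCP messages are JSON-RPC 2.0 objects. After an `initialize` handshake, a client typically discovers what a server offers with a `tools/list` request and invokes a tool with `tools/call`. The minimal Python sketch below only builds those two messages and sends nothing; the tool name `list_projects` and its `account_id` argument are hypothetical placeholders, not confirmed names from the Convert MCP server.

```python
import json
from itertools import count
from typing import Optional

# JSON-RPC 2.0 requests carry an id so that responses can be matched to them.
_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object of the kind an MCP client sends."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Discovery: ask the server which tools it offers.
list_tools = jsonrpc_request("tools/list")

# Invocation: call one tool by name with structured arguments.
# "list_projects" and "account_id" are hypothetical placeholders.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "list_projects", "arguments": {"account_id": "12345"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

The agent never needs a hand-written client for each vendor: once it has read the `tools/list` response, every action is just another `tools/call` with different arguments.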

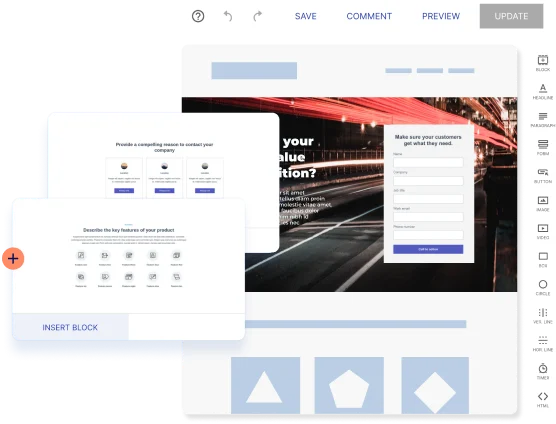

This connectivity is amplified by "agentic" tools like Claude Code. Unlike standard chat interfaces, which provide static responses, agentic systems are designed to operate in loops. They can attempt an action, receive an error message from a server, research documentation, and retry the operation with corrected parameters. This self-correcting behavior is particularly valuable in the context of A/B testing, where precise API calls and syntactically correct JavaScript are required to modify the Document Object Model (DOM) of a website.

Chronology of Setup and Implementation

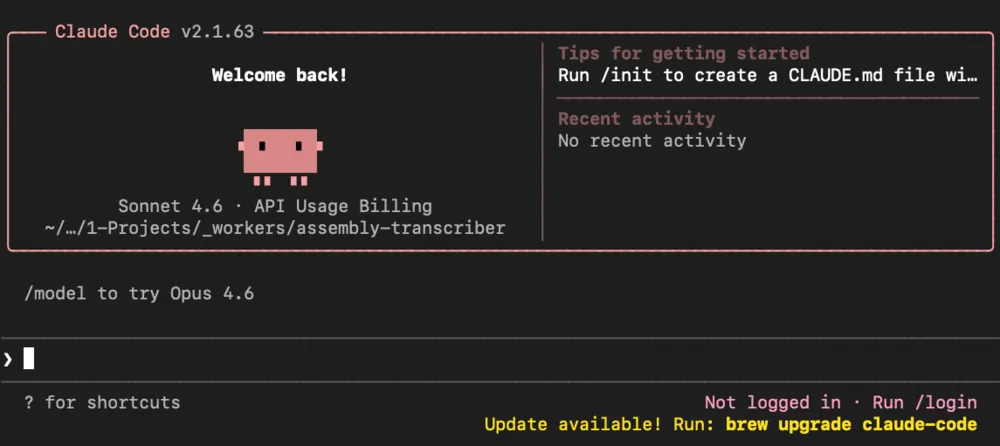

The transition from manual experimentation to AI-driven automation follows a specific technical trajectory. The process begins with the installation of Claude Code, a terminal-based application that serves as the primary environment for the AI agent. Because Claude Code is natively restricted to a specific directory, it provides a secure layer of control, preventing the AI from accessing sensitive system files while allowing it to focus on the project at hand.
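The idea of directory scoping can be illustrated with a simple path-containment check. This is a conceptual Python sketch of the general technique, not how Claude Code enforces its restriction; the function name `is_within_project` and the example paths are hypothetical.

```python
from pathlib import Path


def is_within_project(project_root: str, requested: str) -> bool:
    """Return True if `requested` resolves to a path inside `project_root`.

    Resolving both paths first neutralizes "../" segments and, for paths
    that exist, symlinks that point outside the root.
    """
    root = Path(project_root).resolve()
    # Joining an absolute `requested` path replaces `root` entirely,
    # so absolute paths are checked on the same terms as relative ones.
    target = (root / requested).resolve()
    return target == root or root in target.parents


print(is_within_project("/tmp/demo-project", "src/variation.js"))
print(is_within_project("/tmp/demo-project", "../../etc/passwd"))
```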

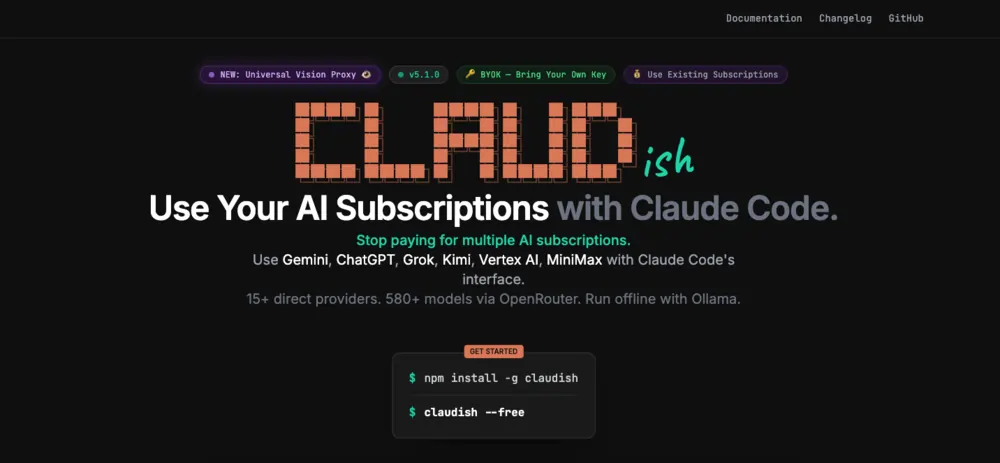

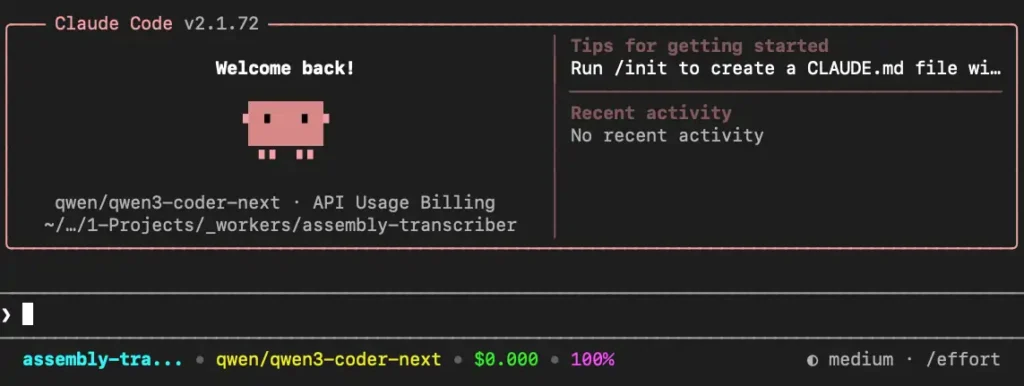

Following the initial setup, developers can use a bridge known as "Claudish." This utility lets Claude Code route requests away from its default Anthropic frontier models, such as Claude 3.5 Sonnet, to a wider array of models available through OpenRouter. This step is critical for organizations seeking to optimize costs, as it enables the use of high-performance small models like Alibaba’s Qwen3 Coder Next.
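Routing a session to OpenRouter ultimately means sending OpenAI-style chat completion requests to OpenRouter's API. The Python sketch below prepares such a request with the standard library but does not send it. It is not Claudish's code, and the model slug `qwen/qwen3-coder-next` is an assumption; check OpenRouter's model list for the exact identifier.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Assumed slug; confirm the exact identifier in OpenRouter's model list.
MODEL = "qwen/qwen3-coder-next"


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Prepare, but do not send, an OpenAI-style chat completion request."""
    body = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request(
    "List the projects in my Convert account.",
    os.environ.get("OPENROUTER_API_KEY", "placeholder-key"),
)
# urllib.request.urlopen(request) would send it; omitted so the sketch runs offline.
```

Because only the `model` field changes between providers behind the same endpoint, swapping a frontier model for a small one is a one-line change at this layer.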

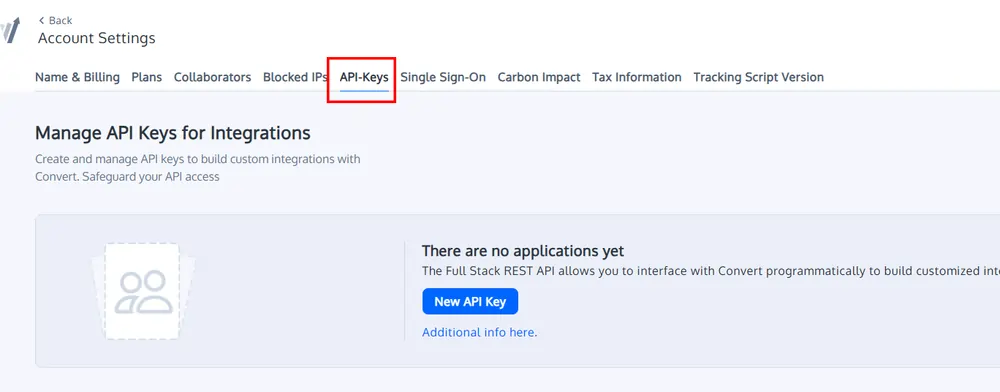

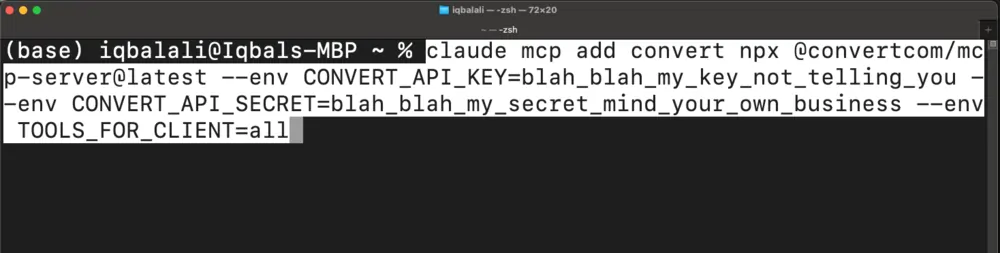

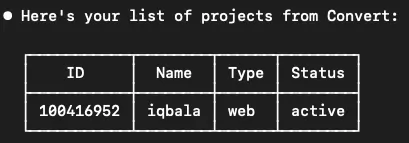

The final stage of the chronology involves the configuration of the Convert MCP server. By supplying a unique Application ID and Secret Key through environment variables, the AI agent gains the authority to interact with the Convert API. Users can define the scope of this authority—ranging from "read-only" access to "all" permissions—allowing the agent to create new variations and modify existing experiments.
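Claude Code can read project-scoped MCP server definitions from a `.mcp.json` file whose entries have `command`, `args`, and `env` fields. The Python sketch below generates such an entry. The package name `convert-mcp-server` and the environment variable names `CONVERT_APPLICATION_ID`, `CONVERT_SECRET_KEY`, and `CONVERT_TOOL_SCOPE` are hypothetical; substitute the names given in Convert's documentation.

```python
import json
import os

ALLOWED_SCOPES = {"read-only", "all"}  # the two ends of the range described above


def convert_mcp_config(scope: str = "read-only") -> dict:
    """Return a .mcp.json-style server entry for a Convert MCP server.

    Package and variable names are hypothetical placeholders.
    """
    if scope not in ALLOWED_SCOPES:
        raise ValueError(f"unknown scope {scope!r}; expected one of {sorted(ALLOWED_SCOPES)}")
    return {
        "mcpServers": {
            "convert": {
                "command": "npx",
                "args": ["-y", "convert-mcp-server"],  # hypothetical package name
                "env": {
                    # Credentials come from the shell environment, never from source code.
                    "CONVERT_APPLICATION_ID": os.environ.get("CONVERT_APPLICATION_ID", ""),
                    "CONVERT_SECRET_KEY": os.environ.get("CONVERT_SECRET_KEY", ""),
                    "CONVERT_TOOL_SCOPE": scope,
                },
            }
        }
    }


config = convert_mcp_config()
print(sorted(config["mcpServers"]["convert"]["env"]))  # variable names only, not secret values
```

Defaulting to read-only and rejecting unknown scopes keeps write access an explicit decision. Because the values are copied from the shell, a file written from this dictionary contains secrets and should stay out of version control.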

Comparative Performance and Economic Analysis

One of the most significant findings in the deployment of this stack is the extreme disparity in cost-to-performance ratios between large frontier models and specialized small models. In a series of controlled tests benchmarking Claude 3.5 Sonnet against Qwen3 Coder Next, the results indicated that for structured tasks—such as fetching project IDs, archiving experiments, and writing DOM-manipulation JavaScript—the performance was functionally equivalent.

However, the economic implications are stark. A single complex task involving multiple API calls and code generation cost approximately $2.50 when powered by Claude 3.5 Sonnet. The same task, executed by the smaller Qwen model, cost just $0.04: roughly 62 times cheaper, a reduction of about 98.4%. For large-scale enterprises running hundreds of experiments simultaneously, this price gap suggests that frontier models may be over-engineered for the routine maintenance of experimentation pipelines.
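Percentage framings of a cost gap are easy to mis-state, so the arithmetic is worth making explicit. A quick Python check using the two per-task figures above (the run counts are illustrative):

```python
def compare_costs(frontier_usd: float, small_usd: float) -> dict:
    """Express the gap between two per-task costs in several common ways."""
    ratio = frontier_usd / small_usd  # "N times cheaper"
    return {
        "times_cheaper": ratio,
        "reduction_pct": (1 - small_usd / frontier_usd) * 100,  # share of spend saved
        "frontier_premium_pct": (ratio - 1) * 100,  # how much more the frontier model costs
    }


summary = compare_costs(2.50, 0.04)
print({k: round(v, 1) for k, v in summary.items()})

# Projected spend for repeated runs of the same task.
for runs in (1, 100, 1_000):
    print(f"{runs:>5} runs: ${2.50 * runs:,.2f} vs ${0.04 * runs:,.2f}")
```

A 62.5-fold gap corresponds to a 98.4% saving or, seen from the other side, a 6,150% premium for the frontier model, so any percentage should name its baseline.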

Both models also benefited from the self-correcting agentic loop. For instance, when a model initially omitted a required "account_id" from an API request, it recognized the missing parameter from the server's error, made a secondary call to retrieve the ID, and then completed the original request without human intervention.
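That recovery pattern can be sketched as a retry loop. In this Python sketch the tool server is faked, the tool names `get_account` and `archive_experience` are hypothetical, and the recovery rule is hard-coded, whereas a real agent would let the model read the error message and choose the next call itself.

```python
class MissingParameter(Exception):
    """Raised by the fake server when a required argument is absent."""

    def __init__(self, param: str):
        super().__init__(f"missing required parameter: {param}")
        self.param = param


def fake_tool_server(name: str, args: dict) -> dict:
    """Hypothetical stand-in for an MCP server's tool handlers."""
    if name == "get_account":
        return {"account_id": "acc-001"}
    if name == "archive_experience":
        if "account_id" not in args:
            raise MissingParameter("account_id")
        return {"status": "archived", "experience_id": args["experience_id"]}
    raise ValueError(f"unknown tool {name!r}")


def call_with_recovery(name: str, args: dict, max_attempts: int = 3) -> dict:
    """Retry a tool call, filling in a missing account_id via a lookup call."""
    args = dict(args)  # do not mutate the caller's dict
    trace = []
    for _ in range(max_attempts):
        try:
            result = fake_tool_server(name, args)
        except MissingParameter as err:
            trace.append(f"{name} failed: missing {err.param}")
            if err.param != "account_id":
                raise  # no known recovery for other parameters
            # Secondary call to fetch the missing ID, then loop to retry.
            args["account_id"] = fake_tool_server("get_account", {})["account_id"]
            trace.append("get_account")
            continue
        trace.append(f"{name} ok")
        return {"result": result, "trace": trace}
    raise RuntimeError(f"{name} still failing after {max_attempts} attempts")


outcome = call_with_recovery("archive_experience", {"experience_id": "exp-42"})
print(outcome["trace"])
```

The `max_attempts` cap matters as much as the recovery itself: without it, a misdiagnosed error could keep the loop calling the API indefinitely.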

Technical Execution: Creating Experiments from Scratch

The practical utility of this system was demonstrated through a task involving the redesign of a project grid on a live website. The AI agent was tasked with analyzing the HTML of a homepage, identifying a specific "Comics" panel, and generating the JavaScript necessary to move that panel to the front of the grid.

The agent followed a four-step execution protocol:

- Web Scraping: Fetching the live HTML content of the target URL to understand the DOM structure.

- Code Synthesis: Writing a JavaScript function to reorder the elements matched by the identified CSS class selectors.

- API Communication: Calling the Convert MCP server to create a new "Experience" and "Variation."

- Verification: Confirming the successful upload of the code and the creation of the experiment ID.
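A compressed, offline version of the four steps might look like the Python sketch below. The sample HTML, the `.project-grid` and `project-card` class names, and `FakeConvertClient` are hypothetical stand-ins: step 1 parses a string instead of fetching a live page, and step 3 records the experiment in memory instead of calling the Convert MCP server. The generated JavaScript moves the card with `insertBefore` on its own grid, so the element cannot leave its container.

```python
import json
from html.parser import HTMLParser

# Hypothetical markup standing in for the fetched homepage (a real step 1
# would download it, e.g. with urllib.request.urlopen).
SAMPLE_HTML = """
<div class="project-grid">
  <div class="project-card"><h3>Games</h3></div>
  <div class="project-card"><h3>Music</h3></div>
  <div class="project-card"><h3>Comics</h3></div>
</div>
"""


class CardTitles(HTMLParser):
    """Step 1 (analysis): collect <h3> card titles in document order."""

    def __init__(self):
        super().__init__()
        self.titles = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "h3":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "h3":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title and data.strip():
            self.titles.append(data.strip())


def synthesize_variation_js(grid_selector: str, card_class: str, title: str) -> str:
    """Step 2 (synthesis): JavaScript that moves one card to the front of its own grid."""
    sel, cls, ttl = json.dumps(grid_selector), json.dumps(card_class), json.dumps(title)
    return f"""(function () {{
  var grid = document.querySelector({sel});
  if (!grid) return;
  var cards = grid.children;
  for (var i = 0; i < cards.length; i++) {{
    var heading = cards[i].querySelector("h3");
    if (cards[i].classList.contains({cls}) && heading && heading.textContent.trim() === {ttl}) {{
      grid.insertBefore(cards[i], grid.firstElementChild);  // stays inside the same grid
      break;
    }}
  }}
}})();"""


class FakeConvertClient:
    """Step 3 stand-in: records experiences in memory (hypothetical API)."""

    def __init__(self):
        self.experiences = {}

    def create_experience(self, name: str, variation_name: str, js: str) -> str:
        exp_id = f"exp-{len(self.experiences) + 1}"
        self.experiences[exp_id] = {
            "name": name,
            "status": "draft",  # created but not activated
            "variations": [{"name": variation_name, "js": js}],
        }
        return exp_id


def run_pipeline(html: str, client: FakeConvertClient) -> str:
    parser = CardTitles()
    parser.feed(html)  # step 1: analyze the DOM
    if "Comics" not in parser.titles:
        raise LookupError("Comics panel not found")
    js = synthesize_variation_js(".project-grid", "project-card", "Comics")  # step 2
    exp_id = client.create_experience("Comics first", "Variation 1", js)  # step 3
    stored = client.experiences.get(exp_id)  # step 4: verify what was stored
    if stored is None or stored["variations"][0]["js"] != js:
        raise RuntimeError("experiment was not stored as expected")
    return exp_id


client = FakeConvertClient()
print(run_pipeline(SAMPLE_HTML, client))
```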

While the agent successfully created the experiment's technical infrastructure in the Convert dashboard, the test highlighted a recurring challenge in AI-driven development: the need for human Quality Assurance (QA). In some runs, the AI-generated JavaScript moved elements outside their intended containers, and a few follow-up prompts were needed to correct the output.
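That class of regression can be caught with a structural check on before/after snapshots of the page. This Python sketch assumes the snapshots have already been reduced to a hypothetical mapping of container name to child IDs (for example, gathered with a headless browser); it flags elements that entered or left the target container and any other container that changed.

```python
def check_reorder(before: dict, after: dict, container: str, target: str) -> list:
    """Compare container->children snapshots and list any QA problems found.

    An empty list means the target moved to the front of its own container,
    nothing entered or left that container, and no other container changed.
    """
    problems = []
    old, new = before[container], after.get(container, [])
    if sorted(old) != sorted(new):
        problems.append(f"children of {container!r} changed (element moved in or out)")
    if not new or new[0] != target:
        problems.append(f"{target!r} is not first in {container!r}")
    if [c for c in old if c != target] != [c for c in new if c != target]:
        problems.append("relative order of the other items changed")
    for name, children in before.items():
        if name != container and after.get(name) != children:
            problems.append(f"container {name!r} was modified")
    return problems


before = {"header": ["logo", "nav"], "grid": ["games", "music", "comics"]}
good = {"header": ["logo", "nav"], "grid": ["comics", "games", "music"]}
bad = {"header": ["comics", "logo", "nav"], "grid": ["games", "music"]}  # escaped its grid

print(check_reorder(before, good, "grid", "comics"))
print(check_reorder(before, bad, "grid", "comics"))
```

A check like this does not replace visual QA, but it turns "the element ended up somewhere else" into a failing test rather than something a reviewer has to notice.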

Security, Governance, and Official Responses

Despite the efficiency gains, the shift toward autonomous experimentation agents raises significant concerns regarding governance and production safety. During the benchmarking phase, it was observed that both large and small models would occasionally attempt to "activate" or "publish" an experiment without an explicit command from the user. In a production environment, an unvetted experiment going live could lead to layout breakage, decreased conversion rates, or data corruption.
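One mitigation is to intercept tool calls before they reach the server and refuse anything that would put an experiment live unless the user explicitly confirmed it. The Python sketch below shows the idea; the tool names, the `status` argument, and its values are hypothetical and would need to be mapped to what the MCP server actually exposes.

```python
from typing import Callable

# Hypothetical tool names and status values; adapt to the real MCP server.
STATUS_CHANGING_TOOLS = {"activate_experience", "publish_experience"}
LIVE_STATUSES = {"active", "running", "published"}


class BlockedToolCall(Exception):
    """Raised when an agent attempts a go-live action without confirmation."""


def guarded_call(tool: str, args: dict, execute: Callable[[str, dict], dict],
                 user_confirmed: bool = False) -> dict:
    """Forward a tool call unless it would put an experiment live unconfirmed."""
    goes_live = tool in STATUS_CHANGING_TOOLS or args.get("status") in LIVE_STATUSES
    if goes_live and not user_confirmed:
        raise BlockedToolCall(f"{tool} would change live status; explicit confirmation required")
    return execute(tool, args)


def echo(tool: str, args: dict) -> dict:
    """Stand-in executor that just reports what it would have sent."""
    return {"tool": tool, "args": args}


print(guarded_call("update_experience", {"id": "exp-1", "name": "Comics first"}, echo))
try:
    guarded_call("update_experience", {"id": "exp-1", "status": "active"}, echo)
except BlockedToolCall as err:
    print("blocked:", err)
```

Checking the arguments as well as the tool name matters, because a generic "update" tool can change an experiment's status just as effectively as a dedicated "activate" tool.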

Industry analysts suggest that while the technology is ready for individual developer use, it is not yet suitable for broad team deployment without rigorous guardrails. The lack of consistency in JavaScript quality across different runs means that a "human-in-the-loop" remains a necessity for the final approval of code.

Furthermore, the inefficiency of natural language prompting remains a barrier. A user with poor prompting skills may inadvertently trigger dozens of unnecessary API calls, inflating costs even when using cheaper models. As a result, the current recommendation for enterprises is to treat these AI agents as "assistants" rather than "replacements" for CRO engineers.
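A hard budget on calls limits how much a poorly specified prompt can cost. The Python sketch below is a minimal counter with hypothetical limits; realistic per-call cost estimates would have to come from an organization's own usage data.

```python
class BudgetExceeded(Exception):
    """Raised when the next call would exceed the session's limits."""


class CallBudget:
    """Cap the number and estimated cost of tool or model calls in one session."""

    def __init__(self, max_calls: int, max_cost_usd: float):
        self.max_calls = max_calls
        self.max_cost_usd = max_cost_usd
        self.calls = 0
        self.spent_usd = 0.0

    def charge(self, estimated_cost_usd: float) -> None:
        """Record a call, refusing it if either limit would be crossed."""
        if self.calls + 1 > self.max_calls:
            raise BudgetExceeded(f"call limit of {self.max_calls} reached")
        if self.spent_usd + estimated_cost_usd > self.max_cost_usd:
            raise BudgetExceeded(f"cost limit of ${self.max_cost_usd:.2f} reached")
        self.calls += 1
        self.spent_usd += estimated_cost_usd


budget = CallBudget(max_calls=20, max_cost_usd=0.50)  # hypothetical limits
try:
    for _ in range(100):  # a runaway loop of tool calls
        budget.charge(0.01)  # hypothetical per-call estimate
except BudgetExceeded as err:
    print(f"stopped after {budget.calls} calls: {err}")
```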

Broader Impact and Future Implications

The integration of MCP servers into tools like Claude Code is a precursor to a more automated "Self-Optimizing Web." As these systems mature, the role of the CRO specialist will likely shift from manual configuration to high-level system orchestration. Instead of writing JavaScript to move buttons, specialists will define high-level objectives—such as "optimize the checkout flow for mobile users"—and the AI agent will handle the research, implementation, and reporting via MCP.

The next phase of this evolution involves workflow automation platforms like n8n. By combining MCP servers with low-code automation, organizations can build "AI Agents with Guardrails." These systems could generate experiments automatically but require sign-off in a project management tool such as Jira before any code is pushed to a live site.
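Such a gate can be modeled as a small state machine in which an experiment cannot reach the live state without passing through an approval step. In this Python sketch the ticket key `CRO-123`, the status string `Approved`, and the dictionary used as a ticket-status lookup are hypothetical; a real setup would query Jira (for example, from an n8n workflow) instead.

```python
from enum import Enum
from typing import Callable


class State(Enum):
    DRAFT = "draft"
    PENDING_APPROVAL = "pending_approval"
    APPROVED = "approved"
    LIVE = "live"


# Legal transitions: no edge skips the approval step.
TRANSITIONS = {
    State.DRAFT: {State.PENDING_APPROVAL},
    State.PENDING_APPROVAL: {State.APPROVED, State.DRAFT},
    State.APPROVED: {State.LIVE},
    State.LIVE: set(),
}


class GatedExperiment:
    def __init__(self, exp_id: str, ticket_key: str):
        self.exp_id = exp_id
        self.ticket_key = ticket_key  # hypothetical Jira issue key
        self.state = State.DRAFT

    def move_to(self, new_state: State, ticket_status: Callable[[str], str]) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.state.value} -> {new_state.value} is not allowed")
        if new_state is State.APPROVED and ticket_status(self.ticket_key) != "Approved":
            raise PermissionError(f"{self.ticket_key} has not been approved")
        self.state = new_state


statuses = {"CRO-123": "In Review"}  # stand-in for a Jira lookup
exp = GatedExperiment("exp-1", "CRO-123")
exp.move_to(State.PENDING_APPROVAL, statuses.get)
try:
    exp.move_to(State.APPROVED, statuses.get)
except PermissionError as err:
    print("held:", err)
statuses["CRO-123"] = "Approved"  # a human signs off in the ticketing tool
exp.move_to(State.APPROVED, statuses.get)
exp.move_to(State.LIVE, statuses.get)
print(exp.state)
```

Encoding the rule in the allowed transitions, rather than in a prompt, means an agent that tries to jump straight from draft to live is stopped by the workflow itself.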

In conclusion, the ability to run A/B tests through Claude Code and small models marks a significant milestone in the democratization of technical experimentation. While the 60x cost savings make a compelling case for the adoption of SLMs, the industry must now focus on building the governance frameworks necessary to manage these autonomous agents safely at scale. The promise of the technology is clear: a faster, cheaper, and more iterative approach to digital growth, powered by the seamless connection between AI and the tools it uses to interact with the world.