The digital marketing landscape has reached a critical juncture where the traditional reliance on simple A/B testing is increasingly viewed by industry experts as a symptom of low organizational maturity rather than a hallmark of data-driven success. While A/B testing has long been championed as the gold standard for reducing guesswork in product development and marketing, a growing body of evidence suggests that many enterprises have fallen into a "testing trap," where minor UI tweaks are prioritized over substantive strategic improvements. This shift in perspective comes at a time when e-commerce competition is at an all-time high, and the cost of customer acquisition demands more sophisticated methods of conversion rate optimization (CRO) than the binary choice between two button colors.

The Historical Context of the Experimentation Default

The rise of A/B testing as the default methodology for digital growth can be traced back to the early 2010s, coinciding with the democratization of experimentation platforms. Tools such as Optimizely, VWO, and later FigPii lowered the technical barriers to entry, allowing marketing teams to launch experiments without deep expertise in statistics or backend engineering. This "low-code" revolution shaped a corporate culture where "experimentation" became synonymous with "running a split test."

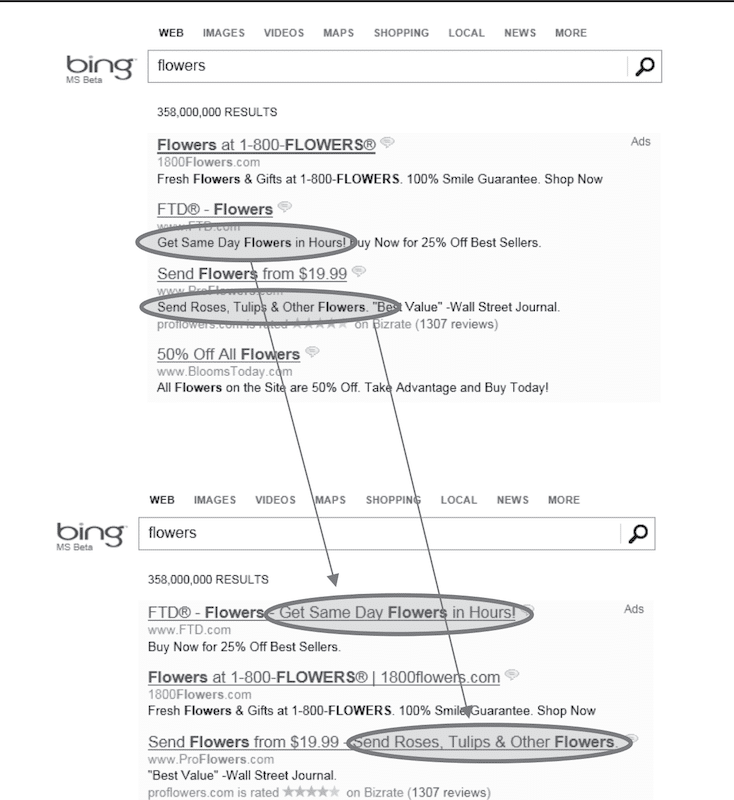

The normalization of this mindset was further solidified by high-profile success stories from major technology companies. Perhaps the most cited example is Microsoft’s Bing team, which famously discovered that merging two ad title lines into a single longer headline increased click-through rates enough to generate more than $100 million in additional annual revenue. Such outsized returns from seemingly minor adjustments led thousands of companies to adopt a "test everything" philosophy. Today, Microsoft reportedly conducts over 20,000 controlled experiments annually across the Bing ecosystem alone. However, for the average enterprise lacking Microsoft’s astronomical traffic, this volume-heavy approach has led to diminishing returns and a widespread misunderstanding of statistical significance.

Statistical Power and the Reality of Modern Traffic

A primary challenge facing the modern CRO industry is the lack of statistical power required to make A/B testing viable for most businesses. According to industry surveys, approximately 77% of all digital experiments are simple A/B tests involving only two variants. While this simplicity is attractive, it often fails to account for the volume of data needed to detect meaningful change.

To detect a modest relative lift of 1% to 2% with statistical confidence, a website often requires hundreds of thousands of visitors per variant, and at the low single-digit conversion rates typical of e-commerce the requirement can exceed a million. For many e-commerce brands, even those generating one to two million sessions per month, collecting that much data within a reasonable timeframe is impractical. When teams ignore these requirements, they often fall into three common failure modes:

- "Peeking" (a form of p-hacking): Stopping a test as soon as it shows a positive result, which often disappears once the planned sample size is actually reached.

- False Positives: Implementing changes based on "noise" rather than actual behavioral shifts.

- Slow Innovation Cycles: Running tests for 8 to 12 weeks to reach significance, effectively paralyzing the product development roadmap.
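To make the traffic requirement concrete, the sketch below estimates the per-variant sample size using the standard normal-approximation formula for comparing two conversion rates. The 3% baseline conversion rate, the 2% relative lift, the 5% significance level, the 80% power target, and the 350,000 weekly visitors are illustrative assumptions rather than figures from any particular site, and the function names are hypothetical.

```python
import math
from statistics import NormalDist


def required_sample_size(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant (two-sided two-proportion z-test)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_run(per_variant, weekly_visitors, variants=2):
    """Whole weeks of traffic needed when visitors are split evenly across variants."""
    return math.ceil(per_variant * variants / weekly_visitors)


if __name__ == "__main__":
    # Assumed inputs: 3% baseline conversion, 2% relative lift, 350k visitors per week.
    n = required_sample_size(baseline_rate=0.03, relative_lift=0.02)
    print(f"Visitors per variant: {n:,}")
    print(f"Weeks to run: {weeks_to_run(n, weekly_visitors=350_000)}")
```

Under these assumptions the estimate comes to roughly 1.3 million visitors per variant, or about eight weeks of traffic for a two-variant test. Raising the detectable lift shrinks the requirement quickly, which is one reason larger, more strategic changes are easier to validate than micro-tweaks.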

The "What" vs. The "Why": Analyzing the Mechanism of Failure

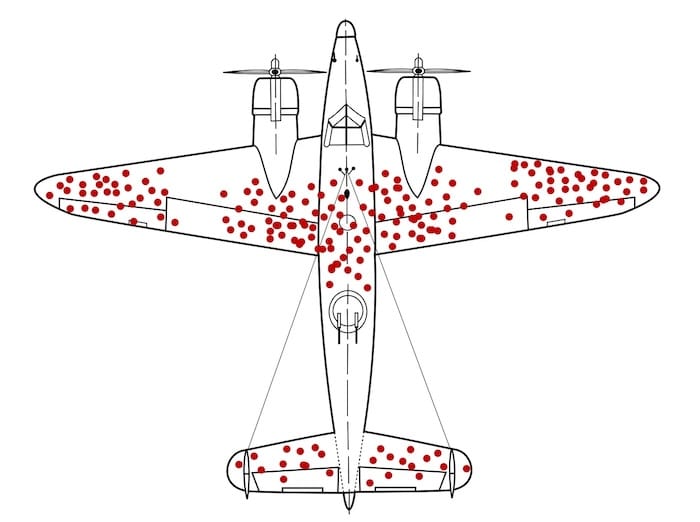

A fundamental limitation of A/B testing is its inability to explain the mechanism behind user behavior. A well-run test can show what happened (Variant B outperformed Variant A) but remains silent on why the shift occurred. This creates a blind spot comparable to survivorship bias.

During World War II, the statistician Abraham Wald famously analyzed bullet holes in returning aircraft to determine where to add armor. While the military initially wanted to reinforce the areas with the most holes, Wald realized that the most critical damage occurred in the areas where the returning planes had no holes—because the planes hit in those areas (the engines) never returned.

In the context of CRO, A/B tests only provide data on the "survivors"—the users who interacted with the site and moved through the funnel. They offer no insight into the users who abandoned the site entirely due to slow load times, confusing navigation, or a lack of trust. Without qualitative research to supplement the quantitative data, teams often find themselves "polishing the bullet holes"—optimizing minor elements for the users they already have, rather than fixing the fundamental vulnerabilities that drive potential customers away.

The Impact of Short-Term Optimization on Long-Term Health

One of the most significant risks of over-relying on A/B testing is the misalignment between short-term uplifts and long-term business health. Most tests are optimized for immediate actions: clicks, add-to-carts, or same-session purchases. However, these metrics can be deceptive.

Research on choice overload, often discussed under the label "Paradox of Choice," illustrates this risk, most famously the Jam Experiment conducted by researchers at Columbia and Stanford Universities. In the study, a display with 24 varieties of jam attracted more interest than a display with only six, but about 30% of the shoppers who stopped at the smaller display made a purchase, compared to just 3% at the larger one. An A/B test focused on "engagement" or "clicks" would have declared the 24-variety display the winner, despite the fact that it produced far fewer sales.
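The arithmetic behind the jam example can be sketched in a few lines. The purchase rates of 3% and 30% come from the text above; the stop rates of roughly 60% and 40% are the figures usually quoted from the original study and should be treated here as illustrative assumptions. The `Display` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Display:
    name: str
    stop_rate: float      # share of passersby who stop (assumed figure)
    purchase_rate: float  # share of those who stop and then buy

    @property
    def buyers_per_100(self) -> float:
        """Expected buyers per 100 passersby."""
        return 100 * self.stop_rate * self.purchase_rate


displays = [
    Display("24 jams", stop_rate=0.60, purchase_rate=0.03),
    Display("6 jams", stop_rate=0.40, purchase_rate=0.30),
]

# The same data produces a different "winner" depending on the metric chosen.
engagement_winner = max(displays, key=lambda d: d.stop_rate)
sales_winner = max(displays, key=lambda d: d.buyers_per_100)

if __name__ == "__main__":
    for d in displays:
        print(f"{d.name}: {d.buyers_per_100:.1f} buyers per 100 passersby")
    print("Winner on engagement:", engagement_winner.name)
    print("Winner on sales:", sales_winner.name)
```

On these numbers the larger display wins on engagement yet yields about 1.8 buyers per 100 passersby, against about 12 for the smaller one.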

Similarly, e-commerce teams often implement "dark patterns" or high-pressure tactics that increase immediate conversions but damage Customer Lifetime Value (CLV). A variant that increases sales today through misleading countdown timers may result in a surge of returns, negative reviews, and a permanent loss of brand loyalty tomorrow. High-maturity teams have begun to move away from these "vanity wins" in favor of metrics that track long-term profitability and repeat purchase behavior.

Strategies of High-Maturity Experimentation Teams

To overcome the limitations of basic split testing, leading organizations are adopting a broader experimentation toolkit and more rigorous research methodologies. Industry experts, including Kohavi, Tang, and Xu in their seminal work Trustworthy Online Controlled Experiments, suggest that mature organizations should utilize a variety of experimental designs:

- Sequential Testing: Allowing for earlier decision-making by adjusting significance thresholds for repeated interim looks at the data (a form of the multiple comparisons problem), which helps teams move faster without sacrificing statistical integrity.

- Holdout Groups: Keeping a small percentage of the audience in an "original" experience for months at a time to measure the long-term cumulative impact of all implemented changes.

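- Quasi-Experiments and Switchback Tests: Used in complex environments where users interact (like marketplaces or delivery apps) and clean user-level randomization is not feasible. Switchback tests, for example, alternate an entire market between variants over time to avoid "interference" between test variants.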
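The case for sequential designs is easiest to see by simulating what happens without them. The sketch below runs A/A tests, where both arms share the same true conversion rate, checks for significance at several interim looks, and counts how often a "winner" is declared anyway. It then repeats the exercise with a Bonferroni-corrected threshold, used here only as a simple, conservative stand-in for the group-sequential boundaries that dedicated tools apply. The number of looks, batch size, conversion rate, and function names are all assumptions chosen for illustration.

```python
import math
import random
from statistics import NormalDist


def z_statistic(conv_a, conv_b, n):
    """Pooled two-proportion z-statistic for two arms of n visitors each."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = math.sqrt(2 * pooled * (1 - pooled) / n)
    return 0.0 if se == 0 else (conv_b / n - conv_a / n) / se


def false_positive_rate(threshold, looks=5, batch=200, rate=0.2, sims=1000, seed=7):
    """Share of simulated A/A tests declared significant at any interim look."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(sims):
        conv_a = conv_b = 0
        for look in range(1, looks + 1):
            # Each look adds a fresh batch of visitors to both arms.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            if abs(z_statistic(conv_a, conv_b, look * batch)) > threshold:
                hits += 1
                break  # the team stops the test at the first "significant" look
    return hits / sims


ALPHA, LOOKS = 0.05, 5
naive_threshold = NormalDist().inv_cdf(1 - ALPHA / 2)                # same cutoff at every look
corrected_threshold = NormalDist().inv_cdf(1 - ALPHA / (2 * LOOKS))  # Bonferroni across looks

if __name__ == "__main__":
    print("Peeking, uncorrected:", false_positive_rate(naive_threshold, looks=LOOKS))
    print("Peeking, corrected:  ", false_positive_rate(corrected_threshold, looks=LOOKS))
```

Because there is no real difference between the arms, every declared winner is a false positive. With uncorrected peeking the rate should land well above the nominal 5%, while the corrected threshold keeps it at or below that level at the cost of some statistical power.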

Furthermore, high-maturity teams prioritize hypothesis quality over test quantity. Rather than pulling ideas from a random backlog, they ground their experiments in multi-source research, including:

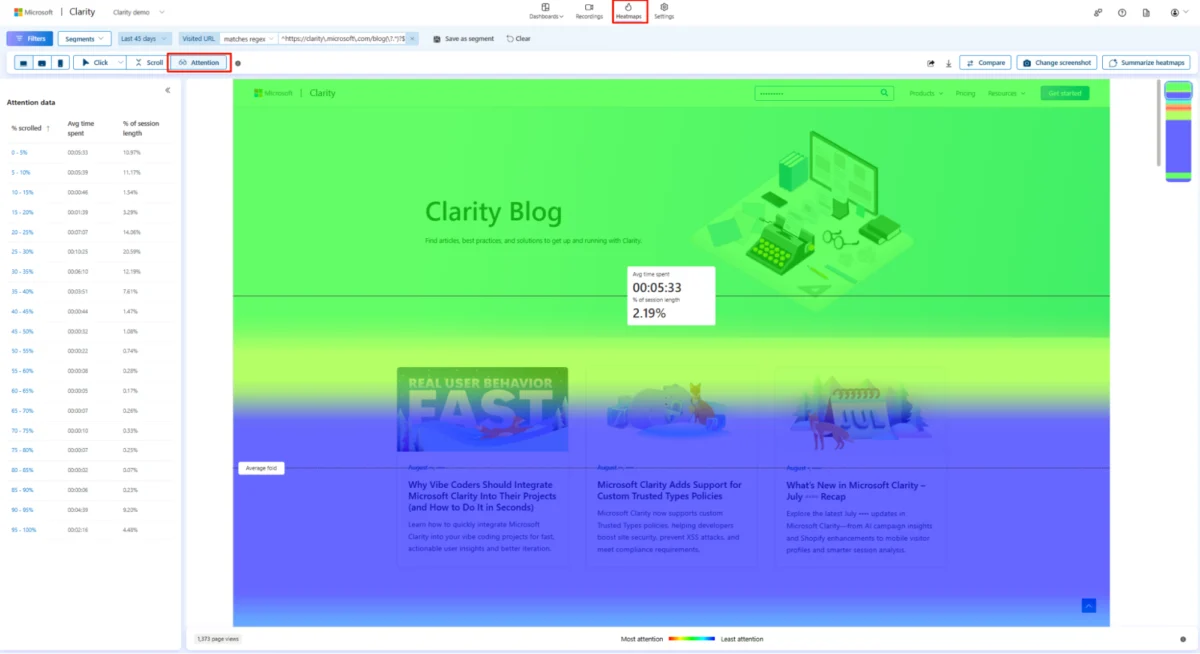

- Session Recordings: To identify friction points in the user journey.

- Support Tickets: To understand the specific questions and anxieties of the customer base.

- Heatmaps: To see which elements are being ignored by the majority of users.

- Post-Purchase Surveys: To capture the "voice of the customer" at the moment of highest intent.

Moving Toward Strategic Levers

The transition from a low-maturity to a high-maturity CRO program requires a shift from testing "micro-tweaks" to testing "strategic levers." While button colors and CTA copy are easy to ship, they rarely move the needle on revenue in a sustainable way.

Strategic experimentation focuses on the core drivers of the buying decision: pricing models, value propositions, information hierarchy, and product mix. For example, instead of testing the color of a "Buy Now" button, a high-maturity team might test whether offering "Carbon Neutral Shipping" as a default option increases conversion among Gen Z demographics, or whether a subscription-based pricing model improves long-term profit margins compared to one-time purchases.

Conclusion and Broader Implications

The professionalization of the CRO industry suggests that the era of "random acts of testing" is coming to an end. As data privacy regulations like GDPR and CCPA make tracking more difficult, and as consumer skepticism of digital marketing grows, the companies that succeed will be those that view experimentation as a holistic learning process rather than a series of binary choices.

The broader implication for the tech industry is a move toward "Evidence-Based Management." In this model, A/B testing is just one of many tools used to validate a business strategy. By combining quantitative data with qualitative insights and focusing on long-term business outcomes, organizations can build experiences that do more than just "win" a test—they build sustainable growth and genuine customer value. For many, the first step in this journey is acknowledging that while A/B testing is a powerful tool, it is not a substitute for a deep understanding of human behavior and sound business logic.