This is not a futuristic concept. It is the emergence of the agentic web, a shift in which artificial intelligence agents navigate websites, interpret their content, and perform tasks autonomously, changing how digital information is accessed and used. In the conventional web experience, people click, scroll, and search. AI agents are increasingly able to understand search intent, compare options, and execute actions on behalf of users, which turns websites from pages to be visited into endpoints to be queried. This architectural shift is already underway: the web is evolving from one designed exclusively for human users to one that serves both people and AI assistants.

The Dawn of the Agentic Web: A Paradigm Shift

For decades, the internet has largely operated on a simple, human-centric model. Users manually searched, clicked through results, compared information, and completed tasks. Even with the advent of sophisticated search engines, the core interaction model remained "search and click." That model is now changing. The agentic web moves beyond static content consumption to dynamic, autonomous interaction. Users are beginning to delegate complex tasks, such as researching products, comparing services, filling out forms, and executing transactions, to intelligent assistants. The user’s role shifts from active navigator to high-level decision-maker, and the emphasis shifts from searching to delegation.

This evolution extends far beyond smarter chat interfaces. It encompasses autonomous agents capable of interpreting nuanced search intent, evaluating multiple options, and executing complex actions. Websites are no longer just visual interfaces; they are becoming programmable endpoints designed for machine-to-machine communication. For this vision to scale, intelligence cannot be confined to a single assistant or a closed platform. It requires a distributed model in which systems can communicate with other systems without friction, and therefore a web that is machine-readable, interoperable, and explicitly engineered for agent-to-agent interaction. The agentic web is not a distant prediction; it is an architectural shift that is already redefining the digital landscape.

Microsoft’s Vision: Introducing NLWeb and the Model Context Protocol (MCP)

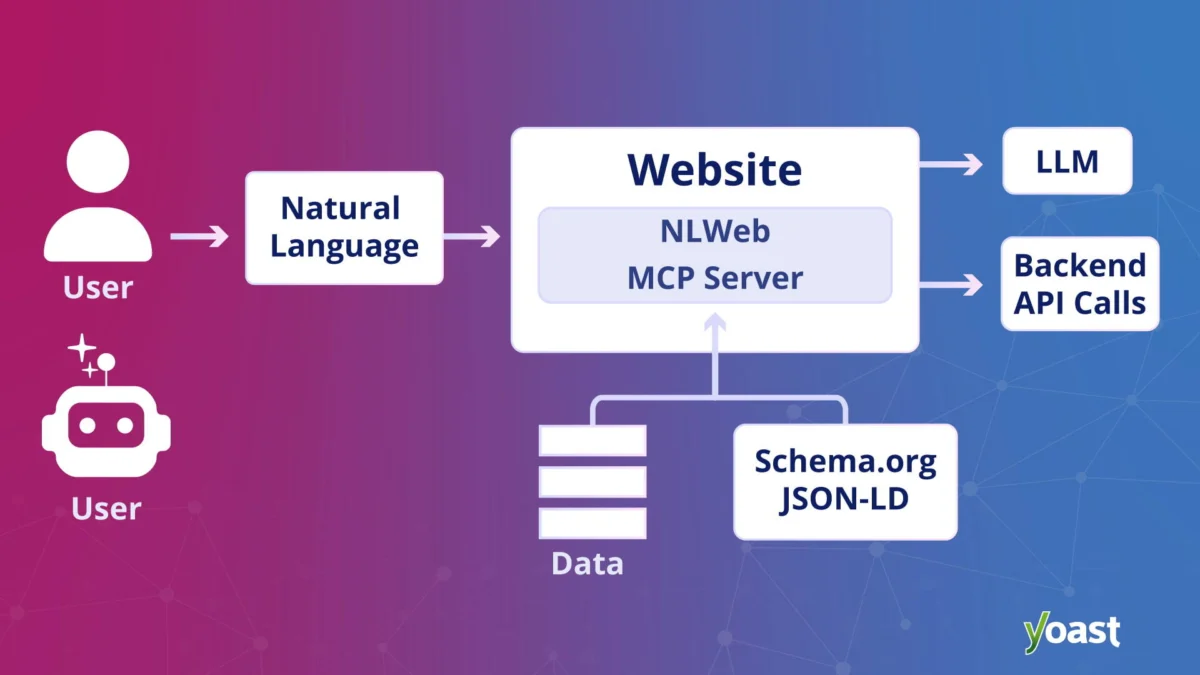

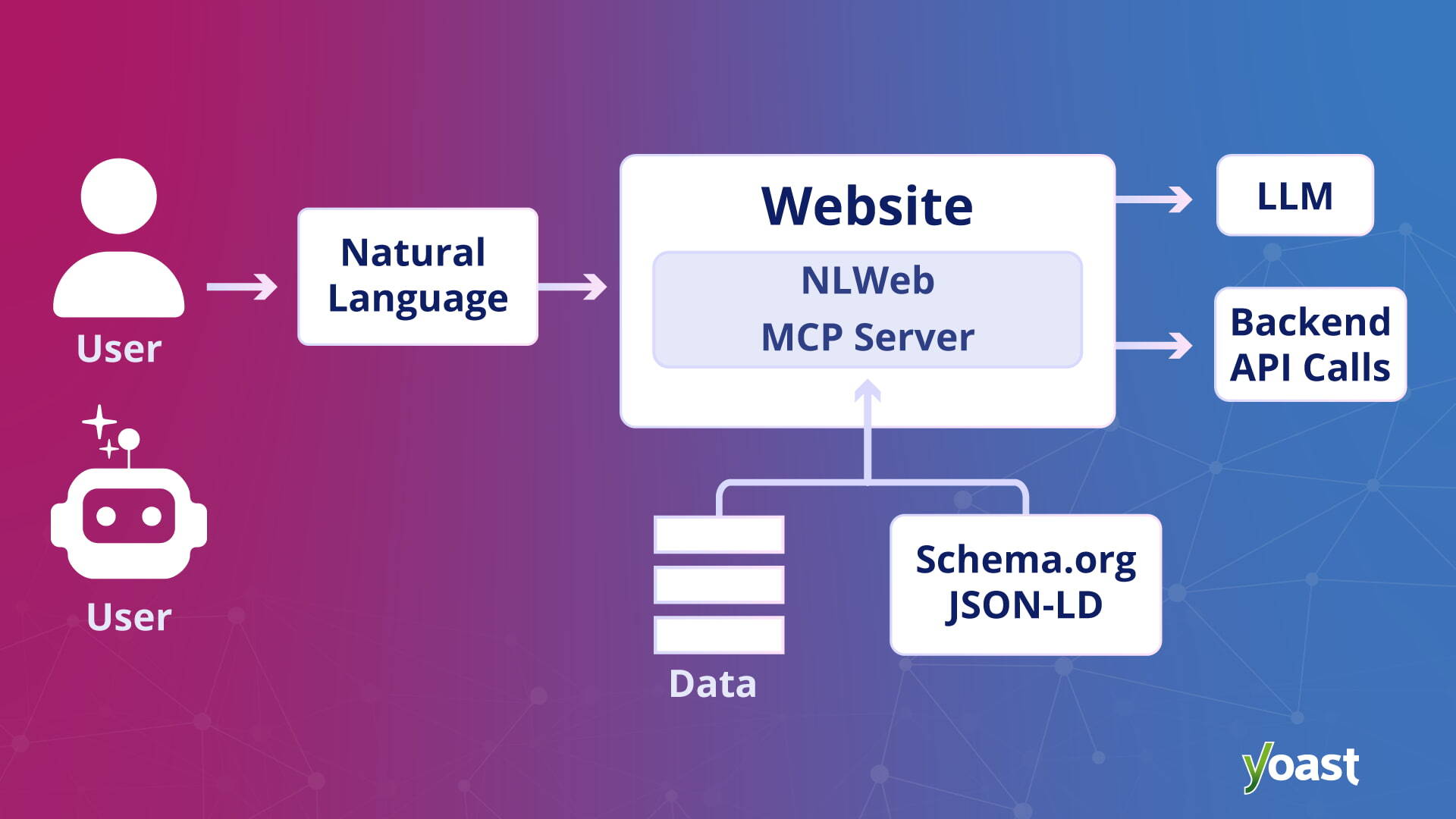

A pivotal development in the emergence of the agentic web is Microsoft’s NLWeb initiative. First introduced in May 2025 as an open project, NLWeb aims to empower websites to offer rich natural language interfaces using their own data and preferred AI models. The initiative gained significant traction and a broader spotlight in November 2025 at Microsoft Ignite, the company’s premier annual conference for developers and IT professionals. During this event, Microsoft not only reiterated the core principles of NLWeb but also unveiled its first enterprise offering through Microsoft Foundry, signaling a clear strategic commitment to this new frontier of web interaction.

At its core, NLWeb is designed to let any website function much like an AI application. Instead of navigating pages manually, users and AI agents can query a site’s content directly in natural language, which simplifies information retrieval and task execution. Crucially, NLWeb is more than a conversational layer: every NLWeb instance also functions as a Model Context Protocol (MCP) server. MCP is an open protocol, originally introduced by Anthropic, that standardizes how AI agents connect to external tools and data sources. When a website enables NLWeb, it therefore becomes discoverable and accessible to agents operating within the MCP ecosystem, and those agents no longer need a custom integration for every site they encounter. If a website supports NLWeb, agents can recognize it and interact with its content in a standardized way.
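
As a rough illustration of what a natural language query against a site could look like from the agent's side, the sketch below only builds the request URL. The `/ask` path and the `query` and `mode` parameter names are assumptions based on the NLWeb project's public documentation and should be checked against the version a site actually runs; `example.com` and the helper name `build_ask_url` are placeholders.

```python
from urllib.parse import urlencode


def build_ask_url(base_url: str, query: str, mode: str = "list") -> str:
    """Build a GET URL for a natural language query against a site.

    Assumes the site exposes an `/ask` endpoint that accepts `query`
    and `mode` parameters (an assumption, not a guaranteed interface).
    """
    params = urlencode({"query": query, "mode": mode})
    return f"{base_url.rstrip('/')}/ask?{params}"


url = build_ask_url("https://example.com/", "vegan mayonnaise under 5 dollars")
print(url)
# https://example.com/ask?query=vegan+mayonnaise+under+5+dollars&mode=list
```

An agent would send this request with any HTTP client and read the structured response, rather than loading and rendering the page.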

NLWeb builds on web formats that are already widely adopted, such as Schema.org for structured data and RSS for content distribution. By combining this structured data with large language models (LLMs), NLWeb generates natural language responses grounded in the site’s own content. This lets websites expose their content in a form that both human users and AI agents can understand. NLWeb is also designed to be technology-agnostic, so site owners can choose their preferred infrastructure, AI models, and databases. The overarching goal is broad interoperability rather than platform lock-in, echoing the open standards that defined the early web. In essence, NLWeb is positioned to play a foundational role in the agentic web, akin to HTML’s role in the early web, by providing a shared communication layer for direct agent-to-website queries that does not rely exclusively on traditional crawling or visual interfaces.
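
To make "structured data" concrete, here is a minimal sketch of a Schema.org `Product` expressed as JSON-LD, the kind of object a site could already publish. The product name, description, and price are invented for illustration, and the helper `product_jsonld` is hypothetical rather than part of NLWeb.

```python
import json


def product_jsonld(name: str, description: str, price: str, currency: str) -> dict:
    """Return a Schema.org Product description as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
    }


# Illustrative values only; not taken from any real catalog.
doc = product_jsonld(
    "Classic Mayonnaise", "Egg-based mayonnaise, 250 ml jar.", "3.49", "USD"
)
print(json.dumps(doc, indent=2))
```

Because every field has a defined meaning in the Schema.org vocabulary, a machine can read the price or the product name without guessing from page layout.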

How NLWeb Differs from Standard LLM Citations

A critical distinction of NLWeb lies in its approach to information retrieval compared to standard LLM citations. In many LLM interactions, the model generates an answer largely from patterns in its training data, and sources are attached afterward to support that probabilistic response. Because the answer is generated rather than retrieved, this approach, while powerful, can introduce inaccuracies or "hallucinations."

NLWeb operates on a fundamentally different principle. It treats the language model not as an inventor of answers but as an intelligent retrieval layer. Instead of generating speculative responses, NLWeb is engineered to pull verified objects and information directly from the website’s structured data. This data is then presented in natural language. This distinction is paramount: responses generated through NLWeb are inherently grounded in the publisher’s own authoritative data from the outset. This significantly reduces the risk of hallucination and grants website owners a far greater degree of control over how their content is represented and interpreted by AI agents. This data-first, retrieval-based approach enhances accuracy, trustworthiness, and the overall utility of agentic interactions.
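
The retrieval-first idea can be sketched in a few lines. This is a simplified stand-in, not NLWeb's actual pipeline: it ranks objects by plain keyword overlap instead of using a language model, and the catalog entries and function names are invented. The point it illustrates is that the answer is assembled only from fields of a retrieved object, and that no answer is given when nothing matches.

```python
def retrieve(query: str, objects: list[dict], top_k: int = 1) -> list[dict]:
    """Rank structured objects by how many query words appear in their text."""
    words = set(query.lower().split())

    def score(obj: dict) -> int:
        text = f"{obj.get('name', '')} {obj.get('description', '')}".lower()
        return len(words & set(text.split()))

    ranked = sorted(objects, key=score, reverse=True)
    # Drop objects that share no words with the query at all.
    return [obj for obj in ranked[:top_k] if score(obj) > 0]


def answer(query: str, objects: list[dict]) -> str:
    """Answer only from retrieved data; never invent content."""
    hits = retrieve(query, objects)
    if not hits:
        return "No matching item found in the site's data."
    item = hits[0]
    return f"{item['name']}: {item['description']}"


# Hypothetical catalog, invented for illustration.
CATALOG = [
    {"name": "Classic Mayonnaise", "description": "egg based spread in a glass jar"},
    {"name": "Vegan Mayo", "description": "plant based spread without egg"},
]

print(answer("which spread is plant based", CATALOG))
print(answer("laptop", CATALOG))
```

In a real deployment the ranking and the phrasing would be handled by a model, but the grounding constraint stays the same: the response text traces back to the publisher's own objects.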

The Evolution of Web Interaction: From Clicks to Queries

The shift from a web for users to a web for users and agents represents a profound evolution in how we conceive of and interact with online information. For years, web design and development prioritized visual presentation, intuitive navigation for human eyes, and the "click-through" model. Success was often measured by page views, time on site, and conversion rates driven by human interaction.

The agentic web, however, introduces a new metric: queryability. Instead of manually navigating a labyrinth of pages, users will increasingly delegate tasks to AI assistants that can intelligently search, interpret information, and act on their behalf. This means a user might simply ask their AI assistant, "Find me the best mortgage rate for a 30-year fixed loan from reputable banks, considering my credit score and income," and the agent would autonomously query various financial institution websites, compare offers based on predefined criteria, and present a synthesized, actionable summary, perhaps even initiating the application process.
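
The comparison step in that scenario might look something like the sketch below. The lenders, rates, and eligibility thresholds are entirely made up, and a real agent would weigh many more criteria; the sketch only shows the filter-then-rank logic applied to structured offers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Offer:
    lender: str
    rate_percent: float
    min_credit_score: int


def best_offer(offers: list[Offer], credit_score: int) -> Optional[Offer]:
    """Keep offers the user qualifies for, then pick the lowest rate."""
    eligible = [o for o in offers if credit_score >= o.min_credit_score]
    return min(eligible, key=lambda o: o.rate_percent) if eligible else None


# Invented data for illustration; not real lenders or rates.
offers = [
    Offer("Bank A", 6.1, 620),
    Offer("Bank B", 5.8, 680),
    Offer("Bank C", 5.5, 760),
]

choice = best_offer(offers, credit_score=700)
print(choice.lender if choice else "No eligible offer")
```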

This transition implies a shift in user experience from direct engagement with a website’s interface to indirect delegation through an intermediary agent. The user’s time is freed from the minutiae of browsing, allowing them to focus on decision-making based on aggregated, pre-processed information. This also implies a new competitive landscape for businesses, where visibility will depend not just on traditional search rankings but on the ability of their digital assets to be accurately and efficiently queried by AI systems. The web, in this new era, transforms from a collection of visual documents into a distributed, interactive database accessible through natural language.

Foundational Infrastructure: The Imperative of Protocol Thinking

The realization of the agentic web hinges on a critical, often overlooked, aspect: infrastructure and standardization. If intelligent systems are to interact seamlessly with millions of websites, the fundamental question becomes, "How do these systems understand each other?" The answer lies not in complex visual design but in robust, standardized infrastructure – what is termed "protocol thinking."

The internet’s success has always been predicated on shared communication rules. HTTP enables browsers to request and receive web pages. RSS facilitates the distribution of content updates. Structured data, like Schema.org, helps search engines interpret the meaning and context of information. These are not merely features; they are foundational protocols – agreements that enable large-scale coordination and interoperability across a vast, decentralized network.

The same logic now applies to AI agents. In the agentic web, agents will not mimic human browsing by clicking buttons or visually scanning pages. Instead, they will send programmatic requests, interpret structured responses, compare options, and complete tasks. For this intricate dance to occur reliably across an immense ecosystem of websites, communication cannot be improvised or site-specific. It must be standardized.
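
One way an agent can read a page without visually scanning it is to pull out the JSON-LD blocks embedded in the HTML. The sketch below does this with Python's standard `html.parser` module; the sample page and the class name `JsonLdExtractor` are invented for illustration.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.items: list[dict] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.items.append(json.loads("".join(self._buffer)))


# Hypothetical page used only for this example.
PAGE = (
    '<html><head><script type="application/ld+json">'
    '{"@type": "Product", "name": "Classic Mayonnaise"}'
    "</script></head><body><h1>Shop</h1></body></html>"
)

extractor = JsonLdExtractor()
extractor.feed(PAGE)
print(extractor.items)
```

The visible heading is ignored entirely; the agent works from the structured object alone.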

Protocol thinking therefore becomes essential for web developers and content creators. It means designing websites with machine predictability in mind. Rather than building custom integrations for every conceivable AI assistant or platform, websites expose a consistent, machine-readable interaction layer. Agents do not need to "learn" every unique interface; they rely on shared rules and protocols. As emphasized in discussions around distributed intelligence, the goal is not a monolithic chatbot controlling everything, but a decentralized network where intelligence is distributed. Systems need a simple, standardized way to communicate without having to understand the technical details of every tool they interact with. That shared language is precisely what protocols provide. In practice, protocol thinking involves:

- Standardized Data Formats: Adopting universal schemas (like Schema.org) that define the types of entities and relationships on a website, making data easily parseable by machines.

- API-First Design Principles: Building websites with programmatic access in mind, exposing data and functionalities through well-documented APIs.

- Machine-Readable Content: Ensuring that the semantic meaning of content is clear and unambiguous, moving beyond visual presentation to structured data representation.

- Authentication and Authorization Protocols: Developing secure, standardized ways for agents to verify identities and obtain necessary permissions to perform actions on behalf of users.

- Interoperable Communication Layers: Creating universal communication protocols that allow agents to exchange requests and responses consistently, regardless of the underlying platform or technology.

These protocols collectively create the shared language that allows AI agents to efficiently and accurately understand, interact with, and act upon the vast repository of information and services available on the agentic web.
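
As one concrete example of such a shared language, MCP messages are JSON-RPC 2.0 requests, and a tool invocation uses the `tools/call` method with a tool name and its arguments. The sketch below serializes such a request. The tool name `ask` and the `query` argument are assumptions chosen for illustration, not a confirmed interface of any particular server.

```python
import json


def mcp_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request that invokes a tool on an MCP server."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# "ask" and "query" are hypothetical names used for this example.
payload = mcp_tool_call(1, "ask", {"query": "vegan mayonnaise"})
print(payload)
```

Because the envelope is the same for every server, an agent needs to know only which tools a site offers, not how each site is built.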

Implications for SEO Professionals: Redefining Visibility

As the web evolves to accommodate AI agents, SEO professionals face a fundamental new challenge: "How do you maintain visibility and influence when answers are generated by AI rather than ranked and clicked?" This question gained significant clarity during Microsoft’s Ignite event, where a consultant highlighted a client selling products like mayonnaise. The client’s concern was straightforward: how to ensure their brand appears when an AI assistant is asked a question about mayonnaise. This simple query unveiled a deeper truth: if AI systems synthesize answers directly instead of presenting a list of search results, the very definition of "optimization" must change.

This shift is profound. The agentic web does not replace the open web; rather, it adds a sophisticated new layer on top of it. Traditional search engines will continue to index pages, and conventional rankings will still hold importance. However, intelligent systems now possess the capability to query websites directly, cross-reference information from multiple sources, and generate highly synthesized, contextually rich responses.

For SEO professionals, this fundamentally alters the role and perception of a website. It is no longer sufficient to think solely in terms of pages designed for human visitors. Websites must increasingly be treated as robust endpoints engineered for efficient querying by machines. This means that elements traditionally considered enhancements for rich results – such as meticulously implemented structured data, a clean and logical information architecture, and inherently machine-readable content – are no longer merely advantageous. They become the foundational prerequisites that enable AI systems to interpret, understand, and ultimately select a brand’s content in the first place. Visibility in this new agentic layer will be contingent upon clarity, interoperability, and a solid technical infrastructure. The imperative for SEOs is to ensure their websites are not just discoverable by human users, but also structured, accessible, and ready to be intelligently queried by autonomous systems.

Yoast’s Strategic Partnership: Empowering WordPress in the Agentic Era

In a significant announcement alongside NLWeb’s presentation, Microsoft highlighted Yoast as a key partner in extending agentic search capabilities to the vast WordPress ecosystem. This collaboration, detailed in an official press announcement regarding "Yoast and Microsoft’s NLWeb integration," underscores the practical steps being taken to bridge the gap between emerging AI technologies and widely used content management systems.

For the millions of WordPress site owners worldwide, concepts like infrastructure, endpoints, and communication protocols can often seem abstract and daunting. This is precisely where Yoast’s preparatory work becomes invaluable. While Yoast does not automatically deploy NLWeb for its users, its advanced schema aggregation feature – available in Yoast SEO, Yoast SEO Premium, Yoast WooCommerce SEO, and Yoast SEO AI+ – plays a crucial foundational role. This feature meticulously organizes and structures content on a WordPress site, creating a machine-readable data layer. Visually, nothing changes on the front end of the website for human visitors. However, beneath the surface, the underlying data structure is transformed, making it significantly easier to build and integrate NLWeb functionalities on top of it.
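
To give a sense of what a connected, machine-readable data layer means, the sketch below uses a general JSON-LD pattern: nodes in a graph that point to each other through `@id` references. It is not a description of Yoast's actual output or of its schema aggregation feature; the graph contents and the helper `resolve_refs` are invented for illustration.

```python
def resolve_refs(graph: list[dict]) -> dict[str, dict]:
    """Index nodes by @id and replace {"@id": ...} references with the full node."""
    by_id = {node["@id"]: dict(node) for node in graph}
    for node in by_id.values():
        for key, value in list(node.items()):
            is_reference = isinstance(value, dict) and set(value) == {"@id"}
            if is_reference and value["@id"] in by_id:
                node[key] = by_id[value["@id"]]
    return by_id


# Hypothetical graph; URLs and names are placeholders.
GRAPH = [
    {
        "@id": "https://example.com/#organization",
        "@type": "Organization",
        "name": "Example Foods",
    },
    {
        "@id": "https://example.com/mayo/#article",
        "@type": "Article",
        "headline": "How mayonnaise is made",
        "publisher": {"@id": "https://example.com/#organization"},
    },
]

nodes = resolve_refs(GRAPH)
article = nodes["https://example.com/mayo/#article"]
print(article["publisher"]["name"])
```

Once references are resolved, an agent can move from an article to its publisher without crawling a second page.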

In essence, Yoast is mapping and organizing structured data to drastically reduce the technical effort and complexity required for publishers to implement NLWeb. By leveraging Yoast’s schema capabilities, WordPress users are completing much of the essential groundwork necessary for their content to be understood and queried by AI agents. This strategic partnership ensures that the agentic web is not an exclusive domain for technically sophisticated enterprises but an accessible future for a broad spectrum of online publishers. It emphasizes that the agentic web is not merely about chasing a fleeting trend; it is about securing the continued discoverability, understandability, and usability of content in a future where intelligent systems increasingly act as proxies for human users.

Broader Industry Reactions and Future Outlook

The unveiling of the agentic web concept and initiatives like NLWeb has elicited a range of reactions across the tech industry and the broader web development community. Many see it as an inevitable and exciting evolution, promising unprecedented levels of efficiency and personalization for users. Industry analysts anticipate a surge in demand for web developers proficient in structured data, API design, and protocol-based communication. Early adopters and innovators are keen to experiment with these new capabilities to gain a competitive edge. The promise of reduced operational costs for businesses and more streamlined user experiences is a powerful motivator.

However, the transition also presents significant challenges and raises important considerations:

- Security and Data Privacy: As agents gain more autonomy and access to sensitive user data, robust security protocols and stringent data privacy measures will be paramount. Ensuring that agents act only within authorized boundaries and that data is protected from misuse will be a continuous challenge.

- Ethical AI and Bias: The intelligence underpinning agentic interactions must be developed and deployed ethically. Issues of algorithmic bias, fairness, and accountability will need to be addressed rigorously to prevent agents from perpetuating or amplifying societal biases.

- Content Monetization: The shift from direct website visits to AI-generated answers raises questions about traditional advertising models and how content creators will be compensated for their valuable information. New monetization strategies may need to emerge to support the open web in an agentic future.

- Digital Divide: Ensuring equitable access to the benefits of the agentic web and preventing a widening of the digital divide will require thoughtful policy and design.

- Web Governance: The decentralized nature of the agentic web will necessitate ongoing collaboration and agreement on universal standards and best practices for interoperability and ethical agent behavior.

Despite these challenges, the long-term vision for the agentic web is one of a more intelligent, responsive, and user-centric internet. It envisions a world where information is not just found but actively utilized to complete tasks, where online interactions are seamless, and where the digital environment proactively anticipates and serves user needs. The collaboration between tech giants like Microsoft and ecosystem enablers like Yoast signifies a concerted effort to lay the groundwork for this future, ensuring that the web remains an open, accessible, and dynamic platform for innovation.

In conclusion, the agentic web represents a fundamental architectural shift in the internet’s evolution. It moves beyond human-centric browsing to embrace autonomous AI agent interaction, transforming websites into queryable endpoints. Initiatives like Microsoft’s NLWeb, with its built-in support for the Model Context Protocol, are providing the infrastructure and standardized communication layers this transformation needs. For web publishers and SEO professionals, this means a renewed focus on structured data, machine readability, and interoperability. Online visibility and user engagement will increasingly depend on a website’s ability to communicate effectively with both humans and intelligent machines.