The Emergence of the Model Context Protocol in Experimentation

The foundation of this technological shift lies in the Model Context Protocol (MCP), an open standard designed to let Large Language Models (LLMs) interact with external data sources and software tools. An MCP server exposes an application's functions as named tools that an AI agent can discover and call. Convert.com, a prominent player in the A/B testing and experimentation space, recently released its own MCP server, which lets AI agents read and modify experimentation data directly.
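For readers new to the protocol: MCP messages use JSON-RPC 2.0, and a client asks a server to run one of its tools with a `tools/call` request. The minimal sketch below builds such a request. The tool name `list_experiences` and its `project_id` argument are hypothetical placeholders, not names taken from Convert's server.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and argument names; a real server advertises its own via `tools/list`.
print(make_tool_call(1, "list_experiences", {"project_id": "12345"}))
```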

Early adopters first used tools like Claude Code (Anthropic's command-line coding agent) to run experimentation tasks through natural language. This showed that a single prompt could generate an entire A/B test, but it also exposed significant operational risks: individual interactions with AI, however powerful, often lack the guardrails needed for enterprise-level deployment. The primary challenge for CRO experts and technical leads is now to harness this power without letting the AI make unilateral, and potentially costly, decisions in a live production environment.

The Risks of Autonomous AI in Production Environments

The transition from individual AI usage to team-wide implementation has uncovered several points of friction. The foremost concern is risk management. In unstructured environments, AI models have been known to take "unilateral actions," such as making an experiment active before it has undergone proper quality assurance (QA). For example, an LLM might decide to push a variation live based on its own interpretation of a "finish" command, bypassing human oversight.

Security is a further concern. Because MCP passes data and commands between the model and the applications it can act on, every connected server widens the potential attack surface. Managing separate MCP configurations across a diverse team is also a logistical nightmare. Inconsistent ways of working with the AI lead to "shadow AI" practices, in which efficiency gains stay with individuals instead of spreading across the organization. Without a standardized system, a task that takes one specialist three minutes might take another thirty, negating the primary benefit of automation.

Bridging the Gap: The AI System Philosophy

To resolve these issues, industry experts are advocating for the development of "AI Systems" rather than mere AI interactions. An AI system is defined as a series of managed workflows designed by experts. These workflows encapsulate the logic, rationale, and safety checks required for a task, allowing non-technical team members to benefit from AI without needing to understand the underlying prompt architecture or API complexities.

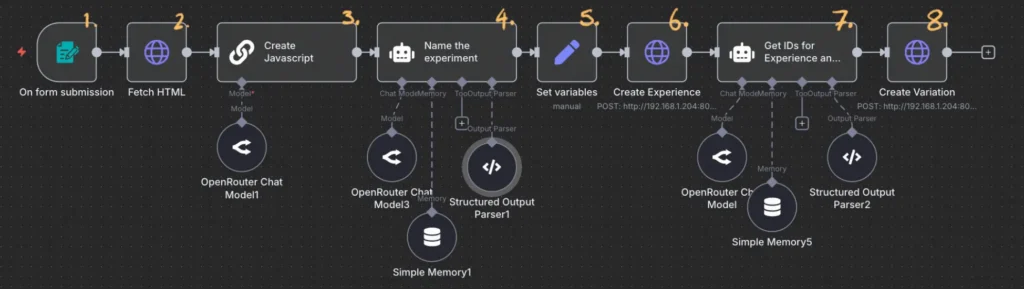

This approach utilizes a specific "tech stack" to create a controlled environment:

- n8n: A low-code workflow automation tool that serves as the orchestrator.

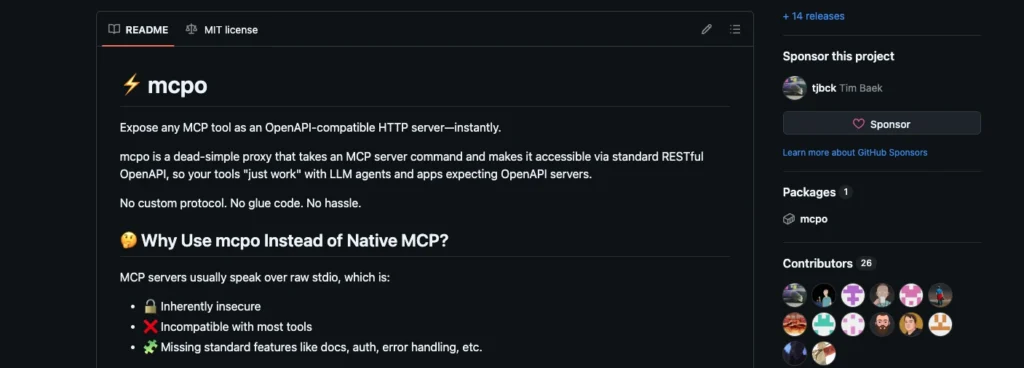

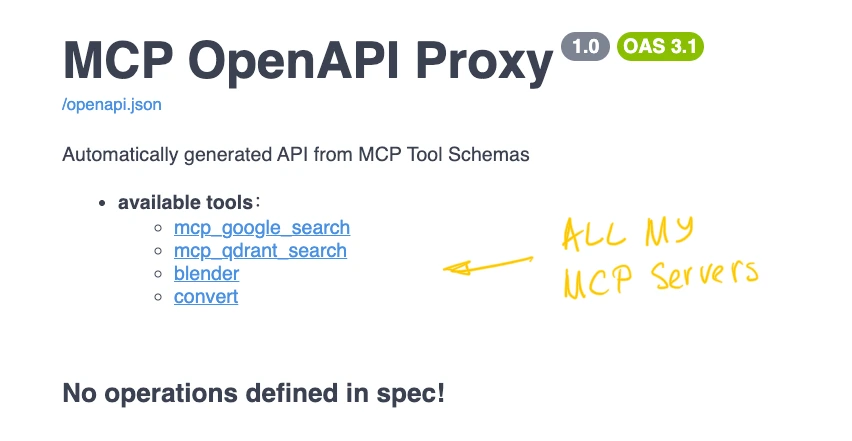

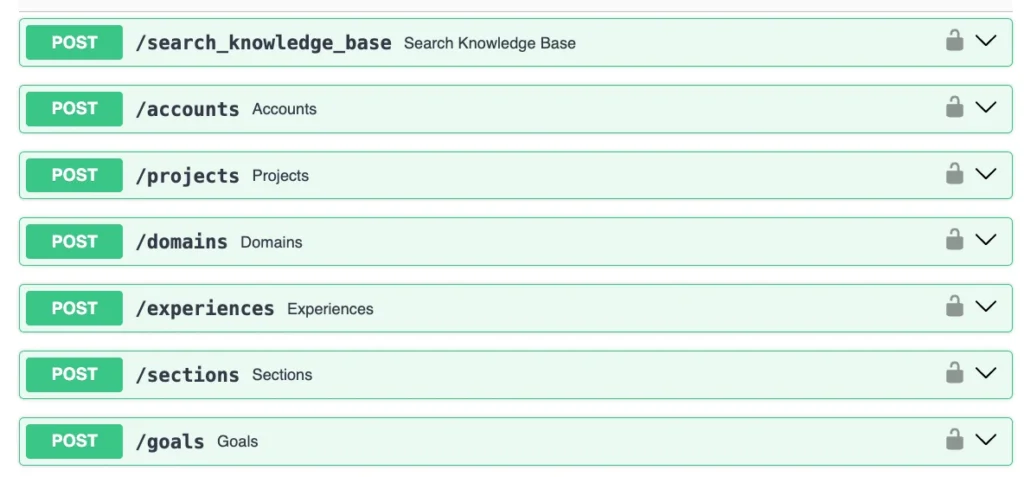

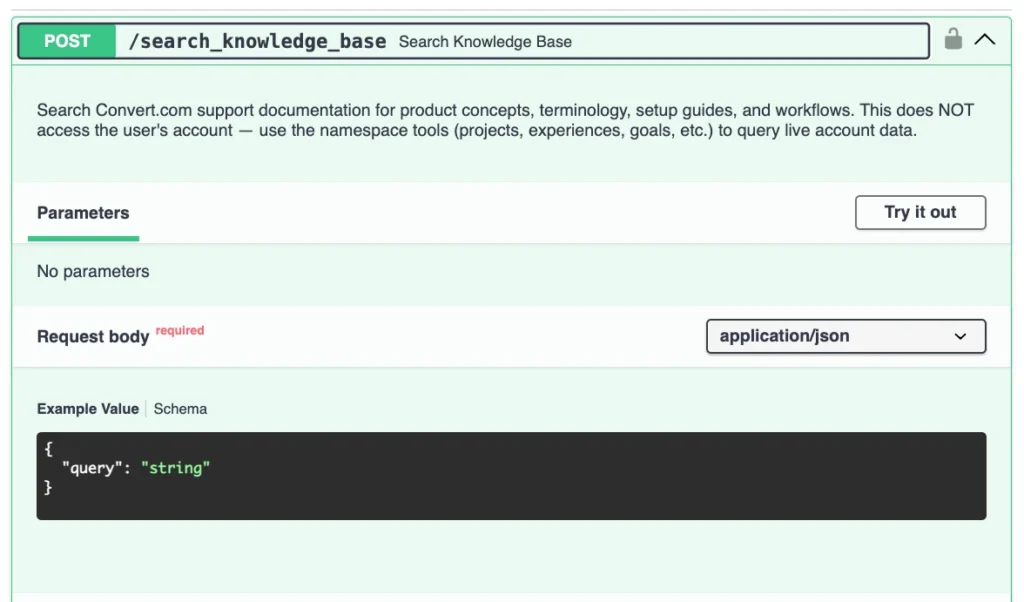

- MCPO: A bridge tool that converts MCP server configurations into standard, well-documented API endpoints.

- Convert MCP Server: The specific toolset that interacts with the experimentation platform.

- Small Language Models (SLMs): Specialized, cost-efficient models like Qwen3 Coder Next, which handle specific logic tasks within the workflow.

Technical Chronology: From Form Submission to Live Experiment

The workflow developed to solve the "rogue AI" problem follows a logical, six-step progression that prioritizes control and visibility.

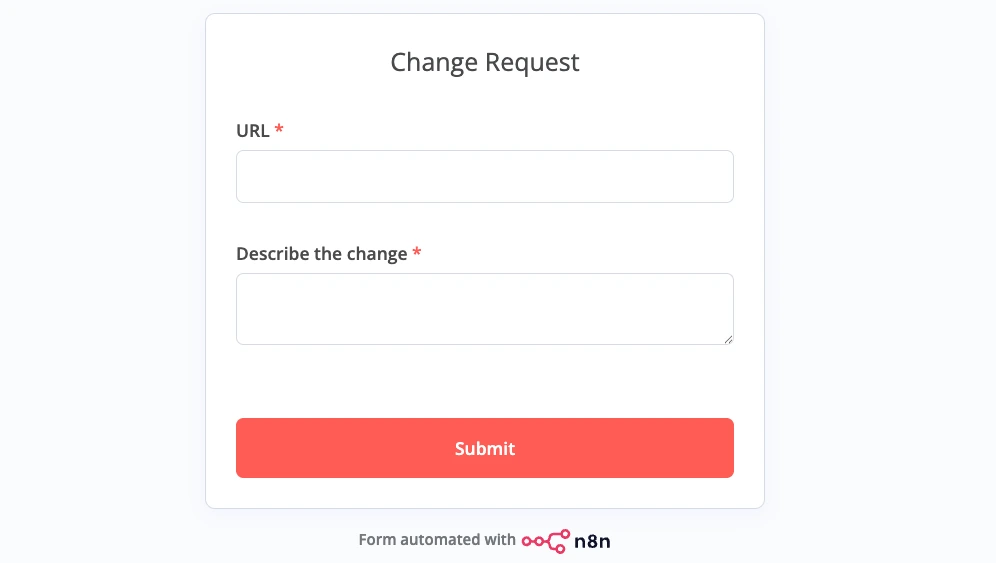

1. Input and Data Collection

The process begins with a simple interface: a form requiring only a URL and a description of the desired change. This removes the need for team members to log into complex dashboards or craft perfect prompts. Once submitted, the n8n orchestrator triggers the sequence.
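n8n can collect these fields with a form trigger and no code at all. The sketch below shows the equivalent check written by hand, the kind of validation a workflow might run before spending any tokens. The field names `url` and `description` and the `TestRequest` type are assumptions for illustration.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class TestRequest:
    url: str
    description: str


def parse_form(fields: dict) -> TestRequest:
    """Validate the two form fields before the workflow starts (field names are assumed)."""
    url = fields.get("url", "").strip()
    description = fields.get("description", "").strip()
    parsed = urlparse(url)
    # Require an absolute http(s) URL so step 2 has something it can fetch.
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError(f"not an absolute http(s) URL: {url!r}")
    if not description:
        raise ValueError("a description of the change is required")
    return TestRequest(url=url, description=description)
```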

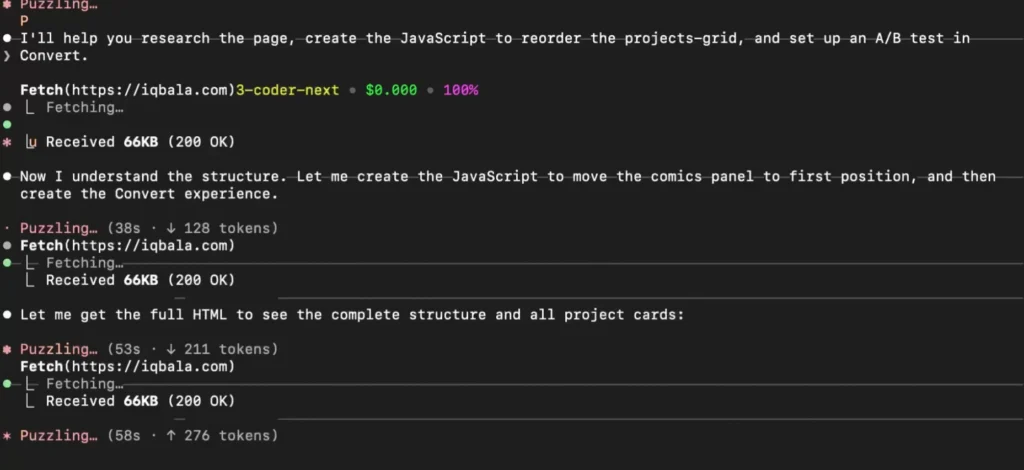

2. Environment Contextualization

The system automatically fetches the HTML content of the target URL. By retrieving the live code of the page, the system ensures that any subsequent AI-generated changes are based on the current state of the website, rather than outdated documentation or assumptions.
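In n8n this step is typically an HTTP Request node. A standard-library sketch of the same fetch follows; the User-Agent string `experiment-builder/0.1` is a placeholder.

```python
import urllib.request


def build_fetch_request(url: str) -> urllib.request.Request:
    # Identify the workflow in the site's server logs; the agent string is a placeholder.
    return urllib.request.Request(url, headers={"User-Agent": "experiment-builder/0.1"})


def fetch_page_html(url: str, timeout: float = 10.0) -> str:
    """Download the target page as the server delivers it right now."""
    with urllib.request.urlopen(build_fetch_request(url), timeout=timeout) as response:
        # Respect the charset the server declares, falling back to UTF-8.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

One caveat: this returns the HTML as the server sends it. Elements that the page inserts later with client-side JavaScript will not appear, so a page rendered mostly in the browser may need a headless-browser fetch instead.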

3. Targeted JavaScript Generation

The retrieved HTML and the user’s description are passed to a Small Language Model. Unlike a general-purpose LLM acting as an agent, this model is tasked solely with generating the JavaScript needed to make the change (e.g., changing a button color or reordering elements). Because its only output is code that the workflow then handles, the model has no way to take unrelated actions on the platform.
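The constraint can be enforced twice: the prompt asks for code only, and the workflow discards anything else in the reply. A minimal sketch of both halves, with hypothetical prompt wording; the model call itself is omitted because it depends on how the SLM is hosted.

```python
import re

FENCE = "`" * 3  # built indirectly so this sketch can sit inside Markdown

# Hypothetical system prompt; the real wording would be tuned by the workflow's designer.
SYSTEM_PROMPT = (
    "You write JavaScript for an A/B test variation. Reply with exactly one "
    "javascript code block that applies the requested change, and nothing else."
)


def build_codegen_prompt(html: str, description: str) -> str:
    """Combine the user's request with the live HTML fetched in step 2."""
    return f"Requested change:\n{description}\n\nCurrent page HTML:\n{html}"


_CODE_BLOCK = re.compile(FENCE + r"(?:javascript|js)?[ \t]*\n(.*?)" + FENCE, re.DOTALL)


def extract_js(model_reply: str) -> str:
    """Keep only the code from the reply; any surrounding prose is discarded."""
    match = _CODE_BLOCK.search(model_reply)
    if match is None:
        raise ValueError("model reply contained no code block")
    return match.group(1).strip()
```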

4. Metadata and Naming

Simultaneously, the AI generates a standardized name and description for the experiment. This ensures that the testing repository remains organized and that all team members can understand the purpose of an experiment at a glance, maintaining institutional knowledge.
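Letting the model draft the summary but assembling the final name in code keeps the format fixed even when the model's wording varies. The pattern below (date, page, short summary) and the six-word limit are illustrative conventions, not ones the source prescribes.

```python
from datetime import date
from urllib.parse import urlparse

MAX_SUMMARY_WORDS = 6  # hypothetical house rule


def standard_name(page_url: str, ai_summary: str, created: date) -> str:
    """Format an experiment name as 'YYYY-MM-DD | host/path | Summary'."""
    parsed = urlparse(page_url)
    page = (parsed.netloc + parsed.path).rstrip("/")
    # Trim the model's summary to a fixed length and drop a trailing period.
    summary = " ".join(ai_summary.split()[:MAX_SUMMARY_WORDS]).rstrip(".")
    return f"{created.isoformat()} | {page} | {summary[:1].upper()}{summary[1:]}"
```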

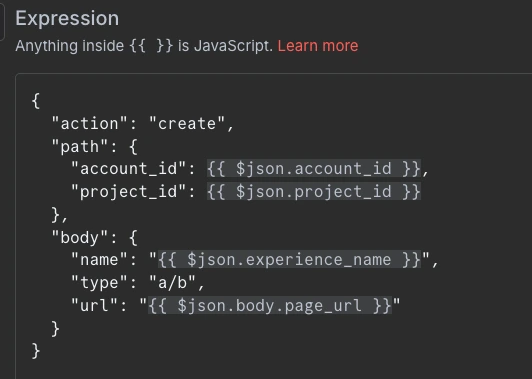

5. Experiment Creation via API

Instead of the AI "logging in" to a platform, the n8n workflow uses the MCPO-generated API to create the experiment container within Convert.com. This step is purely administrative and does not involve the AI’s "decision-making" capabilities, ensuring the structure follows predefined company standards.
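A minimal sketch of that administrative call, assuming MCPO's layout of one POST route per tool and bearer-token authentication with the API key. The base URL, the tool name `create_experience`, and the argument names are hypothetical; the OpenAPI docs that MCPO generates list the real ones.

```python
import json
import urllib.request

MCPO_BASE_URL = "http://localhost:8000"  # hypothetical MCPO address
CREATE_TOOL = "create_experience"        # hypothetical tool name


def build_create_request(api_key: str, project_id: str, name: str, description: str) -> urllib.request.Request:
    """Build (but do not send) the POST that creates the experiment container."""
    # Hypothetical argument names; copy the real schema from MCPO's generated docs.
    body = json.dumps({"project_id": project_id, "name": name, "description": description})
    return urllib.request.Request(
        f"{MCPO_BASE_URL}/{CREATE_TOOL}",
        data=body.encode("utf-8"),
        method="POST",
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
    )
```

Sending it is a single `urllib.request.urlopen(request)`. In n8n, the same URL, method, headers, and body template are fixed in the node's settings, so no model chooses which tool runs.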

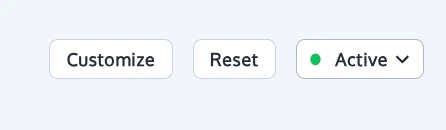

6. Variation Deployment and Locking

The final step involves adding the AI-generated JavaScript as a variation to the newly created experiment. Crucially, the workflow is configured to leave the experiment in a "draft" or "paused" state. This creates a mandatory "human-in-the-loop" checkpoint where a QA specialist must manually review and activate the test.
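The safety property here is structural: the workflow never sends an activation call, and the status it writes is fixed in the workflow rather than passed in. A minimal sketch, assuming a hypothetical payload with `experiment_id`, `variation`, and `status` fields (not Convert's actual schema):

```python
# Hypothetical payload shape; field names are placeholders, not Convert's schema.
INITIAL_STATUS = "draft"  # a constant, so no upstream step (or model) can change it


def build_variation_payload(experiment_id: str, js_code: str) -> dict:
    """Attach the generated JavaScript as a variation and keep the test inactive."""
    if not js_code.strip():
        raise ValueError("refusing to add an empty variation")
    return {
        "experiment_id": experiment_id,
        "variation": {"name": "Variation 1", "custom_js": js_code},
        "status": INITIAL_STATUS,
    }
```

Activation then remains a deliberate action taken by the QA specialist after review.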

Supporting Data: Cost and Efficiency Analysis

The financial and temporal implications of moving from high-end, chat-based AI to structured, small-model workflows are substantial. Data from early implementations show a dramatic reduction in token costs and processing time.

When using high-end models like Claude 3.5 Sonnet through an unstructured chat interface, a single experimentation task cost approximately $2.50 on average. While this is cheaper than manual developer hours, it becomes expensive at scale. Switching to a more efficient, specialized model like Qwen3 Coder Next reduced that cost to $0.04.

Moving the logic into a structured n8n workflow, where the AI is used only for "glue" tasks (specific code generation and naming), cut the cost further to $0.004 per task, a 99.8% reduction compared with the initial high-end model usage. The time to create a test, from form submission to a ready-to-review draft, is typically under 30 seconds, a fraction of the time required for manual dashboard entry.
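The percentages follow directly from the per-task costs quoted above. The calculation below reproduces them and scales each cost to 1,000 tasks; the 1,000-task volume is an arbitrary illustration, not a figure from the source.

```python
def reduction_pct(before: float, after: float) -> float:
    """Percentage saved when a per-task cost falls from `before` to `after`."""
    return (1 - after / before) * 100


# Per-task costs quoted in the text, in US dollars.
COSTS = {
    "chat with high-end model": 2.50,
    "specialized SLM": 0.04,
    "n8n workflow + SLM": 0.004,
}

baseline = COSTS["chat with high-end model"]
for label, cost in COSTS.items():
    print(
        f"{label:26s} ${cost:.3f}/task  "
        f"{reduction_pct(baseline, cost):5.1f}% below baseline  "
        f"${cost * 1000:,.2f} per 1,000 tasks"
    )
```

The same arithmetic shows the switch to a specialized model alone saved about 98.4%, and the workflow removed a further 90% of what remained.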

The Role of MCPO in Workflow Security

A significant technical hurdle in this setup was the friction between automation tools like n8n and the Model Context Protocol. MCP servers are designed to be called by conversational agents, whereas n8n excels at calling traditional APIs. MCPO (MCP-to-API) bridges this gap.

MCPO reads the MCP server configuration and exposes every tool defined in it under a single URL as an OpenAPI-documented HTTP API. Developers can then lock the server down with an API key, adding a layer of access control that a raw MCP configuration typically lacks. Each "node" in the n8n workflow is then constrained to a specific action. An HTTP Request node configured to "Create Experiment" cannot accidentally "Delete Experiment" or "Start Experiment", because its functional scope is hard-coded into the workflow logic, not left to the discretion of an LLM.
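In n8n the scope comes from each node's fixed URL. Expressed as code, the same principle is a table that binds each node to one route, plus a check that refuses everything else. Node and tool names below are hypothetical.

```python
# Each workflow node is bound to exactly one MCPO route at design time.
# Node and tool names are hypothetical.
NODE_ROUTES = {
    "create_experiment_node": "create_experience",
    "add_variation_node": "create_variation",
}


def route_for(node: str, requested_tool: str) -> str:
    """Return the URL path a node may call; refuse any other tool."""
    allowed = NODE_ROUTES.get(node)
    if allowed is None:
        raise KeyError(f"unknown node {node!r}")
    if requested_tool != allowed:
        raise PermissionError(f"{node} is limited to {allowed!r}, not {requested_tool!r}")
    return f"/{allowed}"
```

Tools such as starting or deleting an experiment simply have no entry in the table, so no node can reach them.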

Broader Impact and Industry Implications

The implications of "No-Dashboard" experimentation are profound for the CRO industry. Traditionally, the barrier to high-velocity testing has been the "developer bottleneck"—the delay between a marketing idea and the technical implementation of the test. By democratizing the creation of tests through simple forms, organizations can significantly increase their testing cadence.

However, this democratization does not come at the expense of quality. Because the "AI System" is designed by experts, every test generated follows the same naming conventions, coding standards, and safety protocols. This allows senior experimentation leads to scale their expertise across an entire department.

Furthermore, this model suggests a future where the "UI" of software becomes secondary to the "API" and "Workflow." If a team can run their entire experimentation program through a form and a Slack notification, the need for traditional, complex SaaS dashboards diminishes. This "headless" approach to software interaction allows for a more integrated, "invisible" tech stack that lives where the workers already are.

Future Outlook and Scalability

As organizations move toward 2026 and beyond, the focus will likely shift toward further refining these AI systems. Future iterations may include automated validation loops where a second AI model checks the code of the first model for errors, or integration with project management tools like Jira and Asana to automatically update tickets when a test is created.

The move toward small, sustainable models also ensures that these systems remain viable as token usage grows. By building around "small model" architecture, companies protect themselves from the fluctuating costs and potential "model collapse" of larger, general-purpose systems. The workflow approach ensures that as new, better models are released, they can be swapped into the "code generation" node without needing to rebuild the entire organizational process.
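The swap works because the rest of the workflow depends only on a narrow contract: a prompt goes in and text comes out. A minimal sketch of that boundary using a Python `Protocol`; both model classes are hypothetical stand-ins that return fixed strings instead of calling a real API.

```python
from typing import Protocol


class CodeModel(Protocol):
    """The only contract the code-generation node relies on: prompt in, text out."""

    def complete(self, prompt: str) -> str: ...


class CurrentModel:
    # Stand-in for today's SLM; a real version would call its hosting API.
    def complete(self, prompt: str) -> str:
        return "document.title = 'A';"


class NewerModel:
    # Stand-in for a future replacement that honors the same contract.
    def complete(self, prompt: str) -> str:
        return "document.title = 'B';"


def code_generation_node(model: CodeModel, html: str, description: str) -> str:
    """The node builds the prompt; which model answers it is a deployment choice."""
    prompt = f"Requested change:\n{description}\n\nCurrent page HTML:\n{html}"
    return model.complete(prompt)
```

Swapping models means passing a different object to `code_generation_node`; the form, naming, MCPO calls, and draft-state rule stay untouched.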

In conclusion, the transition from prompting to workflows represents the professionalization of AI in the workplace. By utilizing tools like n8n, MCPO, and Convert’s MCP server, experimentation teams can achieve a level of speed and cost-efficiency that was previously impossible, all while maintaining the rigorous control required for enterprise-level digital optimization.