Digital marketing has reached a critical juncture where the veneer of "data-driven" decision-making is being challenged by the necessity for true scientific rigor. At the heart of this transition lies A/B testing, a methodology that functions as a randomized controlled trial (RCT), the gold standard for establishing causality in fields such as clinical pharmacology. However, a growing consensus among data scientists and statisticians suggests that the digital marketing industry’s application of these tests has remained stagnant for decades, often relying on statistical frameworks that are nearly half a century behind modern scientific standards. The emergence of the AGILE statistical approach represents a significant effort to bridge this gap, offering a more efficient and accurate framework for conversion rate optimization (CRO) and digital experimentation.

The Statistical Crisis in Modern Marketing

The practice of A/B testing involves splitting a user base into two or more groups to compare the performance of different variables—such as website layouts, call-to-action buttons, or pricing models. By eliminating confounding variables, practitioners aim to establish a causal link between a specific change and a resulting lift in conversion rates. Despite the apparent simplicity of this process, the underlying statistics are fraught with complexities that many standard marketing tools and tutorials fail to address.

Industry experts identify three primary systemic issues currently plaguing A/B testing: the misuse of statistical significance tests, a profound lack of consideration for statistical power, and the inherent inefficiency of classical "fixed-horizon" testing models. These flaws often lead to "illusory results," where companies implement changes based on false positives or abandon potentially lucrative strategies due to false negatives.

1. The Perils of "Data Peeking" and Significance Misuse

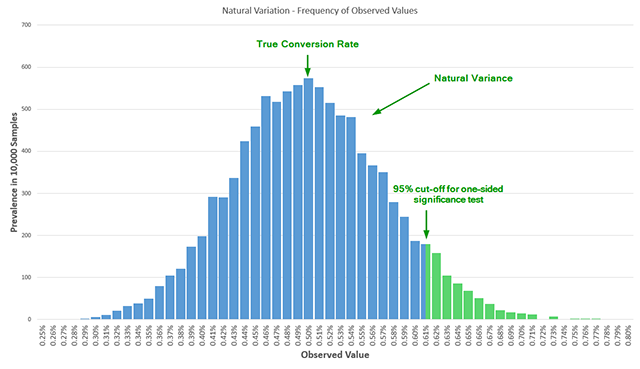

The most prevalent error in contemporary A/B testing is the violation of the "fixed-sample" assumption required by classical significance tests, such as Student’s t-test. In a traditional scientific setting, a researcher must determine the sample size in advance and analyze the results only once that specific number of observations has been reached.

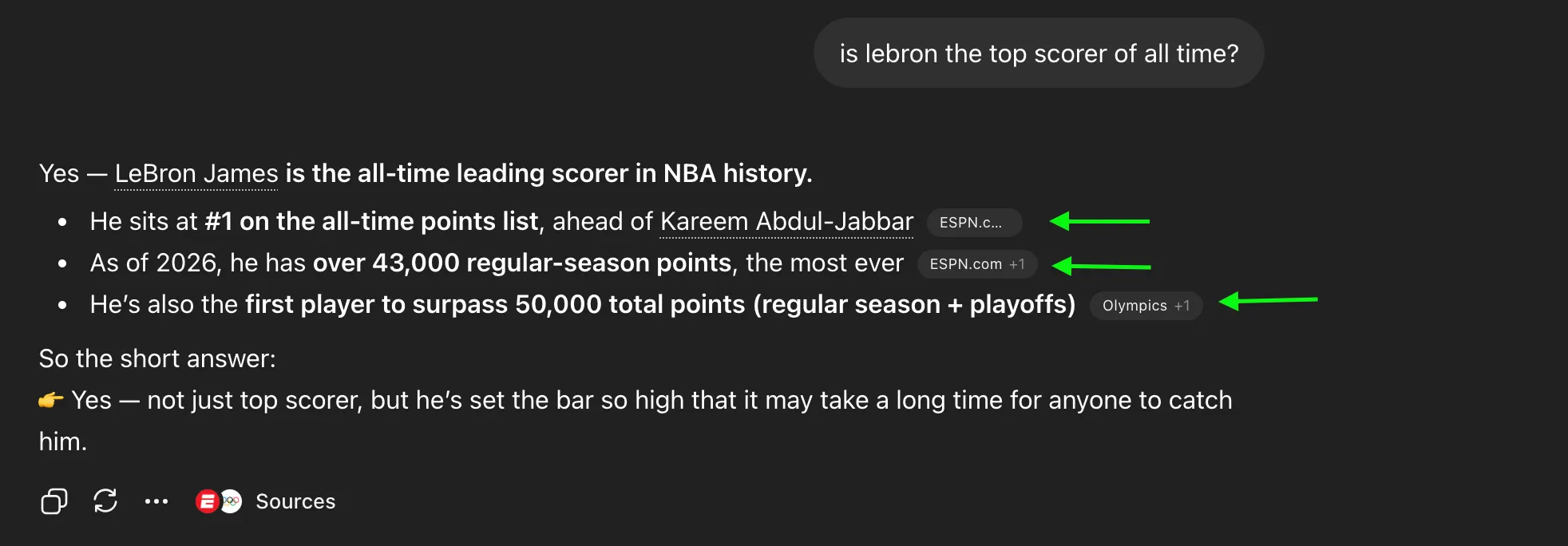

In the fast-paced environment of digital commerce, however, practitioners frequently monitor results in real time, a practice known as "data peeking." When a marketing team sees a "95% confidence" level on day three of a fourteen-day test, there is immense pressure to stop the test early and declare a winner.

Statistically, this is catastrophic. Checking the data repeatedly without adjusting the significance threshold inflates the probability of a false positive (Type I error) with every additional look. Classical results on repeated significance testing show that peeking just five times at a nominal 5% threshold roughly triples the actual error rate, to about 14%. If the reported confidence is 95%, the actual confidence is then closer to 86%, and it falls to around 80% after ten looks. Decisions built on such numbers are a textbook case of "GIGO": Garbage In, Garbage Out.
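The inflation caused by repeated looks can be checked with a short simulation. The sketch below is illustrative only: it assumes equally spaced looks, an A/A test with no true difference, a two-sided 1.96 threshold, and a normally distributed test statistic. The function name and its parameters are hypothetical, not part of any testing tool.

```python
import numpy as np

def peeking_false_positive_rate(n_looks, n_sims=20000, z_crit=1.96, seed=0):
    """Share of simulated A/A tests that look 'significant' at one or more peeks."""
    rng = np.random.default_rng(seed)
    # Each row is one simulated test with no true effect; each column is the
    # standardized contribution of the data gathered between two looks.
    increments = rng.standard_normal((n_sims, n_looks))
    # z-statistic at look k = sum of the first k increments / sqrt(k)
    z_at_look = np.cumsum(increments, axis=1) / np.sqrt(np.arange(1, n_looks + 1))
    # A test is a false positive if ANY look crosses the unadjusted threshold.
    return float(np.mean(np.any(np.abs(z_at_look) > z_crit, axis=1)))

for looks in (1, 2, 5, 10):
    rate = peeking_false_positive_rate(looks)
    print(f"{looks:>2} look(s): false positive rate ~ {rate:.3f}")
```

With a single look the rate stays near the nominal 5%. With every added look it climbs, even though each individual check still reports "95% confidence."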

2. The Neglected Metric: Statistical Power

While "statistical significance" (which caps the probability of a false positive when no real effect exists) is a household term in marketing departments, "statistical power" remains largely ignored. Power, or sensitivity, is the probability that a test will detect a true effect of a given size if it actually exists.

A test with low power is essentially a blunt instrument. If a test is underpowered, a variant that actually provides a 5% lift might be discarded because the test lacked the sensitivity to distinguish that lift from natural data variance. This results in a Type II error (false negative).
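To make the idea concrete, power can be approximated for a simple two-variant conversion test. This is a minimal sketch under stated assumptions: a one-sided two-proportion z-test with a normal approximation, a 2% baseline conversion rate, and 20,000 users per variant. None of these figures comes from a specific experiment, and `power_two_proportions` is a hypothetical helper name.

```python
from math import sqrt
from statistics import NormalDist

def power_two_proportions(p_control, relative_lift, n_per_variant, alpha=0.05):
    """Approximate power of a one-sided two-proportion z-test."""
    nd = NormalDist()
    p_variant = p_control * (1 + relative_lift)
    # Standard error of the difference between the two conversion rates
    se = sqrt(p_control * (1 - p_control) / n_per_variant
              + p_variant * (1 - p_variant) / n_per_variant)
    z_alpha = nd.inv_cdf(1 - alpha)  # critical value for the chosen significance level
    # Probability that the observed z-score clears the critical value
    return nd.cdf((p_variant - p_control) / se - z_alpha)

# Assumed scenario: 2% baseline, true 5% relative lift, 20,000 users per variant
print(f"power ~ {power_two_proportions(0.02, 0.05, 20_000):.2f}")
```

Under these assumptions the test would miss a genuine 5% lift far more often than it would catch it, which is exactly the underpowered situation described above.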

Historical analysis of conversion optimization literature reveals a startling gap: out of seven influential books on A/B testing published between 2008 and 2014, only one addressed statistical power with any depth. This neglect means that many companies are unknowingly running "coin-toss" experiments, where the chance of detecting a successful variant is no better than 50/50, regardless of the quality of the marketing hypothesis.

3. Inefficiency and the Opportunity Cost of Fixed Testing

Classical statistical methods are often rigid. They require practitioners to wait for the completion of a pre-determined sample size, even if a variant is performing so poorly that it is costing the company revenue, or so well that every day spent testing is a day of missed implementation.

In clinical trials, it is considered unethical to continue a study if a drug is clearly causing harm or if its benefits are so overwhelming that withholding it from the control group would be wrong. Digital marketing faces a parallel dilemma, though a financial rather than an ethical one. If a test is planned for 100,000 users but a 20% lift is evident after 40,000, the "fixed-horizon" approach demands that the remaining 60,000 users be tested regardless, leading to significant inefficiency and delayed revenue.

The AGILE Framework: A Paradigm Shift Borrowed from Medicine

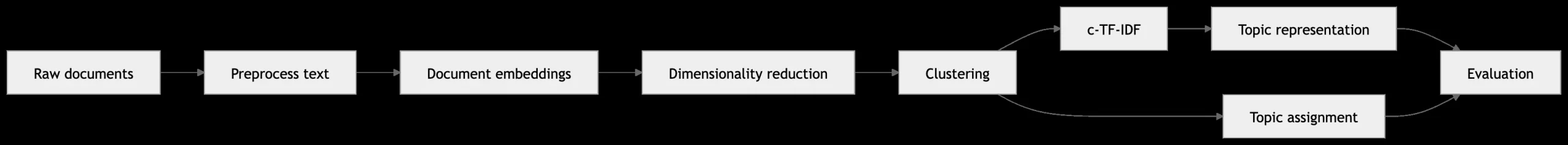

To combat these issues, the AGILE statistical approach has been proposed as a modern alternative. Drawing inspiration from sequential analysis used in medical bio-statistics, the AGILE method introduces a framework designed for the realities of the digital age.

Sequential Analysis and Error-Spending Functions

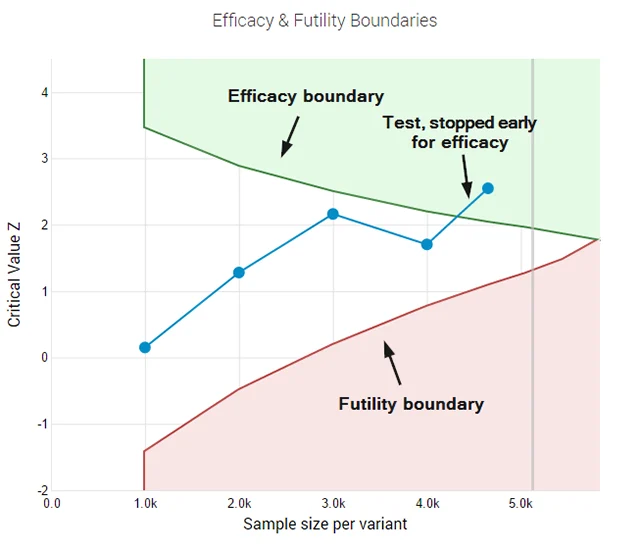

The cornerstone of the AGILE method is the use of "error-spending functions." Unlike classical tests that require a single look at the end of the experiment, error-spending allows for multiple interim analyses. It mathematically adjusts the significance threshold at each "peek" to ensure that the overall probability of a false positive remains controlled at the desired level (e.g., 5%).
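As an illustration of what "spending" error means, the sketch below uses a power-family spending function, alpha(t) = alpha * t^rho, where t is the fraction of the planned sample collected so far. The exponent `rho=3` and the five equally spaced looks are assumptions chosen for illustration. The article does not state which spending function AGILE uses.

```python
def alpha_spent(info_fraction, alpha=0.05, rho=3.0):
    """Cumulative Type I error allowed once a fraction t of the planned data is in."""
    if not 0.0 <= info_fraction <= 1.0:
        raise ValueError("info_fraction must lie between 0 and 1")
    return alpha * info_fraction ** rho

# Assumed schedule: five equally spaced interim analyses
looks = [0.2, 0.4, 0.6, 0.8, 1.0]
previous = 0.0
for t in looks:
    cumulative = alpha_spent(t)
    # The increment is the slice of the total error budget released at this look.
    print(f"t={t:.1f}  cumulative={cumulative:.5f}  spent at this look={cumulative - previous:.5f}")
    previous = cumulative
```

Very little of the 5% budget is released early, so an early stop demands overwhelming evidence, and the full budget is reached only at the final look. Turning each slice into a z-score threshold requires numerical integration over the joint distribution of the interim statistics, which is omitted here.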

This flexibility allows teams to monitor data daily or weekly without compromising the integrity of the results. If the data shows an early, overwhelming success, the test can be stopped with statistical validity.

Futility Stopping Rules

One of the most innovative aspects of the AGILE approach is the "futility" rule. In traditional testing, if a variant is underperforming, the practitioner is often told to "wait and see" until the sample size is reached. AGILE allows for a test to be abandoned early if the probability of it ever reaching statistical significance becomes negligible.

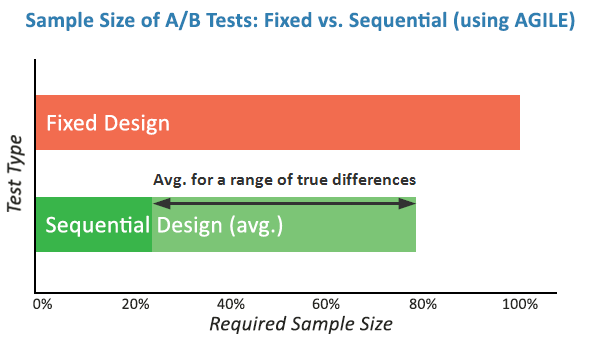

By "failing fast," organizations can reallocate resources and traffic to new, more promising hypotheses. Simulations of the AGILE method indicate that this can lead to efficiency gains of 20% to 80% in terms of sample size and time, depending on the actual magnitude of the lift being tested.
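The intuition behind a futility check can be sketched with conditional power: the chance that the test would still end up significant if the trend seen so far simply continued. This is a related, simplified technique offered for illustration, not necessarily the rule AGILE applies. It assumes a one-sided test and a fixed-horizon critical value at the final look, and it ignores any adjustment for efficacy boundaries.

```python
from math import sqrt
from statistics import NormalDist

def conditional_power(z_interim, info_fraction, alpha=0.05):
    """Chance of a significant final result if the current trend continues."""
    if not 0.0 < info_fraction < 1.0:
        raise ValueError("info_fraction must be strictly between 0 and 1")
    nd = NormalDist()
    z_final_crit = nd.inv_cdf(1 - alpha)
    b_value = z_interim * sqrt(info_fraction)  # interim statistic on the partial-sum scale
    drift = b_value / info_fraction            # effect estimate implied by the current trend
    expected_final = b_value + drift * (1 - info_fraction)
    # The remaining data contribute noise with variance (1 - info_fraction)
    return 1 - nd.cdf((z_final_crit - expected_final) / sqrt(1 - info_fraction))

# Assumed interim results at the halfway point of a test
print(f"weak trend:   {conditional_power(0.2, 0.5):.3f}")
print(f"strong trend: {conditional_power(2.0, 0.5):.3f}")
```

A team might decide to stop once conditional power falls below some small threshold, say 10% (a hypothetical cut-off), and move the traffic to the next hypothesis.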

Chronology of Statistical Evolution in A/B Testing

The transition toward the AGILE method follows a clear historical trajectory:

- 1908: William Sealy Gosset, writing under the pseudonym "Student," publishes the work that introduces the t-test, providing a foundation for small-sample significance testing.

- 1930s-1950s: The Neyman-Pearson framework establishes the concepts of Type I and Type II errors, forming the "Classical" approach.

- 1969: Peter Armitage and colleagues publish seminal work on the dangers of repeated significance tests (peeking) in medical trials.

- 1990s-2000s: Clinical trials adopt group sequential designs and error-spending functions to allow for interim monitoring.

- 2010-2015: The "CRO Boom" leads to a massive increase in A/B testing, but mostly relies on simplified, often flawed, classical statistics.

- 2017-Present: The introduction of AGILE and Bayesian frameworks into marketing technology signals a move toward higher scientific standards in digital experimentation.

Supporting Data and Simulation Results

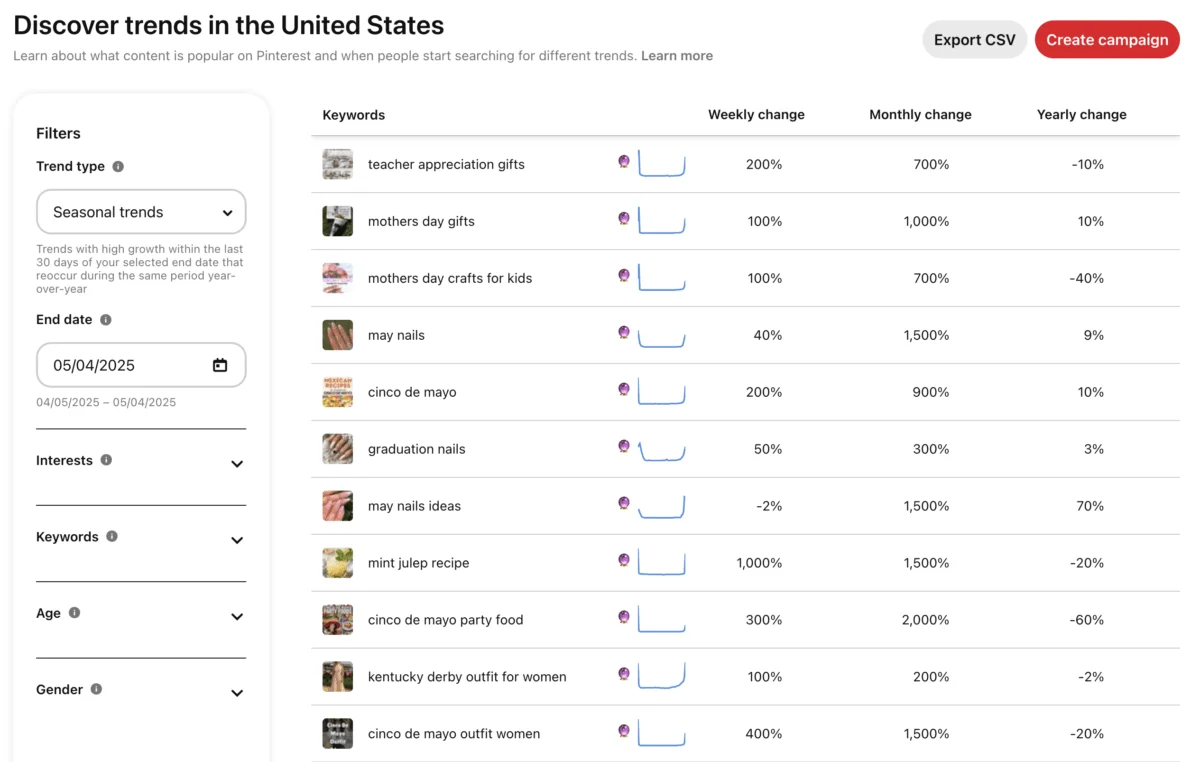

The validity of the AGILE method is supported by extensive Monte Carlo simulations. In scenarios where a true lift exists, AGILE tests consistently reach a conclusion faster than fixed-horizon tests.

For instance, consider a scenario where the baseline conversion rate is 2% and the goal is to detect a 10% relative lift with 90% power. A classical test requires about 88,000 users per variant. If the actual lift is 15% (higher than expected), an AGILE test can often conclude at around 40,000 users, saving roughly 55% of the required traffic.
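The fixed-horizon figure in this example can be approximated with the standard sample-size formula for comparing two proportions. The text does not state the significance level or whether the test is one-sided. The sketch below assumes a one-sided 5% level, which happens to reproduce a figure close to 88,000. `sample_size_per_variant` is a hypothetical helper name.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variant(p_control, relative_lift, power=0.90, alpha=0.05):
    """Users needed per variant for a one-sided two-proportion z-test."""
    nd = NormalDist()
    p_variant = p_control * (1 + relative_lift)
    z_alpha = nd.inv_cdf(1 - alpha)  # significance requirement (assumed one-sided)
    z_beta = nd.inv_cdf(power)       # power requirement
    variance_sum = p_control * (1 - p_control) + p_variant * (1 - p_variant)
    return ceil((z_alpha + z_beta) ** 2 * variance_sum / (p_variant - p_control) ** 2)

fixed_n = sample_size_per_variant(0.02, 0.10)
early_stop_n = 40_000  # early-stopping point quoted in the example above
print(f"fixed-horizon sample per variant: {fixed_n:,}")
print(f"traffic saved by stopping early:  {1 - early_stop_n / fixed_n:.0%}")
```

A two-sided 5% level would raise the requirement noticeably, so the assumption about sidedness matters when comparing numbers across tools.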

Conversely, if the lift is non-existent, the futility boundaries allow the test to be terminated early, preventing the "dragging on" of inconclusive experiments. While there is a slight "penalty" in the maximum possible sample size to account for the flexibility of interim looks, the average sample size across many tests is significantly lower than in the classical model.

Analysis of Implications for the Industry

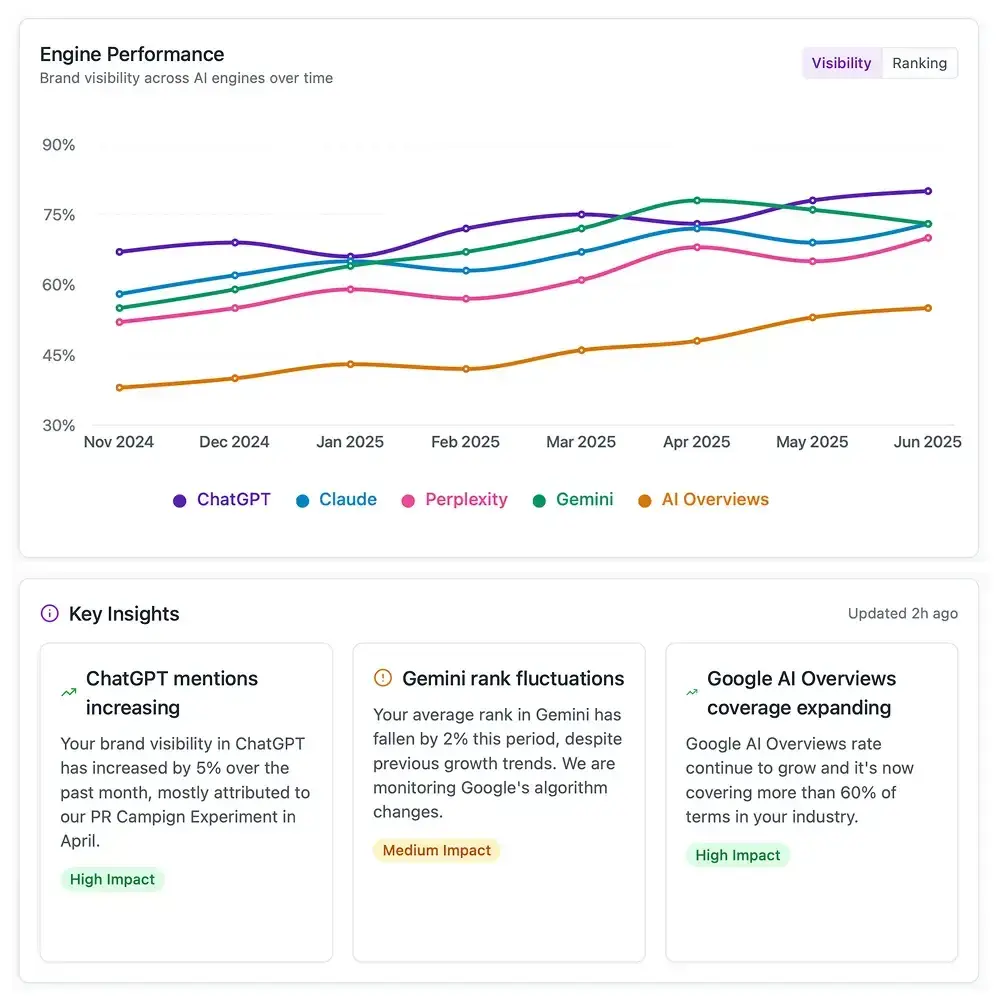

The adoption of more rigorous statistical methods like AGILE has profound implications for the structure of marketing teams and the technology they use.

1. Cultural Shift in CRO: Organizations must move away from "winning at all costs" and toward "learning with certainty." This requires educating stakeholders that a "neutral" test stopped for futility is a success, as it saves time and provides a statistically backed conclusion that a specific hypothesis does not work.

2. Tooling and Automation: Many popular A/B testing tools use "black-box" statistics. The rise of AGILE and similar frameworks is forcing software providers to be more transparent about their algorithms. The market is shifting toward tools with configurable parameters that let users define their own power, significance, and error-spending profiles.

3. Financial Impact: For high-traffic enterprises, the efficiency gains of AGILE translate directly to the bottom line. Reducing the time-to-market for a winning website variation by even one week can result in hundreds of thousands of dollars in incremental revenue.

Conclusion

As digital landscapes become increasingly competitive, the margin for error in experimentation continues to shrink. The AGILE statistical approach represents more than just a technical upgrade; it is a fundamental maturation of the digital marketing discipline. By embracing the rigor of clinical science and the flexibility of sequential analysis, practitioners can finally move past the "Garbage In, Garbage Out" era and enter a period of true, evidence-based growth. The transition from classical fixed-horizon testing to more dynamic, agile models is no longer a luxury for the elite tech giants—it is a necessity for any business that seeks to rely on data in an uncertain world.