LinkedIn, the world’s largest professional network, has launched Crosscheck, a tool that lets its Premium members in the U.S. evaluate and compare the latest artificial intelligence models from several leading providers. The platform aims to help professionals navigate the rapidly expanding AI landscape by offering a "taste test" for identifying the AI tool best suited to their needs, while passing anonymized feedback to AI developers for model improvement.

The Genesis of Crosscheck: Navigating the AI Proliferation

The rapid ascent of generative AI, epitomized by breakthroughs like OpenAI’s ChatGPT and Google’s Gemini, has ushered in an era of unprecedented technological transformation. Businesses and individual professionals are increasingly integrating AI into their workflows, from content generation and data analysis to coding and strategic planning. However, this proliferation of AI models, each with its unique strengths, weaknesses, and specialized applications, presents a significant dilemma: how does one choose the optimal tool from a crowded and constantly evolving market?

LinkedIn’s Crosscheck emerges as a direct response to this challenge. Developed by LinkedIn Labs, the initiative seeks to demystify the selection process by offering a centralized environment for model comparison, with blind testing used to limit bias as far as possible. The platform’s core philosophy is rooted in practical utility: users experience the outputs of different AI models firsthand, under identical prompt conditions, and then provide structured feedback based on real-world application. This approach positions Crosscheck not just as a comparison tool, but as a feedback loop that can accelerate the refinement and user-centric development of AI technologies. The "taste testing" concept reflects the subjective evaluation needed to match an AI tool’s capabilities with a user’s specific professional context and desired outcomes.

How Crosscheck Works: A User’s Journey Through Blind AI Evaluation

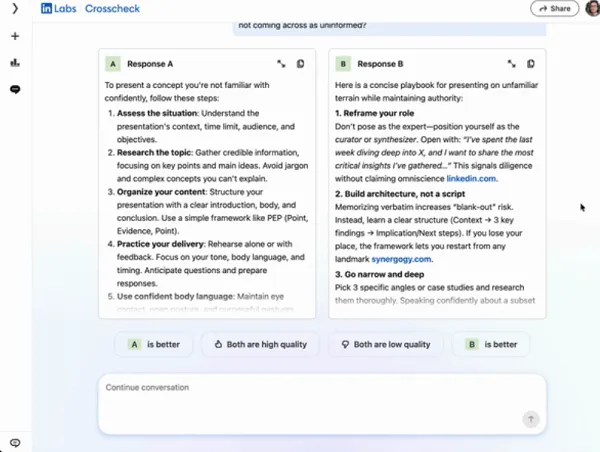

For LinkedIn Premium members in the U.S., accessing Crosscheck initiates a streamlined process designed for efficiency and insight. Upon entering the platform, users are prompted to submit any query or task they wish an AI model to perform. This prompt serves as the common denominator for the subsequent evaluation. Crosscheck then leverages its access to a diverse array of AI models from prominent providers such as OpenAI, Anthropic, Google, and Microsoft, among others.

Crucially, the system presents two distinct responses generated by different AI tools, without revealing the identity of the underlying models. This "blind" testing methodology is a cornerstone of Crosscheck’s design, aiming to eliminate pre-conceived notions or brand biases that might otherwise influence a user’s judgment. After reviewing the two anonymized outputs, users are asked to rate their quality based on criteria such as relevance, accuracy, coherence, and utility. This rating system provides granular data that directly informs the efficacy of each model for various types of queries.

The feedback mechanism extends beyond simple ratings. LinkedIn aggregates these user conversations and evaluations, then shares this anonymized, consolidated data directly with the respective AI model developers. This direct conduit of user experience data is invaluable for developers, offering real-world insights into how their models perform across a wide spectrum of professional use cases. It allows them to identify areas for improvement, refine algorithms, and better tailor their offerings to market demands, thereby fostering a continuous cycle of innovation and enhancement within the AI ecosystem.
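The blind comparison described above can be illustrated with a minimal Python sketch. It is not LinkedIn's implementation, which has not been published. The names `BlindPair`, `make_blind_pair`, and `reveal_ratings`, the neutral labels "A" and "B", and the stand-in model functions are all hypothetical, chosen only to show how two responses could be displayed without their model names and later mapped back for aggregation.

```python
import random
from dataclasses import dataclass


@dataclass
class BlindPair:
    prompt: str
    responses: dict  # neutral label -> response text shown to the user
    hidden: dict     # neutral label -> model name, never shown to the user


def make_blind_pair(prompt, models, rng):
    """Pick two distinct models and label their outputs "A" and "B".

    `models` maps a model name to a callable taking the prompt and
    returning text. These callables are hypothetical stand-ins for
    real provider calls.
    """
    # rng.sample returns two distinct names in random order, so neither
    # model is tied to a fixed display position.
    first, second = rng.sample(sorted(models), 2)
    hidden = {"A": first, "B": second}
    responses = {label: models[name](prompt) for label, name in hidden.items()}
    return BlindPair(prompt, responses, hidden)


def reveal_ratings(pair, ratings):
    """Translate per-label user ratings back into per-model ratings."""
    return {pair.hidden[label]: score for label, score in ratings.items()}


if __name__ == "__main__":
    demo_models = {
        "model_x": lambda p: "First draft for: " + p,
        "model_y": lambda p: "Second draft for: " + p,
        "model_z": lambda p: "Third draft for: " + p,
    }
    pair = make_blind_pair("Summarize this memo", demo_models, random.Random())
    print(pair.responses)                           # what the user sees
    print(reveal_ratings(pair, {"A": 4, "B": 2}))   # what gets aggregated
```

Keeping the label-to-model mapping separate from the displayed responses is the design point: the user rates only "A" and "B", and the identities are reattached afterward.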

The Participating AI Ecosystem: A Nexus of Leading Generative AI Providers

The strength of Crosscheck lies in its ability to draw from a robust and diverse pool of AI models. The inclusion of industry giants like OpenAI (creators of the widely acclaimed GPT series), Anthropic (known for their safety-focused Claude models), Google (developer of Gemini and its predecessors), and Microsoft (with its burgeoning Azure AI capabilities and Copilot integrations) ensures that users gain exposure to a broad spectrum of cutting-edge AI technologies. This comprehensive access is a significant advantage, as professionals often lack the resources or technical expertise to independently test and compare multiple sophisticated models.

OpenAI, backed by billions in investment from Microsoft, has been at the forefront of the generative AI revolution, setting benchmarks for natural language understanding and generation. Anthropic, founded by former OpenAI researchers, has distinguished itself with a strong emphasis on AI safety and constitutional AI principles. Google, a long-standing pioneer in AI research, brings its vast computational power and deep expertise in search and data processing to the table. Microsoft, beyond its investment in OpenAI, is aggressively developing its proprietary AI models and integrating AI across its extensive suite of enterprise products, from Microsoft 365 to Dynamics 365. The presence of these key players, alongside other emerging innovators, transforms Crosscheck into a veritable sandbox for AI exploration and competitive evaluation.

Leaderboards and Performance Metrics: Guiding Professional Choice

A standout feature of Crosscheck is its implementation of leaderboards. These dynamic rankings showcase the top-performing AI tools for various query types and industry verticals based on aggregated user ratings. For instance, a professional in marketing might find a leaderboard highlighting the best AI models for copywriting or content ideation, while a software developer might discover top performers for code generation or debugging assistance.
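As a rough illustration of how such rankings could be derived, the sketch below groups ratings by query category and orders models by their average score. The article does not say how Crosscheck aggregates ratings, so the simple mean, the function name `build_leaderboards`, and the `(category, model, score)` record shape are assumptions made for this example.

```python
from collections import defaultdict
from statistics import mean


def build_leaderboards(ratings):
    """Rank models per category by mean user score (assumed method).

    `ratings` is an iterable of (category, model, score) tuples; this
    record shape is hypothetical.
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for category, model, score in ratings:
        grouped[category][model].append(score)

    boards = {}
    for category, by_model in grouped.items():
        averages = [(model, mean(scores)) for model, scores in by_model.items()]
        # Highest average first; ties are broken alphabetically by model name.
        boards[category] = sorted(averages, key=lambda item: (-item[1], item[0]))
    return boards


if __name__ == "__main__":
    sample = [
        ("copywriting", "model_x", 5),
        ("copywriting", "model_x", 4),
        ("copywriting", "model_y", 3),
        ("code generation", "model_y", 5),
        ("code generation", "model_x", 2),
    ]
    for category, board in build_leaderboards(sample).items():
        print(category, board)
```

A production ranking would likely also need a minimum number of ratings per model before listing it, since an average over a handful of votes is noisy.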

These leaderboards serve multiple critical functions. Firstly, they provide transparent, data-driven recommendations, helping users quickly identify models that have demonstrated superior performance in contexts relevant to their work. This can significantly reduce the time and effort traditionally required to research and test multiple AI solutions. Secondly, the leaderboards foster a healthy competitive environment among AI developers, incentivizing them to continuously improve their models to rank higher and gain visibility among a coveted professional user base. Thirdly, for LinkedIn itself, these insights can be leveraged to guide its users towards the most effective AI tools, potentially shaping professional best practices and skill development pathways within its community. The granular data derived from user interactions and leaderboard performance could also inform LinkedIn’s own future AI-driven features and partnerships.

Strategic Alignment: LinkedIn’s Broader AI Vision

Crosscheck is not an isolated initiative but a strategic cornerstone within LinkedIn’s broader vision to embed AI deeply into the professional development journey of its 1 billion-plus members. The platform has been actively promoting the adoption of AI skills, recognizing that proficiency in AI will be a critical differentiator in the evolving job market. Indeed, the mantra circulating on LinkedIn, "AI isn’t going to take your job, but someone who uses AI will," underscores the urgency and importance of developing AI literacy.

LinkedIn has already integrated AI into various aspects of its platform, from personalized job recommendations and content suggestions to resume optimization and interview preparation tools. Crosscheck extends this commitment by providing a practical, hands-on learning environment. By allowing professionals to experiment with different AI models, LinkedIn aims to accelerate their understanding of AI’s capabilities and limitations, thereby fostering a more AI-fluent workforce. This initiative aligns with LinkedIn’s mission to connect the world’s professionals to make them more productive and successful, leveraging AI as a powerful enabler of that mission. The platform has also seen a surge in AI-related courses and certifications, reflecting a strong demand from its user base for upskilling in this domain.

The Microsoft-OpenAI Dynamic: Navigating Potential Influence

The financial and strategic ties between LinkedIn’s parent company, Microsoft, and OpenAI present an interesting dynamic within the Crosscheck ecosystem. Microsoft has invested billions into OpenAI, securing extensive rights to integrate OpenAI’s groundbreaking models into its own suite of products, including Azure OpenAI Service and Microsoft 365 Copilot. Simultaneously, Microsoft is also vigorously developing its proprietary AI models and research capabilities to reduce its reliance on any single provider, fostering an internal competitive environment.

This dual strategy raises questions about potential biases in a platform like Crosscheck. While the blind testing methodology is designed to ensure objectivity, the sheer scale of Microsoft’s investment and its strategic partnership with OpenAI might lead some to infer a leaning towards OpenAI’s tools. However, LinkedIn explicitly states that the leaderboards are "fairly diverse" and reflect a broad range of AI models, suggesting that performance, as rated by users, is the primary determinant. The transparency of the leaderboards, which are based on collective user feedback rather than editorial discretion, is intended to mitigate any perceived favoritism. Furthermore, the inclusion of formidable competitors like Anthropic and Google ensures a robust comparative environment, where meritocratic performance should ultimately prevail. This aspect of Crosscheck will be closely watched by industry analysts, particularly as the platform expands and gathers more data.

Broader Industry Context: The Promise vs. Reality of AI Productivity

While the enthusiasm for AI’s transformative potential is palpable across professional networks like LinkedIn, empirical data on its immediate impact on productivity offers a more nuanced picture. A recent study published by the National Bureau of Economic Research (NBER) provides a pertinent counterpoint to the widespread optimism. Surveying 6,000 business executives across the U.S., U.K., Germany, and Australia, the study found that 89% of respondents reported virtually no change in labor productivity over the past three years, despite widespread adoption of AI technologies within their organizations. While modest gains are projected for the future, the research highlights a current gap between the projected benefits of AI and the measured reality of improved efficiency.

This finding suggests that the mere adoption of AI tools does not automatically translate into productivity boosts. Effective integration, proper training, and a deep understanding of how to leverage AI for specific tasks are crucial. This is where a tool like Crosscheck could play a role. By helping professionals identify the most effective AI tools for their specific needs and providing a feedback loop for developers to improve those tools, Crosscheck could indirectly contribute to bridging this productivity gap. It moves beyond generic AI adoption to strategic, informed AI utilization. The challenge lies not just in having AI, but in using the right AI, correctly, to achieve measurable outcomes. As businesses continue to invest heavily in AI, platforms that facilitate intelligent selection and application are likely to become more important in realizing the promised efficiencies and innovations. Whether such platforms actually lead to demonstrable productivity gains is a question for future studies.

Expert Reactions and Future Outlook

Industry analysts anticipate a positive reception for Crosscheck, particularly from AI developers eager for structured, real-world user feedback. Such a platform could become a significant source of data for fine-tuning models, identifying unforeseen use cases, and understanding regional or industry-specific performance variations. For professionals, the value proposition of saving time and making informed decisions about AI tool investments is substantial. Early adopters among LinkedIn Premium members are expected to appreciate the curated and comparative experience, which removes much of the guesswork from AI selection.

Looking ahead, Crosscheck could evolve to include more granular comparison metrics, perhaps incorporating ethical AI considerations, data privacy features, or even cost-effectiveness analyses for enterprise-level models. The platform might also expand its geographical reach and integrate more specialized AI categories beyond general-purpose large language models, such as AI for design, data science, or cybersecurity. The aggregation of user-generated performance data could also become a valuable asset for LinkedIn, potentially leading to new services, partnerships, or even its own proprietary AI benchmarking tools. The success of Crosscheck will ultimately depend on its ability to maintain neutrality, continuously expand its model access, and effectively translate user feedback into actionable insights for the broader AI development community. If it does, it could solidify LinkedIn’s position at the nexus of professional development and technological innovation.

Conclusion

LinkedIn’s Crosscheck represents a significant step forward in democratizing access to and understanding of the burgeoning AI landscape. By offering a structured, blind testing environment for evaluating diverse AI models, the platform empowers professionals to make informed decisions about the tools best suited for their specific requirements. Simultaneously, it establishes a crucial feedback mechanism that promises to accelerate the refinement and improvement of AI technologies across the industry. As the professional world grapples with the transformative potential and practical challenges of AI integration, Crosscheck positions LinkedIn as a vital guide, helping its members not just to adapt to the AI shift, but to actively shape its trajectory towards more productive and impactful applications.