The landscape of digital experimentation has undergone a radical transformation as of 2026, marked by the total commoditization of test execution through generative artificial intelligence and automated workflows. In an era where AI can generate hypotheses, write front-end code for variants, and summarize complex statistical results in seconds, the traditional barriers to entry for A/B testing have effectively collapsed. However, industry experts warn that this ease of use has created a paradox: while more tests are being run than ever before, the value derived from them depends entirely on human-led rigor and disciplined decision-making. The competitive edge in the modern market no longer belongs to those who can test the fastest, but to those who can build a process that teams can consistently trust.

The Shift from Tactical Growth to Everyday Necessity

In the early 2020s, A/B testing was often viewed as a specialized growth tactic reserved for high-traffic e-commerce sites or dedicated optimization teams. By 2026, the fragmentation of customer journeys across dozens of touchpoints has forced a shift. Marketing systems are now built on frameworks like HubSpot’s Loop, where experimentation is embedded directly into the CRM and content management systems. This integration reflects a broader industry trend where "always-on" experimentation is the standard for maintaining relevance in a hyper-competitive digital economy.

The transition has been fueled by the declining cost of execution. Historically, running a single A/B test required significant coordination between data analysts, designers, and developers. In the current environment, AI-driven tools have reduced the "cost per experiment" to near zero. Yet, this efficiency has highlighted the "Garbage In, Garbage Out" (GIGO) effect. When inputs are weak—such as hypotheses based on shallow AI-generated personas rather than real customer data—the resulting optimizations often target non-existent audiences or solve problems that do not impact the bottom line.

A Chronology of Experimentation: From Buttons to Ecosystems

To understand the 2026 landscape, one must look at the progression of the field over the last decade. Between 2015 and 2020, the industry focused on "low-hanging fruit," primarily cosmetic changes like button colors and headline tweaks. The period between 2021 and 2024 saw the rise of full-stack experimentation, where companies began testing deeper product features and pricing models.

By 2025, the integration of Large Language Models (LLMs) into testing platforms allowed for the rapid generation of copy and design variants. Now, in 2026, the focus has shifted toward "triangulation": the synthesis of quantitative data, qualitative insights, and heuristic evaluations to ensure that experiments are grounded in reality. The goal is no longer just to "win" a test, but to understand the "why" behind user behavior and create repeatable success.

The ALARM Protocol: A Framework for Modern Rigor

As the volume of experiments increases, the risk of data corruption and strategic drift grows. To combat this, many organizations have adopted the ALARM protocol, a framework developed by GAIN Conversion to pressure-test experiment quality before a single line of code is deployed.

The protocol breaks down into five critical pillars:

- Alternative Executions: Instead of settling for the first solution an AI suggests, teams are encouraged to explore multiple ways to test a single hypothesis. This ensures the execution isn’t the reason a good idea fails.

- Loss Factors: This stage involves a pre-mortem analysis to identify why an experiment might fail—such as low visibility of the variant or conflicting site elements—allowing teams to remove friction before launch.

- Audience and Area: Precision in targeting is vital. Modern testing requires defining exactly who sees the experiment and where. This prevents the dilution of results that occurs when a change relevant only to a niche segment is measured across all site traffic.

- Rigor: This pillar applies psychological principles and data-driven insights to strengthen the experiment. It ensures that changes are not just random variations but are rooted in proven behavioral drivers like social proof or cognitive load reduction.

- MDE & MVE (Minimum Detectable Effect and Minimum Viable Experiment): This focuses on the smallest improvement worth detecting and the simplest version of the test required to gather data. This prevents "over-engineering" experiments that may not yield significant business value.
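The MDE pillar can be made concrete with a quick sample-size estimate. The sketch below is illustrative and not part of the ALARM protocol itself: it assumes a two-sided two-proportion z-test, and the function name `sample_size_per_arm` and the default `alpha` and `power` values are assumptions chosen for the example.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, relative_mde, alpha=0.05, power=0.8):
    """Approximate visitors needed per arm to detect a relative lift.

    Uses the standard normal-approximation formula for comparing two
    proportions with a two-sided test. All defaults are illustrative.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)          # rate if the lift is real
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)    # significance threshold
    z_beta = NormalDist().inv_cdf(power)             # desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


if __name__ == "__main__":
    # Hypothetical page with a 5% baseline conversion rate.
    for mde in (0.01, 0.05, 0.10):
        needed = sample_size_per_arm(0.05, mde)
        print(f"relative MDE {mde:.0%}: {needed:,} visitors per arm")
```

Because the required sample grows roughly with the inverse square of the effect size, halving the MDE roughly quadruples the traffic needed. That is why agreeing on the smallest lift worth detecting before launch matters.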

Statistical Foundations: Frequentist vs. Bayesian Models

The debate between Frequentist and Bayesian statistics remains a central theme in 2026. Frequentist methodology, the traditional backbone of randomized controlled trials, relies on a sample size fixed in advance and a significance threshold against which the p-value is compared. It is prized for its ability to de-risk decisions through a robust, if sometimes unintuitive, framework. However, fixed-horizon tests are vulnerable to "peeking": checking results early and stopping the test as soon as they look significant, which inflates the false-positive rate.
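The effect of peeking can be seen in a small simulation. The sketch below runs A/A tests, where both arms share the same true conversion rate, so every "significant" result is a false positive. The function names, the batch sizes, and the choice of a pooled two-proportion z-test are assumptions made for illustration.

```python
import math
import random
from statistics import NormalDist


def z_test_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation observed, so no evidence of a difference
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(experiments=500, looks=10, batch=100,
                      rate=0.10, alpha=0.05, seed=7):
    """Return (fixed_horizon_fpr, peeking_fpr) over simulated A/A tests."""
    rng = random.Random(seed)
    fixed_hits = peeking_hits = 0
    for _ in range(experiments):
        conv_a = conv_b = n = 0
        ever_significant = False
        significant_now = False
        for _look in range(looks):
            # Each look adds one batch of visitors to both arms.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            significant_now = z_test_p_value(conv_a, n, conv_b, n) < alpha
            ever_significant = ever_significant or significant_now
        fixed_hits += significant_now      # only the final planned look counts
        peeking_hits += ever_significant   # would have stopped at any "win"
    return fixed_hits / experiments, peeking_hits / experiments


if __name__ == "__main__":
    fixed, peeking = simulate_aa_tests()
    print(f"false-positive rate, single planned look: {fixed:.1%}")
    print(f"false-positive rate, stopping at any look: {peeking:.1%}")
```

Both rates are computed on the same simulated data, so the peeking rate can never fall below the fixed-horizon rate. In practice it is usually well above the nominal threshold.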

In contrast, Bayesian statistics offers a probabilistic approach that updates as data flows in. It asks, "Given the current data, what is the probability that Variation A is better than Variation B?" While more intuitive for stakeholders, Bayesian models require careful handling to avoid overstating causality in the early stages of a test. The consensus among lead experimenters, including Marcella Sullivan, is that the choice of framework matters less than the consistency with which it is applied.
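The Bayesian question can be answered directly with a few lines of simulation. The following is a minimal sketch, assuming a uniform Beta(1, 1) prior on each variation's conversion rate and Monte Carlo sampling from the posteriors. The function name and the example counts are hypothetical, and commercial platforms may use different priors or models.

```python
import random


def prob_a_beats_b(conv_a, n_a, conv_b, n_b, draws=20000, seed=11):
    """Estimate P(rate_A > rate_B | data) under Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for a conversion rate: Beta(1 + successes, 1 + failures).
        sample_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        sample_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += sample_a > sample_b
    return wins / draws


if __name__ == "__main__":
    # Hypothetical counts: A converted 150 of 1,000 visitors, B 100 of 1,000.
    print(f"P(A better than B) = {prob_a_beats_b(150, 1000, 100, 1000):.3f}")
```

With only a handful of visitors the same function still returns a probability, but it reflects the prior as much as the data. This is one reason early Bayesian readouts call for caution.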

Data Triangulation and Defensible Insights

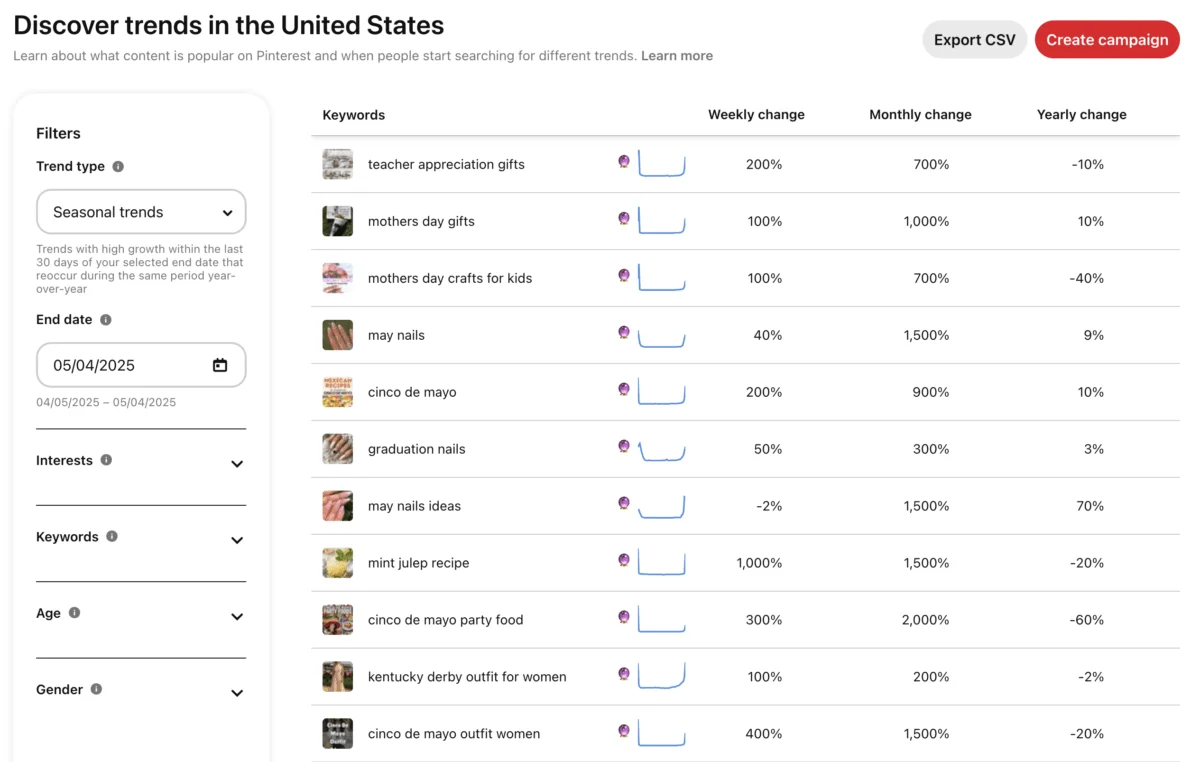

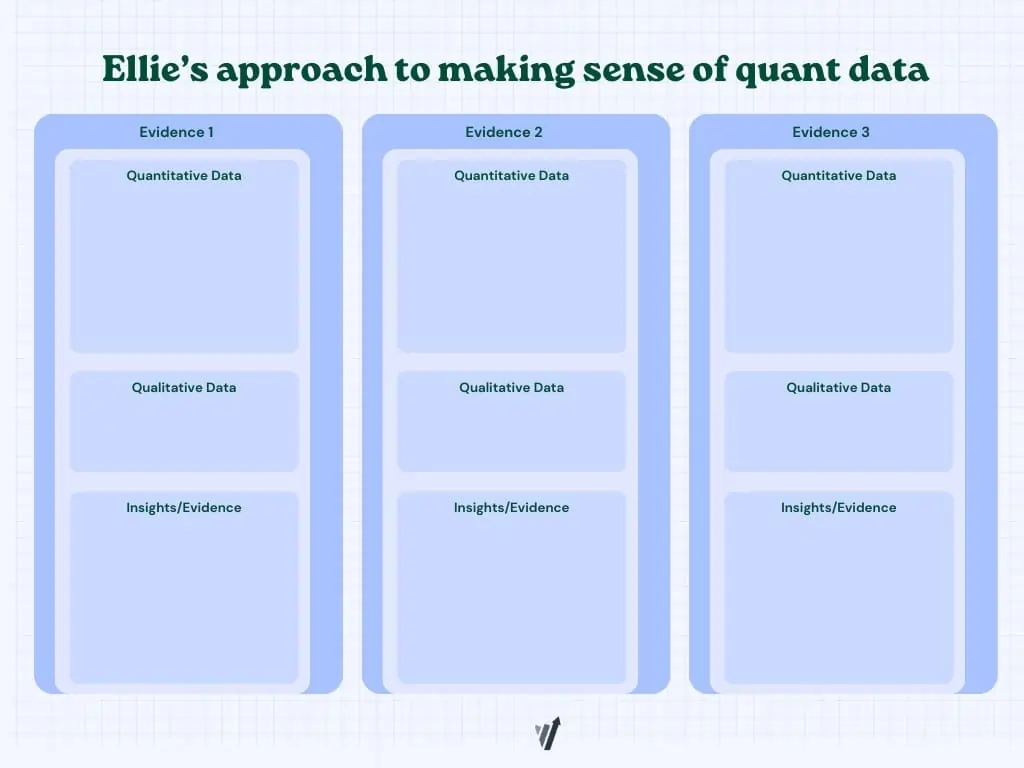

One of the most significant advancements in 2026 is the move toward data triangulation. Ellie Hughes, Head of Consulting at Eclipse Group, advocates for a "quantitative approach to qualitative data." This involves pairing heatmaps, session recordings, and customer interviews with hard conversion metrics.

For example, if quantitative data shows a drop-off at the checkout page, but qualitative session recordings show users struggling with a specific form field, the resulting hypothesis is significantly stronger than one based on a single data source. Triangulation ensures that insights are "defensible"—meaning they can withstand scrutiny from executives and provide a clear roadmap for implementation.

The Role of Generative AI: Support, Not Replacement

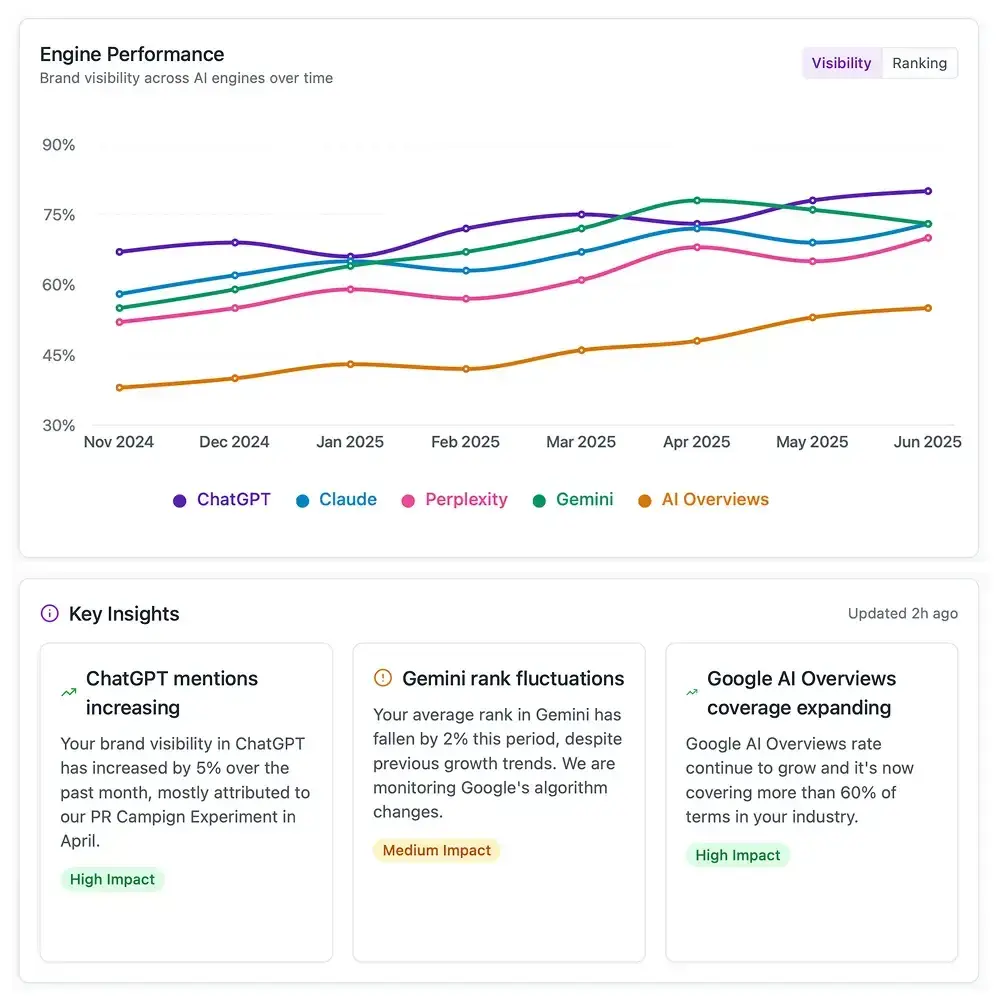

A recent survey of experimenters found that 83.3% use generative AI tools like ChatGPT or Claude on a daily basis. The primary use cases include analyzing large datasets, coding support, and low-fidelity prototyping. However, the industry remains wary of "AI hallucinations"—instances where models invent patterns or insights that are not supported by the raw data.

To mitigate these risks, the "human-in-the-loop" model has become standard. While AI can draft 50 different headline variations or surface anomalies in traffic data, human judgment is required to declare a winner and, more importantly, to interpret what a "loss" means for the brand’s long-term strategy. The best experimentation platforms in 2026 are those that prioritize transparency and provide access to raw data, allowing humans to verify AI-generated summaries.

Common Pitfalls and the High Cost of Poor Testing

Despite technological advances, certain errors continue to plague experimentation programs. The most common mistakes identified in 2026 include:

- Running Underpowered Tests: Attempting to detect a 1% lift on a page with low traffic leads to inconclusive results and wasted resources.

- Lack of Documentation: Failing to record why a test was run and what was learned leads to "institutional amnesia," where teams repeat the same failed experiments every two years.

- Ignoring Guardrail Metrics: Optimizing for a primary metric (like clicks) while ignoring a secondary metric (like return rate) can lead to short-term wins that cause long-term revenue damage.

- The "Winner" Bias: Shipping a variant that showed a marginal lift without considering the technical debt or implementation cost required to maintain it.
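The guardrail pitfall in particular lends itself to a simple pre-ship check. The sketch below is a hypothetical illustration: the `MetricResult` class, the `ship_decision` function, and the thresholds are all assumptions. It compares point estimates only, so a real program would also account for statistical uncertainty.

```python
from dataclasses import dataclass


@dataclass
class MetricResult:
    name: str
    control: float
    variant: float
    higher_is_better: bool = True

    def relative_improvement(self) -> float:
        """Relative change, signed so that positive always means 'better'."""
        change = (self.variant - self.control) / self.control
        return change if self.higher_is_better else -change


def ship_decision(primary, guardrails, min_lift=0.02, max_harm=0.01):
    """Return (ship, reasons); any listed reason blocks the rollout."""
    reasons = []
    if primary.relative_improvement() < min_lift:
        reasons.append(f"{primary.name}: lift below {min_lift:.0%}")
    for metric in guardrails:
        if metric.relative_improvement() < -max_harm:
            reasons.append(f"{metric.name}: degraded by more than {max_harm:.0%}")
    return (not reasons, reasons)


if __name__ == "__main__":
    # Hypothetical result: clicks improve, but the return rate gets worse.
    clicks = MetricResult("click_rate", control=0.10, variant=0.11)
    returns = MetricResult("return_rate", control=0.05, variant=0.06,
                           higher_is_better=False)
    ship, reasons = ship_decision(clicks, [returns])
    print("ship" if ship else "hold", reasons)
```

Writing down the guardrails and their tolerances before launch, as this structure forces, makes it harder to explain away a damaging side effect after a headline metric has "won."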

Broader Impact and the Outlook for 2027

As we look toward 2027, the DNA of experimentation is shifting toward server-side testing and deeper personalization. The ability to run experiments across devices—tracking a user from a mobile ad to a desktop purchase—is becoming the new frontier. Furthermore, as privacy regulations tighten, the reliance on first-party data and robust internal experimentation stacks will only increase.

The ultimate implication of the 2026 experimentation landscape is that the "technical" side of A/B testing has been solved. The challenge is now cultural. Organizations that succeed will be those that foster a culture of curiosity, where every "loss" is viewed as a valuable data point and every "win" is scrutinized for its practical significance. In the age of AI, the most valuable asset a company has is not its testing tool, but its ability to think critically about the data that tool produces.