Digital marketing and product development teams are increasingly finding themselves at a strategic crossroads as the traditional reliance on simple A/B testing begins to yield diminishing returns. While the practice of comparing two versions of a webpage or app element remains a cornerstone of conversion rate optimization (CRO), industry analysts and data scientists warn that an over-reliance on this single methodology is creating a "maturity plateau." As organizations strive for deeper growth, the conversation is shifting from "which version won" to a more complex understanding of user behavior, long-term business impact, and the integration of diverse experimental frameworks.

The Institutionalization of the A/B Testing Default

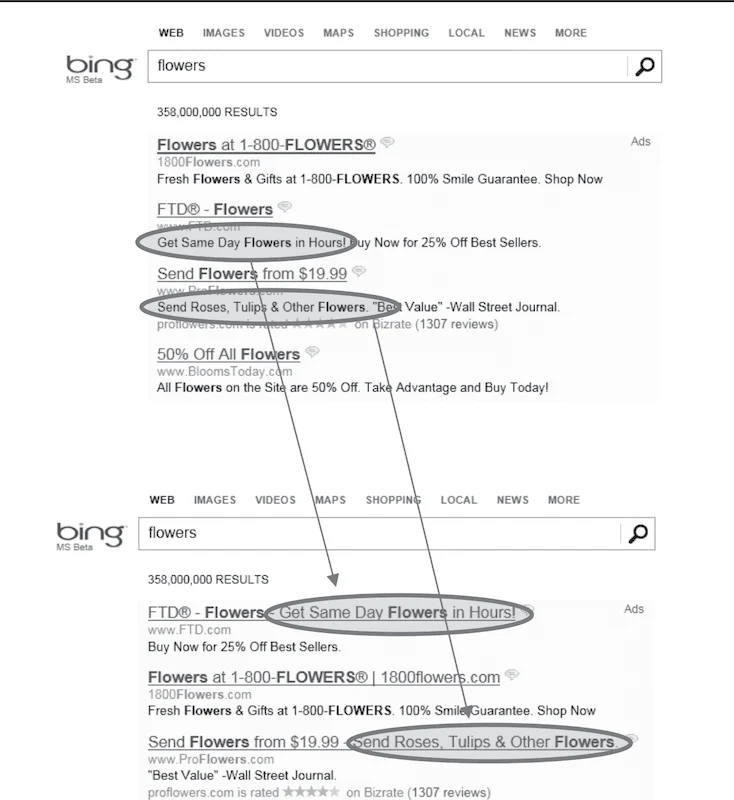

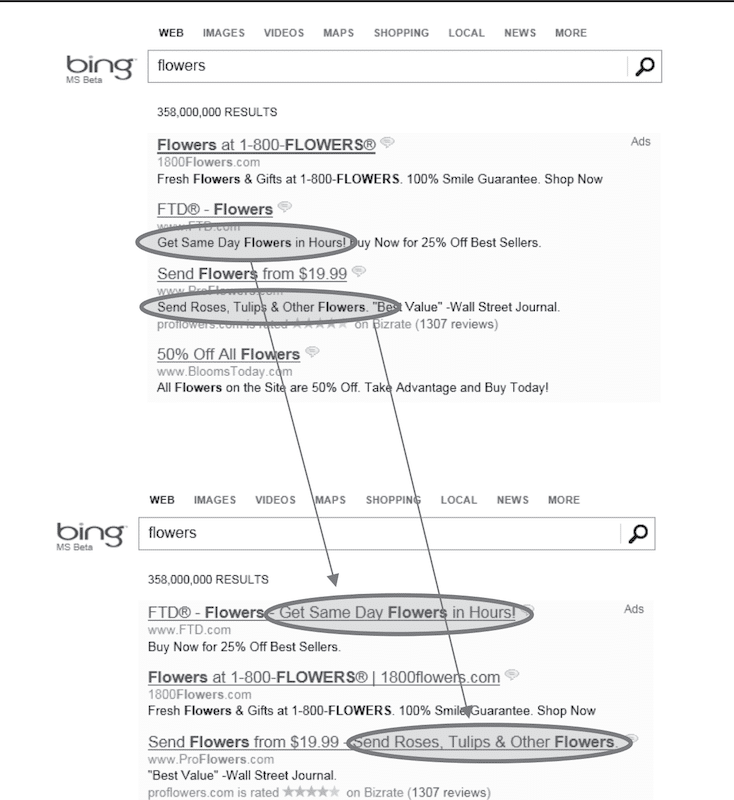

The ascent of A/B testing to its current status as the default decision-making tool began in the early 2010s, fueled by the success of major tech entities. Microsoft’s Bing team provides one of the most cited examples of the methodology’s potential. By testing the merging of two ad title lines into a single, longer headline, the team identified a change that generated more than $100 million in additional annual revenue. Such high-profile successes catalyzed a cultural shift across the tech sector, leading to the normalization of experimentation as a validation tool for even the most minor user interface (UI) adjustments. Today, Microsoft reportedly conducts more than 20,000 controlled experiments annually across its Bing platform alone.

The proliferation of experimentation platforms, such as FigPii, Optimizely, and VWO, further lowered the barrier to entry. These tools made it possible for marketing and product managers to launch tests without requiring deep expertise in statistics or data engineering. However, this accessibility has led to a lopsided ecosystem. Industry data suggests that approximately 77% of all digital experiments remain simple A/B tests involving only two variants. This preference for simplicity persists despite evidence that multivariate or multi-treatment designs often provide more nuanced insights into complex user interactions.

The Statistical Reality: The Problem of Power and Traffic

A primary challenge facing modern e-commerce brands is the lack of statistical power necessary to run reliable A/B tests. For a result to be trustworthy, the test needs a sample large enough to distinguish a genuine behavioral shift from random noise. When a team seeks to detect a modest improvement, such as a 1% or 2% lift in conversions, the required sample often reaches hundreds of thousands of visitors per variant.

For the vast majority of e-commerce sites, even those recording one to two million sessions per month, collecting that many visitors can take six to twelve weeks. This delay creates a "velocity trap" in which teams must choose among three suboptimal paths: running tests for months and slowing down innovation, ending tests early and acting on "underpowered" results that are likely to mislead, or focusing exclusively on massive changes that are risky and difficult to implement.
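The arithmetic behind these timelines can be sketched with the standard normal-approximation formula for comparing two conversion rates. This is a minimal illustration, not a replacement for a testing platform's own calculator. The 3% baseline conversion rate, the reading of "lift" as a relative lift, the 5% significance level, the 80% power target, and the weekly traffic figure are all assumptions chosen for the example; the function names are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided
    two-proportion z-test (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_complete(n_per_variant, weekly_visitors, variants=2):
    """Whole weeks needed to fill every variant at the given traffic level."""
    return math.ceil(n_per_variant * variants / weekly_visitors)


# Assumed scenario: 3% baseline conversion, 2% relative lift,
# about 350,000 eligible visitors per week (roughly 1.5M per month).
n_needed = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.02)
duration_weeks = weeks_to_complete(n_needed, weekly_visitors=350_000)
print(f"{n_needed:,} visitors per variant, about {duration_weeks} weeks")
```

Under these assumed inputs the requirement comes out at well over a million visitors per variant. Because the required sample scales with the inverse square of the detectable difference, halving the target lift roughly quadruples the traffic needed.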

Furthermore, analysts point out that A/B tests are inherently designed to answer narrow, micro-level questions. While they are effective at determining which button color or headline copy performs better in the immediate term, they are fundamentally ill-equipped to address systemic business questions. Questions about brand trust, pricing elasticity, navigation architecture, and product-market fit cannot be answered by isolating a single variable on a single page.

The Blind Spots of Data: Survivorship Bias in the Funnel

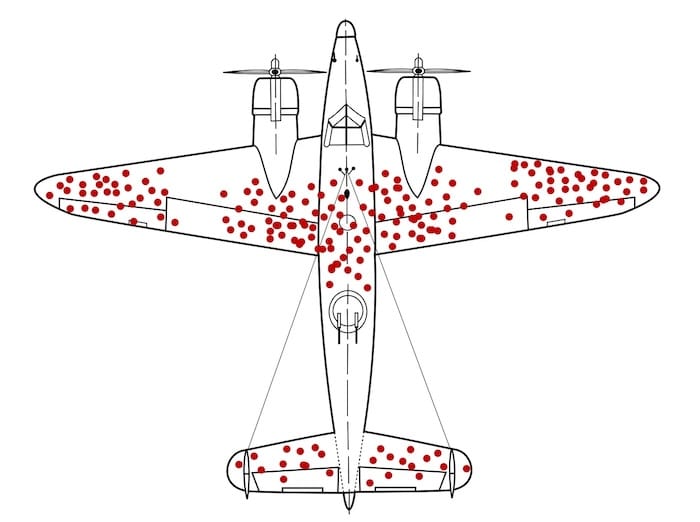

One of the most significant criticisms of modern A/B testing is its tendency to focus on what happened rather than why it happened. In the absence of qualitative context, teams often fall victim to survivorship bias—a concept famously illustrated during World War II by statistician Abraham Wald. When the military analyzed returning aircraft to determine where to add armor, they initially focused on the areas riddled with bullet holes. Wald pointed out that they were only seeing the planes that survived; the planes hit in the engines never returned.

In the digital funnel, A/B test results center on the "survivors," the users who completed the journey. The test counts the users who dropped off, but it offers little to no visibility into why they left. A variant might "win" not because it improved the user experience, but because it was the less confusing of two flawed options. Without pairing quantitative test results with qualitative tools like session recordings, heatmaps, and user feedback, organizations risk optimizing "bullet holes" while ignoring the structural vulnerabilities that cause the majority of their traffic to exit the funnel.

The Conflict Between Short-Term Uplift and Long-Term Value

A growing concern among high-maturity CRO teams is the misalignment between short-term test "wins" and long-term business health. A/B tests typically measure immediate actions: clicks, add-to-cart rates, or same-session purchases. However, these metrics can be deceptive.

A classic example from behavioral research is the "Jam Experiment" (Iyengar and Lepper, 2000) on the paradox of choice. A display offering a wide variety of options (24 flavors of jam) drew more initial engagement, the in-store equivalent of "clicks," than a smaller selection (6 flavors). Yet shoppers who stopped at the smaller display were roughly ten times as likely to buy. In a standard A/B test focused on click-through rates, the 24-flavor variant would be declared the winner, despite being detrimental to the bottom line.
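The gap between the two metrics is easy to see once both stages of the funnel are multiplied through. The sketch below uses the figures commonly reported from the original jam study (about 60% of passers-by stopping at the large display versus 40% at the small one, and about 3% versus 30% of those who stopped going on to buy). Those numbers do not appear in this article and are used here only as illustrative inputs; the `Variant` class is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    engagement_rate: float  # share of passers-by who stop (the "click")
    purchase_rate: float    # share of those who stopped who then buy

    @property
    def purchases_per_visitor(self) -> float:
        # End-to-end conversion: both funnel stages multiplied together.
        return self.engagement_rate * self.purchase_rate


large = Variant("24 flavors", engagement_rate=0.60, purchase_rate=0.03)
small = Variant("6 flavors", engagement_rate=0.40, purchase_rate=0.30)
variants = [large, small]

# The same data crowns a different winner depending on the metric chosen.
winner_by_engagement = max(variants, key=lambda v: v.engagement_rate)
winner_by_purchases = max(variants, key=lambda v: v.purchases_per_visitor)

print("Winner on engagement:", winner_by_engagement.name)
print("Winner on purchases per visitor:", winner_by_purchases.name)
```

With these inputs the large display wins on engagement while the small display wins on purchases per visitor, which is why the choice of primary metric matters more than the test mechanics.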

Similarly, aggressive tactics like countdown timers or intrusive pop-ups may secure a short-term conversion lift but can damage brand equity, reduce customer lifetime value (LTV), and increase return rates. High-maturity organizations are now shifting their primary metrics away from simple conversion rates toward profit per visitor, retention rates, and long-term customer loyalty.

The Methodology of High-Maturity Teams

To overcome the limitations of standard A/B testing, leading organizations are adopting a broader "experimentation toolkit." This transition involves moving beyond binary tests to more sophisticated designs:

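- Sequential Testing and Holdout Groups: Sequential testing lets teams check results at planned intervals and stop as soon as the evidence is strong enough, without inflating the false-positive rate. Holdout groups keep a small share of users on the original experience so teams can measure the cumulative effect of multiple changes over a longer period, ensuring that short-term gains do not evaporate over time.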

- Switchback Tests: Used frequently by marketplaces and logistics companies (like Uber or DoorDash), these tests alternate treatments over time windows across a whole system to account for network effects and user interactions.

- Quasi-Experiments: When a randomized controlled trial is impossible or unethical (such as changing prices for only half of a customer base), teams use quasi-experiments to compare different geographical markets or time periods.

- Evidence-Led Hypotheses: Rather than testing "random ideas," mature teams follow a rigorous research phase. A high-quality hypothesis is now expected to follow a specific structure: "Because [research evidence], we believe [user problem], so we will [change], and expect [metric] to improve."
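Two of the designs in this list can be illustrated briefly. The sketch below shows a simple alternating switchback schedule and a difference-in-differences estimate, which is one common way to analyze a quasi-experiment that compares markets or time periods. It is a simplified illustration under stated assumptions: production switchback designs often randomize the window order rather than strictly alternating, and difference-in-differences assumes the treated and control markets would have moved in parallel without the change. All function names and sample figures are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import mean


def switchback_schedule(start, n_windows, window_minutes=60):
    """Alternate control and treatment across consecutive time windows
    for the whole system, rather than splitting individual users."""
    arms = ("control", "treatment")
    return [
        (start + timedelta(minutes=i * window_minutes), arms[i % 2])
        for i in range(n_windows)
    ]


def difference_in_differences(treated_before, treated_after,
                              control_before, control_after):
    """Change in the treated market minus change in the control market."""
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


schedule = switchback_schedule(datetime(2024, 1, 1), n_windows=4, window_minutes=30)

# Hypothetical weekly orders in two markets, before and after a price change
# that was rolled out in the treated market only.
effect = difference_in_differences(
    treated_before=[100, 102, 98],
    treated_after=[112, 110, 114],
    control_before=[90, 92, 88],
    control_after=[95, 97, 93],
)
print(schedule)
print("Estimated effect:", effect)
```

In this made-up data the treated market rises by 12 orders and the control market by 5, so the estimate attributes 7 orders per week to the change rather than the full 12.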

Implications for the Future of E-commerce

The shift away from "A/B testing for everything" represents a professionalization of the CRO industry. Analysts suggest that the next phase of digital growth will be defined by "Big Lever" testing. Instead of polishing minor UI elements, teams are focusing on the core drivers of human decision-making: message clarity, value proposition, and trust signals.

For instance, rather than testing the color of a "Buy Now" button, a mature team might test the entire information architecture of a product page—reordering how technical specs, social proof, and shipping information are presented based on user anxiety points identified in support tickets.

The broader implication for the business world is a move toward "Trustworthy Online Controlled Experiments." As outlined by experts like Kohavi, Tang, and Xu, the goal of experimentation is no longer just to find a "winner," but to build a reliable knowledge base that informs long-term corporate strategy.

Conclusion: Building a Resilient Experimentation Program

The consensus among industry leaders is that A/B testing is a vital tool, but it cannot be the entire toolbox. To build a resilient experimentation program, organizations must invest in qualitative research, acknowledge the limitations of their traffic levels, and align their experiments with actual business outcomes rather than vanity metrics.

As digital competition intensifies and the cost of customer acquisition continues to rise, the ability to diagnose "why" a user behaves a certain way will become more valuable than the ability to run a "what" test. The organizations that thrive will be those that view experimentation not as a series of isolated tactical wins, but as a holistic strategy for understanding and serving the customer journey. For many, this means the era of the simple A/B test is ending, and the era of comprehensive experimentation is just beginning.